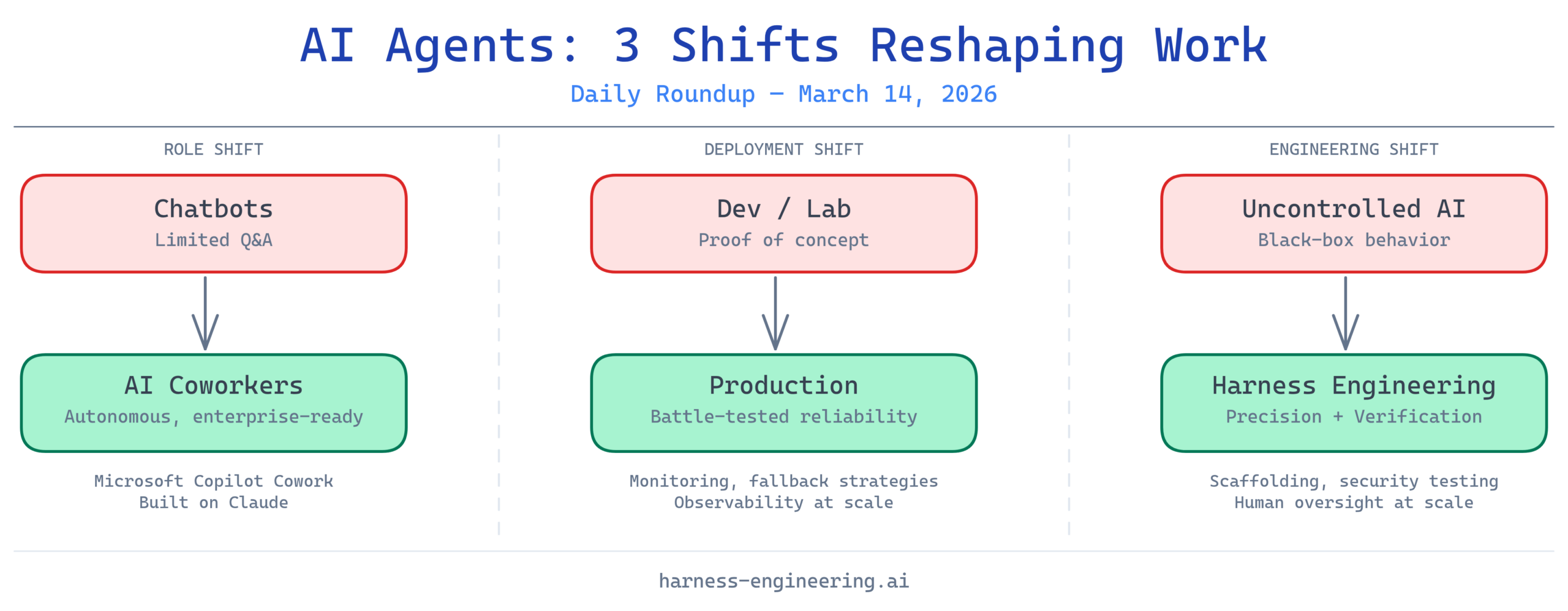

The AI agent landscape continues to accelerate at a breakneck pace. As these systems transition from experimental proof-of-concepts to genuine workplace productivity tools, the engineering community is grappling with critical questions: How do we deploy them reliably? How do we secure them? And how do we manage the cognitive load of orchestrating multiple agents simultaneously? Today’s roundup explores the practical realities of the AI agent era, from lessons learned in production environments to the emerging field of harness engineering—a discipline focused on supervising and controlling AI systems with precision and verification.

1. Lessons From Building and Deploying AI Agents to Production

Real-world deployments reveal hard truths that laboratory experiments don’t capture. This video distills practical lessons from teams who have already navigated the minefield of bringing AI agents into production, covering everything from architecture decisions to failure modes and recovery strategies. For engineers considering their first production AI agent deployment, these battle-tested insights provide an invaluable roadmap that can save months of painful learning curves.

The transition from development to production requires more than scaling up—it demands a fundamentally different approach to reliability, observability, and graceful degradation. Teams sharing their experiences emphasize the importance of robust monitoring, clear fallback mechanisms, and the need to establish trust with stakeholders who may be skeptical of AI-driven automation. These lessons underscore why harness engineering has become essential: without proper oversight mechanisms, even well-intentioned AI agents can cause unexpected downstream damage.

2. Test Your AI Agents Like a Hacker – Automated Prompt Injection Attacks

As AI agents gain agency and access to critical systems, adversarial testing becomes a non-negotiable requirement. This exploration of automated prompt injection attacks reveals vulnerabilities that most teams haven’t even considered, let alone defended against. The ability to probe AI systems for weaknesses—and to do so automatically at scale—is emerging as a critical capability for security-conscious organizations.

Prompt injection attacks represent a unique class of vulnerability where seemingly innocent user inputs can be weaponized to override an AI agent’s intended behavior. Whether an attacker is trying to exfiltrate data, manipulate outputs, or hijack the agent’s reasoning process, understanding these attack vectors is fundamental to responsible AI deployment. The video’s focus on automated attacks is particularly important: manual testing won’t scale to the complexity of modern AI agents, so teams need frameworks that can systematically stress-test their systems before they reach production.

3. AI Agents Just Went From Chatbots to Coworkers

The narrative around AI has fundamentally shifted. Where we once talked about “chatbots” providing customer service, we now talk about “AI coworkers” handling substantive business logic, collaborating with humans on complex problems, and operating with genuine autonomy within defined boundaries. This conceptual upgrade reflects a real change in how organizations are deploying AI agents—not as novelties or supplements, but as core components of their workforce.

This transition carries significant implications for harness engineering. A chatbot that sometimes gives unhelpful answers is a minor annoyance; an AI coworker that makes critical decisions without proper verification is a liability. Companies like Microsoft signaling this shift with products built to handle real office work indicates that the market is moving toward agent systems that require sophisticated supervision mechanisms. The stakes are higher, and so is the demand for precision in how we oversee these systems.

4. How I Eliminated Context-Switch Fatigue When Working With Multiple AI Agents in Parallel

For teams operating multiple AI agents simultaneously, context switching becomes a genuine source of friction and cognitive fatigue. This discussion from the AI agents community addresses a practical pain point: managing the mental overhead of juggling different agents, each with its own state, configuration, and output patterns. The solutions shared reveal emerging best practices around agent orchestration and unified interfaces.

The problem highlights why harness engineering extends beyond just security and reliability—it also encompasses usability and cognitive ergonomics. When humans need to supervise multiple agents, the interface through which they do so dramatically affects their ability to catch errors, understand intent, and intervene effectively. Poorly designed oversight mechanisms create blind spots; well-designed ones amplify human judgment by presenting information clearly and enabling quick, informed decisions across all active agents.

5. Microsoft Just Launched an AI That Does Your Office Work for You — and It’s Built on Anthropic’s Claude

Microsoft’s introduction of Copilot Cowork marks a watershed moment in enterprise AI deployment. By building on Claude’s proven capabilities and integrating AI agents directly into the office software suite millions of workers use daily, Microsoft is demonstrating that the market is ready for AI systems that handle real work—not hypothetical scenarios or toy problems. The choice to build on Anthropic’s Claude signals confidence in the model’s reliability and safety characteristics.

This launch validates the market thesis that AI agents will become embedded in everyday workflows, which immediately elevates the importance of harness engineering. When AI is handling financial reports, project management, and internal communications at enterprise scale, the cost of a single mistake can be substantial. Organizations deploying these tools need comprehensive frameworks for verification, auditing, and human oversight—exactly what harness engineering provides.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering, and Deployment

For developers, AI coding agents represent both tremendous opportunity and significant risk. This technical deep-dive explores how to architect these agents with proper scaffolding, harness mechanisms, and context engineering to enable them to write reliable code without introducing vulnerabilities or maintainability nightmares. The terminal environment—where agents can directly execute commands—adds particular risk, making harness engineering not just important but essential.

The focus on scaffolding and context engineering reflects a maturing discipline: rather than simply unleashing a large language model on a codebase, sophisticated teams build structured interfaces that guide agent behavior toward safer, more predictable outcomes. This might include constraints on what files can be modified, requirements for generating tests alongside code, or mechanisms to prevent agents from executing commands without human approval. These structures—the “harness”—are what separate responsible AI deployment from reckless automation.

7. Harness Engineering: Supervising AI Through Precision and Verification

This is where the rubber meets the road. As AI systems become more capable and more widely deployed, the ability to supervise them—to verify their outputs, catch their errors, and maintain human control over outcomes that matter—becomes a core engineering discipline. Harness engineering is the systematic study of how to build these oversight mechanisms: the scaffolding, verification systems, and control structures that allow humans to confidently delegate work to AI agents.

Precision and verification are the watchwords here. Rather than hoping an AI agent will behave correctly, harness engineering asks: How can we ensure it behaves correctly? What guarantees can we build into the system? Where do we need human judgment, and how do we make it easy for humans to provide that judgment at scale? These questions define the cutting edge of AI operations, and they’re increasingly critical as the stakes rise and the complexity of AI systems grows.

8. AI Agents: Skill & Harness Engineering Secrets REVEALED! #shorts

Even in a short-form video format, the interplay between skill engineering (making AI agents better at their intended tasks) and harness engineering (ensuring they do only their intended tasks reliably) emerges as a central theme. Both capabilities are necessary, and neither is sufficient on its own. An AI agent with tremendous skill but no harness is unpredictable; an agent with heavy harness but limited skill is uselessly constrained.

The framing of harness engineering as a “secret” worth revealing suggests that many teams are still underestimating its importance. As AI agents proliferate, organizations that invest early in harness engineering—in building the verification, supervision, and control mechanisms their agents need—will gain a competitive advantage in reliability and trustworthiness. This isn’t sexy work, but it’s foundational to the responsible scaling of AI in the enterprise.

Takeaway: The Era of Supervised AI Agents

The convergence of these stories points to a clear evolution: AI agents are graduating from experimental technology to operational systems that handle real work. With that transition comes new responsibilities. Teams deploying agents in production must learn from those who came before, security researchers must stay ahead of evolving attack vectors, and engineers must master the discipline of harness engineering—the art and science of supervising AI systems with precision.

The good news is that this discipline is maturing. Frameworks for testing, deployment patterns from production teams, and emerging best practices around agent orchestration and verification are all becoming available. The challenge for teams building with AI agents today is to absorb these lessons early, invest in proper oversight mechanisms, and recognize that the difference between a powerful tool and a risky system often comes down to the quality of the harness.

What trends are you watching in AI agent deployments? Share your experiences in the comments—what lessons have you learned about harness engineering in your own work?