The AI agent landscape is evolving rapidly, and today’s news cycle reflects a critical inflection point: AI agents are transitioning from experimental tools to production-grade components of enterprise workflows. What’s particularly striking is how much attention is being paid to the engineering practices that make this transition possible—specifically harness engineering, context management, and security considerations. Here’s what you need to know.

1. Lessons From Building and Deploying AI Agents to Production

Source: YouTube

The journey from prototype to production for AI agents is rarely straightforward, and this discussion captures the hard-won lessons from teams who’ve made the transition successfully. The key takeaway is that production deployment requires more than just functional code—it demands rigorous testing, careful monitoring, and deep understanding of how agents behave at scale. Practitioners emphasize that context engineering and proper harness design are non-negotiable prerequisites, not nice-to-have optimizations.

The significance here is that production lessons are being documented and shared systematically. Teams are moving beyond “it works in demo” to asking the right questions: How do we ensure consistent behavior? How do we catch failure modes before they reach users? How do we maintain agent performance as complexity increases? These aren’t hypothetical questions anymore—they’re blocking large-scale AI agent adoption.

2. Test Your AI Agents Like a Hacker – Automated Prompt Injection Attacks

Source: YouTube

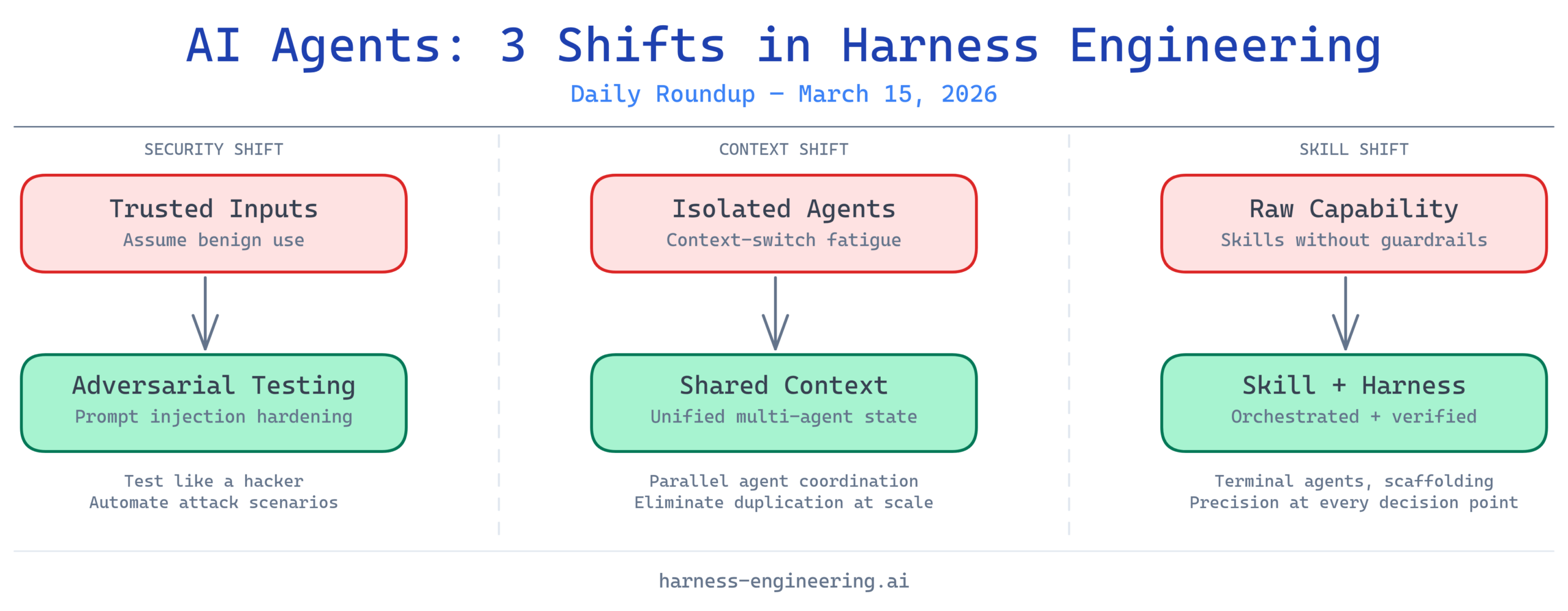

Security vulnerabilities in AI agents are no longer theoretical concerns—they’re active threats that enterprises must account for. Prompt injection attacks represent one of the most dangerous vectors, and this coverage of automated testing for these vulnerabilities is timely and essential. Organizations deploying AI agents need adversarial testing frameworks that simulate realistic attack scenarios to understand where their harnesses are weak.

The critical insight is that security-hardened harnesses aren’t built through wishful thinking; they require systematic, creative testing. By adopting hacker-like thinking—testing what happens when inputs are deliberately malicious—teams can identify and patch vulnerabilities before deployment. This approach treats harness design as an active security discipline, not a static specification. For organizations building agents that interact with sensitive data or systems, this is non-negotiable.

3. AI Agents Just Went From Chatbots to Coworkers

Source: YouTube

The framing here captures a fundamental shift in how AI agents are perceived and deployed. They’re no longer conversational toys—they’re autonomous workers integrated into organizational workflows. This reflects a maturation in both agent capability and deployment strategy, where the focus shifts from “what can an AI do?” to “how do we integrate AI into teams?” This transition has massive implications for harness engineering.

When agents are coworkers, the stakes change dramatically. They need to be reliable, auditable, and aligned with team processes. They need to understand context deeply, maintain consistency across interactions, and integrate cleanly with human workflows. The engineering required to make this work—proper context windows, transparent decision-making, reliable task completion—is precisely what harness engineering addresses. This news item signals that the industry is collectively realizing these capabilities are essential, not optional.

4. How I Eliminated Context-Switch Fatigue When Working with Multiple AI Agents in Parallel

Source: Reddit

Managing multiple AI agents simultaneously creates cognitive overload and operational fragmentation. This discussion addresses a real pain point: when you’re working with multiple agents, you need ways to maintain coherent context, track state across different agents, and avoid duplicating effort. The solutions shared here—likely involving shared context architectures, unified interfaces, and better state management—are directly applicable to enterprise deployments.

This is particularly relevant for organizations running specialized agents for different tasks. Instead of context constantly switching between agent instances, well-designed harnesses should support shared context layers that allow agents to understand the broader work environment. This is a practical implementation challenge that separates amateur deployments from professional ones, and it’s encouraging to see practitioners discussing concrete solutions.

5. Microsoft Just Launched an AI That Does Your Office Work for You — and It’s Built on Anthropic’s Claude

Source: Reddit

Microsoft’s launch of Copilot Cowork represents a watershed moment: major enterprises are now shipping AI agents into production workflows at scale. The fact that it’s built on Claude—a model known for advanced reasoning and instruction-following—signals confidence in using capable models with careful harness design as the primary control mechanism. This isn’t about releasing raw AI capability; it’s about shipping harness-engineered agents designed for specific workflows.

The implication for the harness engineering community is clear: production deployments of AI agents are happening now, and their success depends entirely on how well they’re harnessed. Copilot Cowork’s success won’t be determined by model capability alone—it will be judged on reliability, coherence, safety, and integration with existing office tools. Every friction point users experience is a failure of harness design, not model limitation. This puts tremendous emphasis on getting the engineering right.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering, and More

Source: YouTube

AI coding agents operating in terminal environments represent a particularly demanding use case—they need to understand code context deeply, make autonomous decisions about file modifications, and operate reliably in an environment with minimal error recovery. This content directly addresses the architectural foundations required: scaffolding for structured agent operation, harness frameworks for reliable execution, and sophisticated context engineering to maintain understanding across file systems and code structures.

Terminal-based agents are an excellent real-world test of harness engineering principles because the feedback is immediate and unforgiving. If an agent misunderstands context or makes a wrong decision, the results are visible instantly. This practical laboratory is producing insights about what robust harness design actually looks like—not theoretical frameworks, but proven patterns for keeping agents aligned and effective.

7. Harness Engineering: Supervising AI Through Precision and Verification

Source: YouTube

This directly addresses the core discipline: how do we actually supervise AI systems to ensure they behave as intended? The emphasis on precision and verification reflects a mature understanding that good intentions aren’t enough—we need measurable frameworks for evaluating agent behavior, detecting drift, and catching failures before they escalate. This is harness engineering in its most essential form: building the verification infrastructure that makes AI systems trustworthy.

Precision engineering here refers to exact specification of what agents should do, explicit instrumentation of their decision-making, and detailed logging of their behavior. Verification means having automated and manual checks that confirm agents are operating within expected parameters. Together, these create the foundation for AI systems that can be deployed with confidence into production environments. As AI agents become more autonomous, this oversight infrastructure becomes more, not less, important.

8. AI Agents: Skill & Harness Engineering Secrets REVEALED! #shorts

Source: YouTube

The interplay between skill engineering and harness engineering is crucial but often misunderstood. Skills are the capabilities agents have—the tools, knowledge, and abilities they can deploy. Harness engineering is how those skills are orchestrated, constrained, and verified. Neither works without the other. Powerful agents with weak harnesses are dangerous; well-harnessed agents with limited skills are underutilized. This content (even in short-form) captures an important insight: both dimensions matter equally.

The “secrets” framing suggests there are non-obvious principles that experienced practitioners understand but aren’t widely documented. These likely include patterns around context window optimization, decision tree design, error recovery, and feedback loops. As the industry matures, making these patterns explicit and teachable will be essential for scaling AI agent adoption.

Key Takeaway

March 15, 2026 marks a clear moment of inflection in AI agent maturity. The conversation has shifted from capability to deployment, from “what can we do?” to “how do we do it reliably?” The consistent theme across today’s news cycle is that production-grade AI agents require sophisticated engineering practices—harness design, context management, security hardening, and verification frameworks. Organizations that master these disciplines will successfully deploy capable AI agents; those that skip these steps will face failures, security issues, and user distrust. The future of AI isn’t just about better models; it’s about better engineering practices that make those models reliably useful in the real world.

What stories are you following in the AI agent space? Share your thoughts in the comments below.