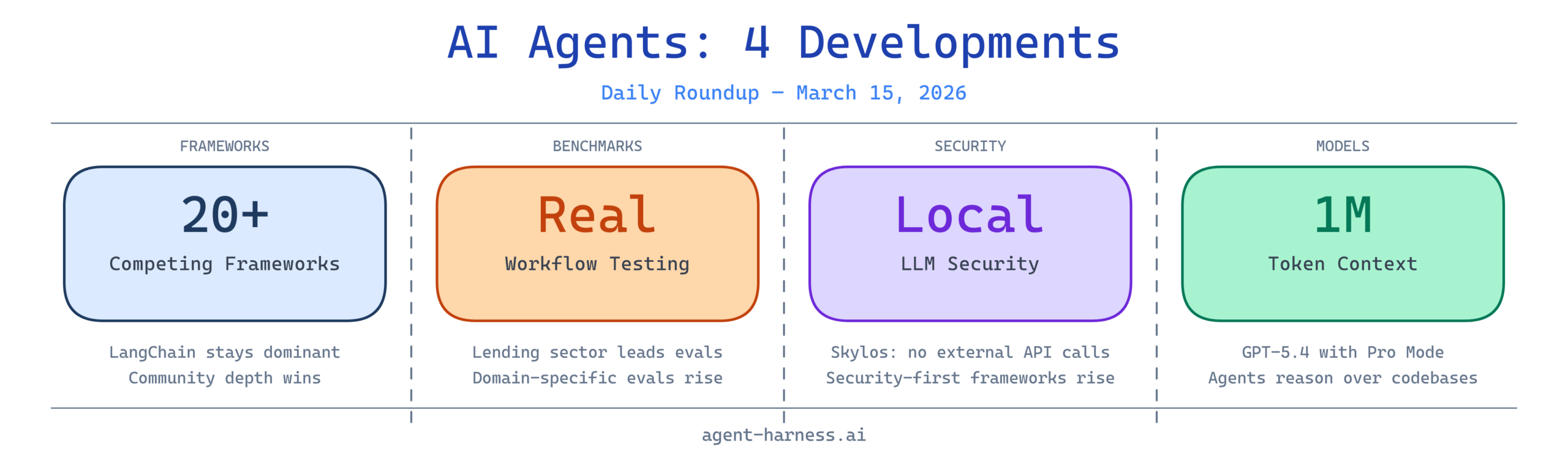

The AI agent ecosystem continues to expand rapidly, with major developments across frameworks, benchmarking methodologies, security approaches, and model capabilities. Today’s roundup covers how enterprises are evaluating agent performance, the growing field of competing frameworks, and a major expansion in model context windows that is reshaping what AI agents can accomplish.

1. LangChain Maintains Its Central Role in Agent Engineering

LangChain remains central to discussions among developers building production AI agents, and its GitHub repository is widely treated as a de facto standard for agent framework implementation. The project’s broad tooling and large community have made it the benchmark against which other frameworks are measured.

Analysis: LangChain’s prominence underscores a critical insight about the AI agent landscape—standardization around core frameworks reduces development friction and accelerates adoption. As enterprises evaluate agent infrastructure, LangChain’s maturity, ecosystem integration, and documentation provide confidence for production deployments. The framework’s dominance suggests that while competition is healthy, interoperability and established patterns matter more than novelty in agent engineering.

2. AI Agents Benchmarked Against Real Lending Workflows

A new case study examines how AI agents perform on real-world financial lending operations, moving beyond generic benchmarks to practical scenarios with direct revenue impact. The benchmark measures accuracy, processing speed, and cost efficiency across several types of lending decisions.

Analysis: As AI agents transition from experimental tools to production systems in financial services, empirical performance data becomes essential for risk management and ROI justification. This benchmarking work addresses a critical gap—many enterprises deploy agents without rigorous evaluation against their specific workflows, leading to inconsistent results. The shift toward domain-specific benchmarking suggests that one-size-fits-all agent frameworks are giving way to specialized implementations tuned for industry requirements. For lending institutions, such benchmarks provide the evidence needed to confidently allocate capital to AI agent automation projects.
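To make the three metric families (accuracy, processing speed, cost) concrete, here is a minimal Python sketch of how per-decision-type results might be aggregated. The `BenchmarkResult` schema, the `summarize` helper, and the decision-type names are hypothetical illustrations, not the case study’s actual harness or data.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class BenchmarkResult:
    """One agent run on one loan case (hypothetical schema)."""
    decision_type: str  # e.g. "personal_loan", "mortgage_refi" (illustrative names)
    correct: bool       # agent decision matched the human-reviewed outcome
    latency_s: float    # wall-clock processing time in seconds
    cost_usd: float     # model/API spend for this case


def summarize(results: list[BenchmarkResult]) -> dict[str, dict[str, float]]:
    """Group runs by decision type and report accuracy, mean latency, and cost."""
    groups: dict[str, list[BenchmarkResult]] = defaultdict(list)
    for r in results:
        groups[r.decision_type].append(r)

    summary = {}
    for decision_type, runs in groups.items():
        summary[decision_type] = {
            "n": len(runs),
            "accuracy": sum(r.correct for r in runs) / len(runs),
            "mean_latency_s": mean(r.latency_s for r in runs),
            "cost_per_decision_usd": mean(r.cost_usd for r in runs),
        }
    return summary


if __name__ == "__main__":
    # Made-up sample records, only to show the shape of the output.
    sample = [
        BenchmarkResult("personal_loan", True, 4.0, 0.02),
        BenchmarkResult("personal_loan", False, 6.0, 0.04),
        BenchmarkResult("mortgage_refi", True, 12.0, 0.10),
    ]
    for decision_type, stats in summarize(sample).items():
        print(decision_type, stats)
```

Reporting per decision type rather than one overall score matters because a strong average can hide a weak category, which is the kind of workflow-specific gap that domain benchmarking is meant to surface.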

3. Skylos Brings Security-First AI Agent Development

Skylos introduces a novel approach to AI agent security by combining static analysis with local LLM agents, addressing growing concerns about deploying AI agents in security-sensitive environments. The framework emphasizes local processing and code analysis without relying on external APIs, reducing attack surface and data exposure.

Analysis: As AI agents gain access to critical systems—databases, financial networks, and proprietary data—security considerations move from “nice-to-have” to mandatory. Skylos represents an emerging category of security-first agent frameworks that recognize the inherent risks of LLM-powered automation. The emphasis on local LLM agents and static analysis suggests a growing segment of enterprises willing to run inference locally for enhanced security. This trend reflects a maturation of the AI agent market, where early-stage deployment enthusiasm is being tempered by realistic assessments of risk and compliance requirements.

4. 2026 Agent Framework Showdown: 20+ Frameworks Compared

The number of AI agent frameworks has grown rapidly. A comprehensive comparison of LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and more than 20 other frameworks gives developers a detailed analysis of trade-offs, strengths, and best-fit use cases. It addresses a fundamental challenge for teams starting agent projects: choosing a framework.

Analysis: The rapid proliferation of agent frameworks signals both market opportunity and uncertainty about the best architectural patterns. Competition drives innovation, but fragmentation can leave enterprises stuck at the selection stage. This comparison serves as a useful reference point, helping development teams evaluate frameworks against their specific requirements, whether that means multi-agent orchestration, specialized domain adapters, or minimal overhead. The sheer number of options suggests that no single framework wins every use case, which supports the approach some organizations already take: choosing on measured performance for their own workloads rather than on market hype.

5. Understanding Deep Agents vs. Basic LLM Workflows

A detailed video explainer on what separates production-grade AI agents from simple LLM prompt chains clarifies concepts that are often misunderstood in the industry. It breaks down the architectural patterns, reliability mechanisms, and cognitive capabilities that define “deep agents.”

Analysis: The distinction between basic prompt orchestration and true agent systems is often blurred in marketing materials and industry discussions. This breakdown is valuable for architects and technical leaders evaluating whether their use case requires sophisticated agent infrastructure or whether simpler solutions will suffice. Understanding this spectrum prevents over-engineering simple automation tasks while ensuring critical applications receive appropriate architectural rigor. As AI agents mature, this clarity becomes increasingly important—not every task requires a multi-tool, reasoning-capable agent system, and misalignment between requirements and implementation drives both failed projects and unnecessary complexity.
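The difference between a prompt chain and an agent can be shown in a few lines of Python. In this sketch the model is stubbed by a plain function (an assumption that keeps the example runnable): `prompt_chain` pushes every input through the same fixed steps, while `agent_loop` lets the model choose a tool at each step. The step budget and tool-name check are simple examples of the kind of reliability mechanisms the video describes, not its specific design. All names are hypothetical; a real system would call an LLM where `scripted_decide` appears.

```python
from typing import Callable

# An action is (tool_name, argument); the tool name "final" means "answer now".
Action = tuple[str, str]


def prompt_chain(text: str, steps: list[Callable[[str], str]]) -> str:
    """Basic LLM workflow: a fixed sequence of steps with no decisions."""
    for step in steps:
        text = step(text)
    return text


def agent_loop(task: str,
               decide: Callable[[list[str]], Action],
               tools: dict[str, Callable[[str], str]],
               max_steps: int = 5) -> str:
    """Agent: at each step the model picks a tool or returns a final answer."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):        # step budget prevents runaway loops
        name, arg = decide(transcript)
        if name == "final":
            return arg
        if name not in tools:         # reject tools the model invented
            transcript.append(f"error: unknown tool {name}")
            continue
        transcript.append(f"{name}({arg}) -> {tools[name](arg)}")
    return "stopped: step budget exhausted"


def scripted_decide(transcript: list[str]) -> Action:
    """Stand-in for an LLM: look something up once, then answer from it."""
    if len(transcript) == 1:
        return ("lookup", "rate")
    return ("final", transcript[-1].split(" -> ")[-1])


if __name__ == "__main__":
    tools = {"lookup": lambda key: {"rate": "5.1%"}[key]}  # toy tool and data
    print(prompt_chain("draft", [str.upper, lambda s: s + "!"]))  # DRAFT!
    print(agent_loop("What is the rate?", scripted_decide, tools))  # 5.1%
```

The chain never branches, so it is cheap and easy to test; the agent adapts to what its tools return but needs guardrails such as the step budget. Deciding which of these a task actually requires is the over-engineering question raised in the analysis.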

6. Five Major AI Updates This Week

This week’s updates span model capabilities, framework improvements, and integration tools, each with direct implications for how AI agents are built and deployed.

Analysis: The velocity of AI development continues to accelerate, with weekly updates now including substantial improvements rather than incremental changes. For teams building on AI agent foundations, this rapid iteration creates both opportunities and challenges—new capabilities enable more sophisticated agents, but constant updates create pressure to continuously re-evaluate architectural decisions. The challenge for practitioners is balancing adoption of new capabilities with the stability required for production systems.

7. OpenAI Releases GPT-5.4 with 1 Million Token Context Window

OpenAI’s GPT-5.4 expands the context window to 1 million tokens, a watershed moment for AI agents that changes how much an agent can accomplish in a single reasoning pass. The Pro Mode enhancement adds more sophisticated reasoning capabilities that benefit complex agent workflows.

Analysis: Context window expansion directly addresses a persistent limitation of AI agents: maintaining complex state and processing large volumes of information without decomposing the task. With 1 million tokens, agents can ingest many complete codebases, comprehensive documentation sets, or long conversation histories without summarization or chunking. This enables new agent architectures in which agents reason over complete project context rather than abstracts or summaries. The Pro Mode addition suggests OpenAI is moving toward tiered capabilities designed for the complex reasoning tasks that agent systems rely on. For enterprises building production agents, this update likely reduces the engineering required to implement sophisticated multi-step workflows.
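A quick back-of-the-envelope check shows what 1 million tokens means in practice. Assuming roughly 4 characters per token (a common rule of thumb for English text; code and other languages often tokenize differently), the window holds about 4 million characters, or on the order of 100,000 lines at 40 characters per line. The helpers below are a hypothetical sketch for estimating whether a repository fits; for real budgets, count tokens with the model’s own tokenizer.

```python
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 1_000_000  # the 1M-token window described for GPT-5.4
CHARS_PER_TOKEN = 4                # assumption: rough heuristic for English text


def tokens_for_chars(n_chars: int) -> int:
    """Estimate a token count from a character count (ceiling division)."""
    return -(-n_chars // CHARS_PER_TOKEN)


def fits_in_context(n_chars: int, reserved_output_tokens: int = 50_000) -> bool:
    """True if the estimated prompt plus a reserved output budget fits the window.

    reserved_output_tokens is a hypothetical safety margin, not a published limit.
    """
    return tokens_for_chars(n_chars) + reserved_output_tokens <= CONTEXT_WINDOW_TOKENS


def repo_chars(root: str, suffixes: tuple[str, ...] = (".py", ".md")) -> int:
    """Total characters across files under root whose suffix is in suffixes."""
    return sum(
        len(p.read_text(encoding="utf-8", errors="ignore"))
        for p in Path(root).rglob("*")
        if p.is_file() and p.suffix in suffixes
    )
```

For example, `fits_in_context(repo_chars("path/to/repo"))` gives a first-pass answer. Reserving output tokens matters because, for many models, the response shares the context budget with the prompt; whether that applies to GPT-5.4, and what its output limit is, should be checked against OpenAI’s documentation.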

8. OpenAI’s Weekly AI Update Summary

Additional coverage of this week’s AI announcements provides alternative perspectives on how recent developments—particularly GPT-5.4’s capabilities—will reshape the agent landscape and influence framework evolution. The expanded analysis examines ripple effects across the AI ecosystem.

Analysis: The fact that multiple outlets converged on GPT-5.4 suggests it is an inflection point for the industry. The focus on expanded context windows and reasoning improvements indicates that the main bottleneck for agent systems is shifting from token processing speed to depth of capability and context handling. Framework developers are likely to incorporate these capabilities quickly, prompting another wave of architectural updates. Teams that built agent systems on earlier model generations may find that some of their architectural constraints no longer apply, opening room for simplification and better performance.

Looking Forward

The March 15 news cycle reveals several critical trends shaping the AI agent industry:

Framework consolidation is happening gradually. While new frameworks continue to emerge, established players like LangChain maintain dominance through ecosystem depth and community maturity. Organizations should evaluate frameworks not just on technical merit but on long-term sustainability and community support.

Production benchmarking is becoming standard practice. The move toward domain-specific performance evaluation—exemplified by lending workflow benchmarks—reflects industry maturation. Enterprises no longer accept generic benchmarks; they demand evidence of performance in their specific contexts.

Security considerations are rising in priority. The emergence of security-focused frameworks like Skylos indicates that early-stage deployment enthusiasm is maturing into serious risk assessment. Organizations handling sensitive data are actively seeking frameworks that address security as a first-class concern.

Model capabilities are accelerating faster than frameworks can adapt. With GPT-5.4’s substantial improvements in context handling and reasoning, framework architectures built around earlier generation limitations may require reassessment. Teams should plan for rapid evolution of underlying model capabilities.

For development teams and technology leaders, today’s news reinforces several priorities: invest in frameworks with sustained community support, benchmark performance in production-like conditions, explicitly address security requirements, and prepare for rapid iteration as underlying model capabilities continue advancing. The AI agent field is moving from experimentation toward production operation, and the winners will be organizations that combine technical sophistication with pragmatic risk management.

What developments are you most excited about in this week’s AI agent news? Share your thoughts and follow Agent Harness for daily coverage of the evolving AI agent ecosystem.