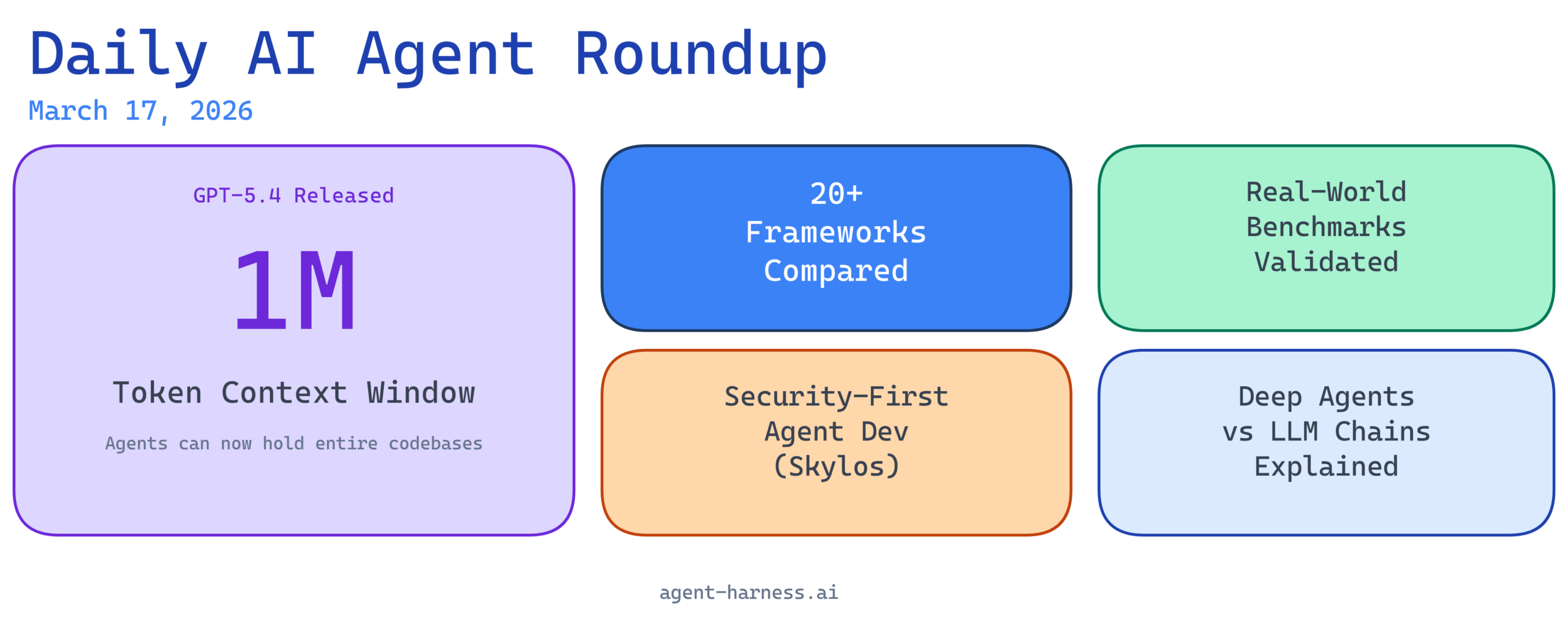

The AI agent landscape continues to accelerate with significant developments this week spanning framework maturity, real-world performance validation, security innovation, and breakthrough model capabilities. Whether you’re building production agents, evaluating frameworks, or staying ahead of emerging trends, today’s roundup covers the key developments reshaping how organizations deploy and secure AI agents at scale.

1. LangChain Remains Central to Agent Engineering Landscape

Source: GitHub – langchain-ai/langchain

LangChain’s continued prominence in the AI agent engineering ecosystem reflects its evolution from a simple orchestration library into a comprehensive platform for building production-grade agents. The framework’s maturity, extensive ecosystem, and community contributions make it a reference point for developers evaluating how to architect agent workflows. With constant updates to support new models and agent patterns, LangChain demonstrates why it remains essential for anyone building beyond simple prompt-response applications.

2. Real-World Lending Agents Show Strong Performance in Benchmarking Study

Source: Reddit – Benchmarked AI agents on real lending workflows

A case study benchmarking AI agents against real lending workflows suggests that these systems are moving beyond experimentation toward validated, production-ready use. The study reports the kind of performance metrics that financial institutions need before deploying agents in high-stakes environments. That matters because regulated industries want concrete evidence that AI agents can handle complex decision-making tasks consistently and reliably.

3. Skylos Brings Security-First Approach to AI Agent Development

Source: GitHub – duriantaco/skylos

As AI agents become increasingly powerful and autonomous, security concerns grow proportionally—and Skylos addresses this head-on with a novel approach combining static analysis and local LLM agents. This tool tackles one of the industry’s critical blind spots: how to develop agents that are both capable and secure without external dependencies. By enabling local LLM analysis without cloud infrastructure, Skylos opens pathways for organizations that need to maintain strict data governance while leveraging advanced agent capabilities.
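To make the "static analysis plus local model" pairing concrete, here is a minimal sketch of the general pattern. It is not Skylos's implementation or API: the `RISKY_CALLS` list, the `Finding` type, and `stub_local_reviewer` (a stand-in for a call to a locally hosted model) are all hypothetical names chosen for illustration. Only Python's standard `ast` module is used.

```python
import ast
from typing import Callable, Dict, List, NamedTuple


class Finding(NamedTuple):
    line: int
    call: str


# Illustrative list only; a real tool would track far more patterns.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> List[Finding]:
    """Static pass: flag direct calls to names listed in RISKY_CALLS."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append(Finding(node.lineno, node.func.id))
    return sorted(findings)  # order by line number


def triage(findings: List[Finding],
           review: Callable[[Finding], str]) -> Dict[Finding, str]:
    """Second pass: hand each finding to a reviewer and collect its verdict."""
    return {finding: review(finding) for finding in findings}


def stub_local_reviewer(finding: Finding) -> str:
    # Assumption: stands in for a locally hosted model, so no code
    # leaves the machine. A real reviewer would see surrounding context.
    return "high" if finding.call == "exec" else "medium"


SAMPLE = "x = eval(user_input)\nprint(x)\nexec(payload)\n"
findings = find_risky_calls(SAMPLE)
report = triage(findings, stub_local_reviewer)
```

The design point is the split: a cheap deterministic pass narrows the search, and the model only judges what the parser already flagged.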

4. Comprehensive Framework Comparison: 20+ AI Agent Platforms Evaluated for 2026

Source: Reddit – Comprehensive comparison of every AI agent framework in 2026

The proliferation of AI agent frameworks (LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and others, more than 20 in total) creates both opportunity and decision fatigue for engineering teams. A comparative analysis that breaks down tradeoffs in architecture, scalability, model flexibility, and deployment patterns gives teams essential context for the choice. Because each framework optimizes for different use cases, the comparison helps organizations match framework selection to their specific requirements rather than defaulting to whatever gained mindshare first.

5. Understanding Deep Agents vs. Basic LLM Workflows

Source: YouTube – The Rise of the Deep Agent: What’s Inside Your Coding Agent

A critical distinction is emerging between simple LLM chains and true autonomous agents. This technical deep-dive explores what separates reliable, production-grade agents from workflows that appear intelligent but lack the reasoning depth for complex tasks. Coding agents make a useful case study: they show where basic LLM interactions fail and why agent architectures with planning, reflection, and error recovery become essential. For teams building AI coding tools or considering agent-based automation, the difference between shallow and deep agent approaches has direct implications for reliability and user trust.
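As a rough illustration of what planning and error recovery add over a single prompt-response call, the sketch below runs a fixed plan step by step and retries a step when its tool call fails. Everything here is hypothetical: `run_agent`, `flaky_tool`, and the step names are invented for the example, the "plan" is a hard-coded list rather than model output, and reflection is reduced to recording each failure in a log.

```python
from typing import Callable, List


def run_agent(plan: List[str],
              act: Callable[[str, int], str],
              max_retries: int = 2) -> List[str]:
    """Execute each planned step, retrying on failure and logging outcomes."""
    log: List[str] = []
    for step in plan:
        for attempt in range(max_retries + 1):
            try:
                result = act(step, attempt)
            except RuntimeError as err:
                # Error recovery: note the failure, then try the step again.
                log.append(f"{step}: attempt {attempt} failed ({err})")
                continue
            log.append(f"{step}: ok ({result})")
            break
        else:
            # Every attempt failed, so stop instead of building on a bad state.
            log.append(f"{step}: gave up")
            break
    return log


def flaky_tool(step: str, attempt: int) -> str:
    # Hypothetical tool: the test run times out on its first attempt only.
    if step == "run tests" and attempt == 0:
        raise RuntimeError("timeout")
    return "done"


log = run_agent(["edit file", "run tests"], flaky_tool)
for entry in log:
    print(entry)
```

A single LLM call has no equivalent of the retry branch: if the first output is wrong, nothing notices. The loop is what lets the failed attempt become input to the next one.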

6. OpenAI’s GPT-5.4 Launch: 1 Million Token Context Window Redefines Agent Possibilities

Source: YouTube – OpenAI Drops GPT 5.4 – 1 Million Tokens + Pro Mode

OpenAI’s release of GPT-5.4 with an expanded 1 million token context window changes what agent architectures can attempt. At that size, an agent can keep multi-hour conversation histories, entire codebases, or long multi-step workflows in context without lossy compression, provided the material fits within the window. Pro Mode, meanwhile, reads as OpenAI’s clearest signal yet that it is building infrastructure explicitly designed for enterprise-grade agent deployment rather than consumer chat applications.
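To see why window size matters for agent state, consider a minimal sketch of the truncation many agents apply when history outgrows the context limit. The names `count_tokens` and `fit_to_budget` are hypothetical, and counting one token per whitespace-separated word is a crude assumption; real tokenizers count differently.

```python
from typing import List


def count_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def fit_to_budget(messages: List[str], budget: int) -> List[str]:
    """Keep the most recent messages that fit, dropping the oldest first."""
    kept: List[str] = []
    used = 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > budget:
            break  # this message and everything older is lost
        kept.append(message)
        used += cost
    return list(reversed(kept))


history = [
    "open the repo",
    "list failing tests in module a",
    "patch module a",
    "rerun tests",
]

small = fit_to_budget(history, budget=5)          # tight window
large = fit_to_budget(history, budget=1_000_000)  # large window
```

With the tight budget, only the two most recent messages survive and the agent forgets which tests were failing. With the large budget, the full history is kept and no truncation step runs at all.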

7. Weekly AI Updates: What Changed in the Agent Development Landscape This Week

Source: YouTube – 5 Crazy AI Updates This Week

This week’s broader AI developments extend beyond any single framework or model to encompass systemic changes across the entire agent development ecosystem. The combination of GPT-5.4’s context expansion, security-focused tools like Skylos, and real-world benchmarking data suggests the industry is moving past the “AI agents as experiment” phase into “AI agents as essential infrastructure.” When viewed together, these updates paint a picture of an ecosystem rapidly maturing from proof-of-concept to production reliability.

Key Takeaways

The AI agent landscape is consolidating around proven patterns while simultaneously diversifying in specialization. LangChain’s continued centrality doesn’t prevent the emergence of specialized tools and frameworks that excel in specific domains, whether security-first analysis (Skylos), multi-agent coordination (CrewAI), or graph-based orchestration (LangGraph).

Real-world validation is replacing pure hype. The lending workflows benchmarking study exemplifies a broader trend: organizations are moving from “can we use AI agents?” to “how do we deploy AI agents responsibly?” This shift toward performance metrics and comparative analysis benefits the entire ecosystem by establishing baseline expectations for reliability.

Model capabilities now enable architectures that were theoretical months ago. A 1 million token context window fundamentally changes how agents manage state, maintain memory, and handle complex reasoning tasks. Teams architecting new systems today should factor this context expansion into their long-term design decisions.

Security is becoming table stakes, not an afterthought. The emergence of tools like Skylos reflects growing organizational awareness that autonomous agents operating with real capabilities need security designed in from the ground up.

For teams building, evaluating, or deploying AI agents, this week underscores why staying current matters: the foundational decisions you make today about frameworks, architectures, and security approaches directly impact your ability to leverage advances in model capability, benchmarking knowledge, and tooling maturity.

What developments are you tracking in the AI agent space? Follow agent-harness.ai for daily coverage of the frameworks, tools, and breakthroughs shaping autonomous AI systems.