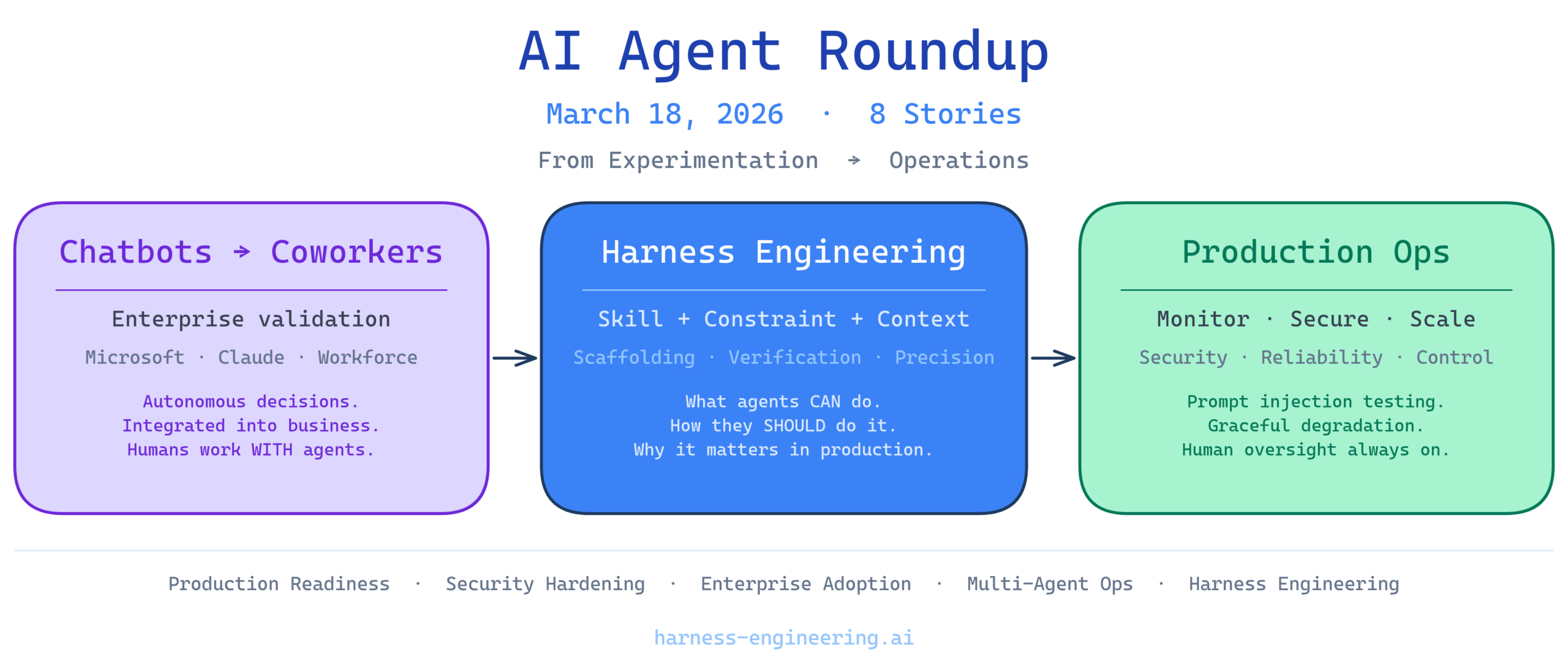

The AI agent landscape continues to mature at a rapid pace. From production deployment lessons to security hardening strategies, today’s coverage reflects the growing sophistication of both AI agent engineering and the organizational practices needed to deploy them responsibly. Major technology announcements underscore the industry’s pivot from experimental chatbots to mission-critical workforce automation, while tactical discussions reveal the practical challenges engineers face when scaling agent systems. Here’s what you need to know today.

1. Lessons From Building and Deploying AI Agents to Production

Drawing from real-world experiences, this content highlights key lessons and best practices for successfully deploying AI agents in production environments. As organizations move beyond proof-of-concepts, understanding what actually works at scale becomes essential for teams building agent-powered systems.

Why it matters: Production deployment is where many AI agent projects stumble. The gap between a working prototype and a reliable production system requires deep understanding of monitoring, error handling, and graceful degradation. Teams that learn from others’ production experiences can avoid costly mistakes and implement proven patterns from day one. This focus on practical production lessons represents a maturation of the field—moving from theoretical capabilities toward operational resilience.

2. Test Your AI Agents Like a Hacker – Automated Prompt Injection Attacks

As AI agents become more prevalent, understanding vulnerabilities like prompt injection attacks is crucial for maintaining security and trust. Automated testing frameworks that simulate adversarial attacks help teams identify and patch security weaknesses before they reach production.

Why it matters: Prompt injection represents one of the most critical security vectors for AI agents. Unlike traditional software vulnerabilities, these attacks exploit the model’s natural language understanding itself, making them particularly insidious. Organizations deploying agents to handle sensitive tasks—financial operations, personnel decisions, or data access—must implement rigorous security testing. This conversation reflects the industry’s growing recognition that robust security isn’t optional; it’s foundational to responsible AI deployment.

3. AI Agents Just Went From Chatbots to Coworkers

Recent announcements from major tech companies highlight the transition of AI agents from simple chatbots to integral parts of the workforce. This shift signals a fundamental change in AI deployment strategies, moving from customer-facing interfaces to internal operations and deep integration with existing business systems.

Why it matters: The coworker designation carries significant implications. It suggests AI agents are now trusted with autonomous decision-making, access to sensitive systems, and responsibility for business-critical outcomes. This isn’t a marginal capability improvement—it’s a categorical shift in how organizations view AI agent utility and risk. The industry is moving toward scenarios where humans work with AI agents rather than asking AI agents for help, fundamentally changing requirements for reliability, auditability, and control.

4. How I Eliminated Context-Switch Fatigue When Working With Multiple AI Agents in Parallel

As AI agents become more prevalent, managing multiple instances efficiently is crucial. This topic addresses the challenges and solutions for parallel AI agent operations, including orchestration strategies and context preservation across concurrent executions.

Why it matters: Context-switch fatigue represents a real operational burden for teams managing multiple agents. Developers and operators who’ve found effective solutions for state management, attention prioritization, and seamless handoffs between agents are unlocking significant productivity gains. The practical strategies shared in this conversation directly address a pain point that grows more acute as organizations deploy 5, 10, or 50 agents simultaneously. Smart orchestration and context engineering directly impact whether parallel agents become a multiplier for human productivity or a source of coordination overhead.

5. Microsoft Just Launched an AI That Does Your Office Work for You — and It’s Built on Anthropic’s Claude

Microsoft’s launch of Copilot Cowork highlights the growing trend of integrating AI agents into everyday office tasks. This represents a significant validation of Claude’s capabilities in enterprise environments and demonstrates the importance of harness engineering in streamlining workflows.

Why it matters: When enterprises like Microsoft integrate Claude-powered agents into their productivity suite, it signals that AI agents have graduated from experimental tools to production-ready infrastructure. The choice to build on Anthropic’s Claude reflects confidence in the model’s ability to handle the nuance, accuracy, and safety requirements of office automation. For organizations considering AI agent adoption, this milestone matters: it demonstrates that enterprises with the most at stake are placing production bets on agent architectures. It also underscores how critical harness engineering becomes when agents handle real business processes—from email composition to meeting scheduling to data analysis.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering

This content explores the integration of harness and context engineering in AI coding agents designed for terminal environments. It addresses how developers can build agents that understand complex system contexts and execute sophisticated programming tasks autonomously.

Why it matters: Coding agents represent a particularly demanding use case for harness engineering. Unlike general-purpose agents, coding agents must maintain deep context about codebases, toolchains, and execution environments while making decisions that affect production systems. The emphasis on scaffolding—providing agents with structured frameworks for thinking and execution—and context engineering reveals how sophistication in agent design directly enables more capable autonomous coding. As developers increasingly delegate debugging, refactoring, and feature implementation to agents, the engineering that constrains and guides those agents becomes a competitive advantage.

7. Harness Engineering: Supervising AI Through Precision and Verification

With increasing complexity in AI systems, precision and verification are becoming essential in harness engineering to ensure reliable AI outputs. This topic delves into the methodologies for supervising AI effectively, including verification frameworks, quality gates, and continuous monitoring.

Why it matters: Supervision and verification represent the critical distinction between experimental AI and trustworthy AI. As agents take on higher-stakes responsibilities, the ability to verify their outputs, constrain their behavior through precise instructions, and maintain human oversight becomes non-negotiable. The focus on precision—ensuring agents understand exactly what’s expected—reflects industry recognition that vague instructions and broad autonomy create risk. Organizations deploying agents to production must invest in harness engineering that enables humans to understand, predict, and override agent behavior when necessary.

8. AI Agents: Skill & Harness Engineering Secrets REVEALED!

Understanding the interplay between skill and harness engineering is crucial for advancing AI agents and unlocking their full potential. This content explores how skills—specific capabilities agents possess—and harness—the frameworks that guide and constrain their use—work together to enable more capable, reliable autonomous systems.

Why it matters: The distinction between skill engineering and harness engineering often gets blurred, but they’re complementary disciplines. Skills represent what agents can do; harness represents how agents should do it. The recognition that both matter equally reflects maturity in the field. Teams that excel at building AI agents distinguish themselves not just through raw capability but through the careful architecture that makes agents reliable, predictable, and safe. This interplay is where the real competitive advantage lies.

The Takeaway: From Experimentation to Operations

March 2026 marks an inflection point in AI agent maturity. The industry is past the “can we build agents?” phase and firmly in the “how do we deploy agents responsibly at scale?” phase.

Today’s news reflects this transition across multiple dimensions:

- Production readiness is now table stakes, not a nice-to-have

- Security hardening must begin early and continue throughout the agent lifecycle

- Enterprise adoption is accelerating, validating agent architectures in real business contexts

- Operational practices for multi-agent systems are emerging from practitioner experience

- Harness engineering has moved from a specialized concern to a core engineering discipline

For teams building or deploying AI agents, the lesson is clear: success depends not just on model capability but on the careful orchestration, verification, and governance that harness engineering provides. The agents that thrive in production are those built with as much attention to operational resilience and human oversight as they are to raw functionality.

The future of AI isn’t about smarter models—it’s about smarter, more careful deployment.

Daily AI Agent News Roundup publishes weekdays at harness-engineering.ai