The AI agent landscape continues to evolve at a breakneck pace. This week brings significant developments across multiple fronts: frameworks are maturing, real-world applications are being benchmarked, security concerns are being addressed, and model capabilities are expanding. Whether you’re building agents in production or evaluating frameworks, this roundup covers the most important stories shaping the industry right now.

1. LangChain Reinforces Its Position as the Industry Standard

LangChain’s GitHub repository remains a central hub for agent engineering, with steady development and a large base of community contributions. LangChain is one of the most widely adopted frameworks for building language model applications. It supports multiple LLM providers, retrieval mechanisms, and tool integration patterns, and that flexibility has made it the de facto standard for many developers building production agents.

The steady stream of updates and community engagement suggests that LangChain will remain core infrastructure for the agent ecosystem. Teams may be just getting started with agents or refining sophisticated multi-step workflows. In either case, LangChain provides shared abstractions for models, tools, and retrieval, so components can be swapped without rewriting the surrounding agent logic.
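The provider flexibility described above comes down to writing agent code against a common model interface rather than a specific vendor client. The minimal sketch below illustrates that pattern in plain Python. It does not use LangChain’s actual classes. `ChatModel`, `EchoModel`, the `TOOL:` reply convention, and `get_weather` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Protocol


class ChatModel(Protocol):
    """Any provider adapter only needs to expose a complete() method."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for a real provider client; makes no network calls."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        # A real adapter would call its provider's API here.
        if "weather" in prompt.lower():
            return "TOOL:get_weather:Paris"
        return f"[{self.name}] {prompt}"


@dataclass
class Agent:
    model: ChatModel
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def run(self, prompt: str) -> str:
        reply = self.model.complete(prompt)
        # Assumed convention for this sketch: "TOOL:<name>:<arg>" requests a tool call.
        if reply.startswith("TOOL:"):
            _, name, arg = reply.split(":", 2)
            return self.tools[name](arg)
        return reply


def get_weather(city: str) -> str:
    """Hypothetical tool with a canned answer."""
    return f"Sunny in {city}"


# Swapping providers changes one constructor argument, not the agent logic.
for provider in (EchoModel("provider-a"), EchoModel("provider-b")):
    agent = Agent(model=provider, tools={"get_weather": get_weather})
    print(agent.run("Hello"))                # "[provider-a] Hello" first, "[provider-b] Hello" second
    print(agent.run("What's the weather?"))  # "Sunny in Paris" on both passes
```

The agent never imports a provider directly. Any object with a matching `complete` method can be plugged in, and that is the property the paragraph above attributes to a framework-level abstraction.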

2. Real-World Benchmarking: AI Agents in Lending Workflows

A recent Reddit discussion of AI agents benchmarked on real lending workflows offers empirical data on agent performance in a high-stakes financial setting. Agents are increasingly deployed in financial services, where accuracy and compliance are non-negotiable. Benchmarks on real workflows are therefore essential for understanding what agents can and cannot do reliably.

The case study shows both the promise and the pitfalls of deploying agents in regulated industries. According to the results, agents can handle complex, multi-step processes such as loan evaluation and risk assessment, but only when they are properly trained on domain-specific data and given appropriate guardrails. For financial institutions evaluating agent adoption, the benchmark offers concrete data on expected performance, failure modes, and implementation considerations.
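One way to make “appropriate guardrails” concrete is to add a validation layer between the agent’s decision and any action taken on it. The sketch below is hypothetical throughout. The `LoanDecision` fields, the limits, and the routing labels are illustrative placeholders, not values taken from the benchmark or from any institution’s credit policy.

```python
from dataclasses import dataclass
from typing import List

# Illustrative placeholder limits; real thresholds come from an institution's credit policy.
MAX_AMOUNT = 500_000.0
MAX_DEBT_TO_INCOME = 0.43


@dataclass
class LoanDecision:
    """Hypothetical structured output an agent returns for one application."""
    applicant_id: str
    approve: bool
    amount: float
    debt_to_income: float
    rationale: str


def check_guardrails(decision: LoanDecision) -> List[str]:
    """Return rule violations; an empty list means the decision may proceed."""
    violations: List[str] = []
    if decision.approve and not 0 < decision.amount <= MAX_AMOUNT:
        violations.append("approved amount outside allowed range")
    if decision.approve and decision.debt_to_income > MAX_DEBT_TO_INCOME:
        violations.append("approval exceeds debt-to-income limit")
    if not decision.rationale.strip():
        violations.append("missing rationale for the audit trail")
    return violations


def route(decision: LoanDecision) -> str:
    """Apply clean decisions automatically; send any rule violation to a human reviewer."""
    return "human_review" if check_guardrails(decision) else "auto_apply"


print(route(LoanDecision("A-1", True, 20_000.0, 0.31, "Stable income, low utilization")))  # auto_apply
print(route(LoanDecision("A-2", True, 20_000.0, 0.51, "Strong credit history")))  # human_review
```

The design choice here is that the agent proposes and deterministic rules dispose. Any decision that breaks a rule falls back to a human, which keeps failure modes visible and auditable.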

3. Skylos: Secure AI Agent Development with Static Analysis

Skylos on GitHub presents a novel approach to AI agent security by combining static analysis with local LLM agents. Concerns over AI security are growing, including prompt injection, data leakage, and uncontrolled tool execution, and Skylos addresses a critical gap in the agent development toolkit. Rather than relying solely on runtime safeguards, it performs static analysis to identify potential vulnerabilities before agents run in production.

This approach is particularly relevant as agents gain access to more powerful tools and external systems. Skylos catches security issues early and runs its LLM-based analysis locally, avoiding third-party API dependencies. That combination lets teams apply security best practices without adding significant development overhead. For security-conscious teams building production agents, it is a valuable addition to the agent engineering stack.
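To make “static analysis before agents run” concrete, the sketch below uses Python’s standard `ast` module to flag risky calls in agent tool code without executing it. This is not how Skylos is implemented. It also covers only the static-analysis half of the idea and uses no local LLM. The rule sets `ALWAYS_RISKY` and `SHELL_SENSITIVE` are small assumptions for illustration.

```python
import ast
from typing import List, Tuple

# Small illustrative rule set; a real analyzer would track imports, aliases, and data flow.
ALWAYS_RISKY = {"eval", "exec", "os.system"}
SHELL_SENSITIVE = {"subprocess.run", "subprocess.call", "subprocess.Popen"}


def dotted_name(node: ast.AST) -> str:
    """Turn a call target such as os.system into the string 'os.system'."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        return f"{dotted_name(node.value)}.{node.attr}"
    return ""


def uses_shell_true(call: ast.Call) -> bool:
    """True if the call passes shell=True as a literal keyword argument."""
    return any(
        kw.arg == "shell" and isinstance(kw.value, ast.Constant) and kw.value.value is True
        for kw in call.keywords
    )


def scan(source: str) -> List[Tuple[int, str]]:
    """Return (line number, call name) for each risky call, without running the code."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = dotted_name(node.func)
        if name in ALWAYS_RISKY or (name in SHELL_SENSITIVE and uses_shell_true(node)):
            findings.append((node.lineno, name))
    return sorted(findings)


TOOL_SOURCE = '''
import os, subprocess

def run_shell(cmd):
    return subprocess.run(cmd, shell=True)

def calculate(expr):
    return eval(expr)

def list_files():
    return subprocess.run(["ls"])
'''

print(scan(TOOL_SOURCE))  # [(5, 'subprocess.run'), (8, 'eval')]
```

The `list_files` tool is not flagged because it passes an argument list without `shell=True`. The analysis parses the source instead of running it, so it can sit in CI and reject a risky tool before any agent gets to call it.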

4. The 2026 AI Agent Framework Landscape: A Comprehensive Breakdown

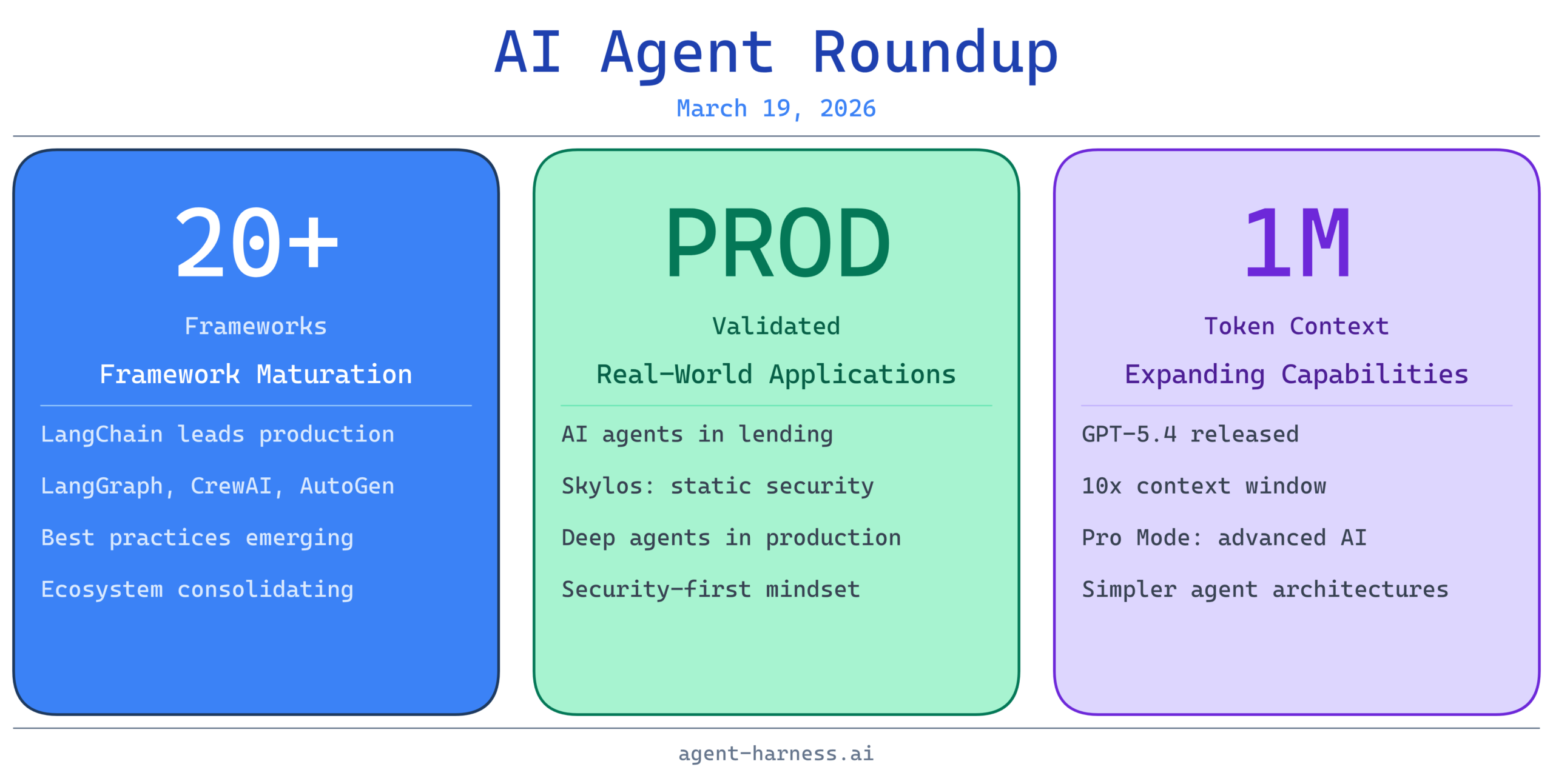

A comprehensive Reddit comparison of AI agent frameworks in 2026 maps the rapidly expanding ecosystem of agent-building platforms. It covers LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and more than 20 additional frameworks, a reflection of the explosive growth in agent tooling. With the ecosystem changing this quickly, understanding the tradeoffs between popular options is invaluable for teams making architectural decisions.

The key insight from this analysis is that there is no single “best” framework; different frameworks excel in different contexts. LangChain remains the flexibility champion for custom workflows. LangGraph specializes in stateful, graph-based agent architectures. CrewAI emphasizes role-based multi-agent collaboration. AutoGen centers on multi-agent conversations. Teams need to evaluate frameworks against their specific requirements: Do you need maximum flexibility or opinionated structure? Single agents or multi-agent systems? Complex reasoning or straightforward task execution? This diversity is healthy for the ecosystem, but it makes careful evaluation during architecture selection all the more important.

5. Understanding Deep Agents: Beyond Basic LLM Workflows

“The Rise of the Deep Agent: What’s Inside Your Coding Agent” explores the distinction between simple LLM workflows and sophisticated, production-grade AI agents. As AI coding tools rapidly evolve, many developers conflate “using an LLM to write code” with “having a true AI coding agent.” Deep agents are a significant step forward: they combine planning, tool use, error handling, and iterative refinement to complete complex tasks reliably.

Understanding this distinction matters because it shapes expectations. A single LLM prompt can generate code in one pass, but that code is more likely to fail on novel problems, miss edge cases, and require human review. Deep agents, by contrast, can reason about failures, adjust their approach, validate their output, and iterate toward correct solutions. For teams adopting AI-powered coding tools, this perspective clarifies where automation can provide real value and where human oversight remains essential.
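The core loop that separates a deep agent from a single prompt is to generate, validate, feed the failure back, and try again. The minimal sketch below uses a stand-in `fake_model` in place of a real LLM and a one-line unit test as the validator. `refine` is a hypothetical helper, not the design of any particular coding agent.

```python
from typing import Callable, List, Optional, Tuple

# A "model" here is any function mapping (task, feedback so far) -> candidate code.
CodeGenerator = Callable[[str, List[str]], str]
# A validator returns (passed, error message).
Validator = Callable[[str], Tuple[bool, str]]


def refine(task: str, generate: CodeGenerator, validate: Validator,
           max_attempts: int = 3) -> Optional[str]:
    """Generate a candidate, check it, and feed failures back until it passes or attempts run out."""
    feedback: List[str] = []
    for _ in range(max_attempts):
        candidate = generate(task, feedback)
        ok, error = validate(candidate)
        if ok:
            return candidate
        feedback.append(error)  # the next attempt sees why the last one failed
    return None  # give up and escalate to a human


def fake_model(task: str, feedback: List[str]) -> str:
    """Hypothetical stand-in for an LLM: the first attempt has a bug, later attempts fix it."""
    if not feedback:
        return "def add(a, b):\n    return a - b"
    return "def add(a, b):\n    return a + b"


def run_tests(code: str) -> Tuple[bool, str]:
    """Execute the candidate in a scratch namespace and run one test.

    exec() on model output is unsafe outside a sandbox; it is used here only for brevity.
    """
    namespace: dict = {}
    try:
        exec(code, namespace)
        result = namespace["add"](2, 3)
    except Exception as exc:
        return False, f"error: {exc}"
    if result != 5:
        return False, f"add(2, 3) returned {result}, expected 5"
    return True, ""


print(refine("write add(a, b)", fake_model, run_tests))
```

The first candidate fails the test, the failure message is passed back, and the second candidate passes. A single-pass workflow would have returned the buggy version. The `max_attempts` cap and the `None` return keep the loop from running forever and mark where human oversight takes over.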

6. The Week in AI Model Releases: GPT-5.4 and Expanding Context

“5 Crazy AI Updates This Week” covers OpenAI’s major releases and other significant model announcements shaping the agent landscape. The standout development is OpenAI’s release of GPT-5.4 with a 1-million-token context window, along with a new Pro Mode tier. A larger context window changes what agents can accomplish in a single call: longer prompts, larger documents, and reasoning over more material at once.

For agent developers, this is a significant shift. Agents that previously needed to break tasks into smaller chunks can now process many complete documents, codebases, and knowledge bases in a single pass, as long as the input fits within the window. The Pro Mode tier adds enhanced capabilities and further intensifies the race toward more sophisticated agents. These updates will likely influence how agent frameworks approach prompting and context management, so developers need to understand how to take advantage of the new limits.
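The shift from chunking to single-pass processing can be expressed as a simple budget check. The sketch below makes three assumptions: the reported 1,000,000-token window, a hypothetical 50,000-token reserve for instructions and output, and the rough rule of thumb of about four characters per token for English text. Production code should count tokens with the model’s own tokenizer.

```python
from typing import List

CONTEXT_WINDOW = 1_000_000   # tokens, per the reported GPT-5.4 limit
RESERVED_TOKENS = 50_000     # hypothetical headroom for instructions and the reply
CHARS_PER_TOKEN = 4          # rough heuristic for English text, not a real tokenizer


def estimate_tokens(text: str) -> int:
    return len(text) // CHARS_PER_TOKEN + 1


def plan_inputs(document: str, window: int = CONTEXT_WINDOW) -> List[str]:
    """Return the whole document if it fits the window, otherwise fixed-size chunks."""
    budget = window - RESERVED_TOKENS
    if estimate_tokens(document) <= budget:
        return [document]  # single pass: no chunking or summarization step
    chunk_chars = budget * CHARS_PER_TOKEN
    return [document[i:i + chunk_chars] for i in range(0, len(document), chunk_chars)]


report = "x" * 2_000_000  # about 500,000 estimated tokens
print(len(plan_inputs(report)))                  # 1 with a 1M-token window
print(len(plan_inputs(report, window=128_000)))  # 7 chunks with a 128K-token window
```

The same input that needs seven chunks, and therefore some merging or summarization step afterward, under a 128K-token window goes through in one call under a 1M-token window.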

7. OpenAI’s GPT-5.4 Announcement: 1 Million Tokens and Pro Mode

Coverage in “OpenAI Drops GPT-5.4 – 1 Million Tokens + Pro Mode!” and “5 Crazy AI Updates This Week!” details OpenAI’s latest release. The 1-million-token context window is a substantial increase over the limits of most earlier GPT models and reshapes what is possible with agentic workflows. Pro Mode offers additional inference enhancements, suggesting that OpenAI is betting heavily on advanced reasoning and multi-step task completion.

The practical implications are significant. Agents can maintain longer conversation histories before pruning. They can also process many codebases or documentation sets without summarization and handle longer reasoning chains before token limits become a constraint. This is especially valuable for research agents, code analysis agents, and document processing workflows. Teams that currently manage token budgets carefully may be able to simplify their agent architectures by removing complex chunking and summarization logic.
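The conversation-pruning logic that a larger window may let teams retire typically keeps the system message plus as many recent turns as fit a token budget. The sketch below assumes the first message is the system prompt and uses the rough four-characters-per-token estimate. `prune_history` is a hypothetical helper, not part of any framework’s API.

```python
from typing import Dict, List

Message = Dict[str, str]  # {"role": ..., "content": ...}


def estimate_tokens(text: str) -> int:
    return len(text) // 4 + 1  # rough heuristic, not a real tokenizer


def prune_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep the system message plus the newest turns that fit within the token budget."""
    system, turns = messages[0], messages[1:]
    used = estimate_tokens(system["content"])
    kept: List[Message] = []
    for message in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break  # everything older than this turn is dropped
        kept.append(message)
        used += cost
    return [system] + kept[::-1]  # restore chronological order


history = [{"role": "system", "content": "s" * 40}] + [
    {"role": "user", "content": f"{i:02d}" + "t" * 38} for i in range(10)
]
print(len(prune_history(history, budget=50)))         # 4: system + 3 newest turns
print(len(prune_history(history, budget=1_000_000)))  # 11: nothing pruned
```

With a budget in the hundreds of thousands of tokens, most conversations pass through this function unchanged. That is the sense in which a larger window lets teams simplify or drop this step.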

The Bottom Line

This week’s developments underscore three major trends shaping AI agents in 2026:

Maturation: Frameworks are stabilizing, best practices are emerging, and the ecosystem is consolidating around a core set of tools while remaining diverse enough for specialized use cases.

Real-World Validation: Agents are moving beyond demos into production, with measurable performance data in domains like lending. This grounding in reality is essential for the industry’s credibility.

Expanding Capabilities: Model improvements, especially expanded context windows, are removing earlier constraints and allowing simpler, more elegant architectures for tasks that previously required complex workarounds.

For builders, the message is clear: the infrastructure for production agents exists. The remaining challenges are architectural (designing effective workflows), operational (integrating with existing systems), and governance-related (ensuring safety and compliance). Teams evaluating or building agents should focus on understanding available frameworks, learning from real-world benchmarks, and planning for security from day one.

The agent revolution isn’t coming—it’s here. The question now is execution.