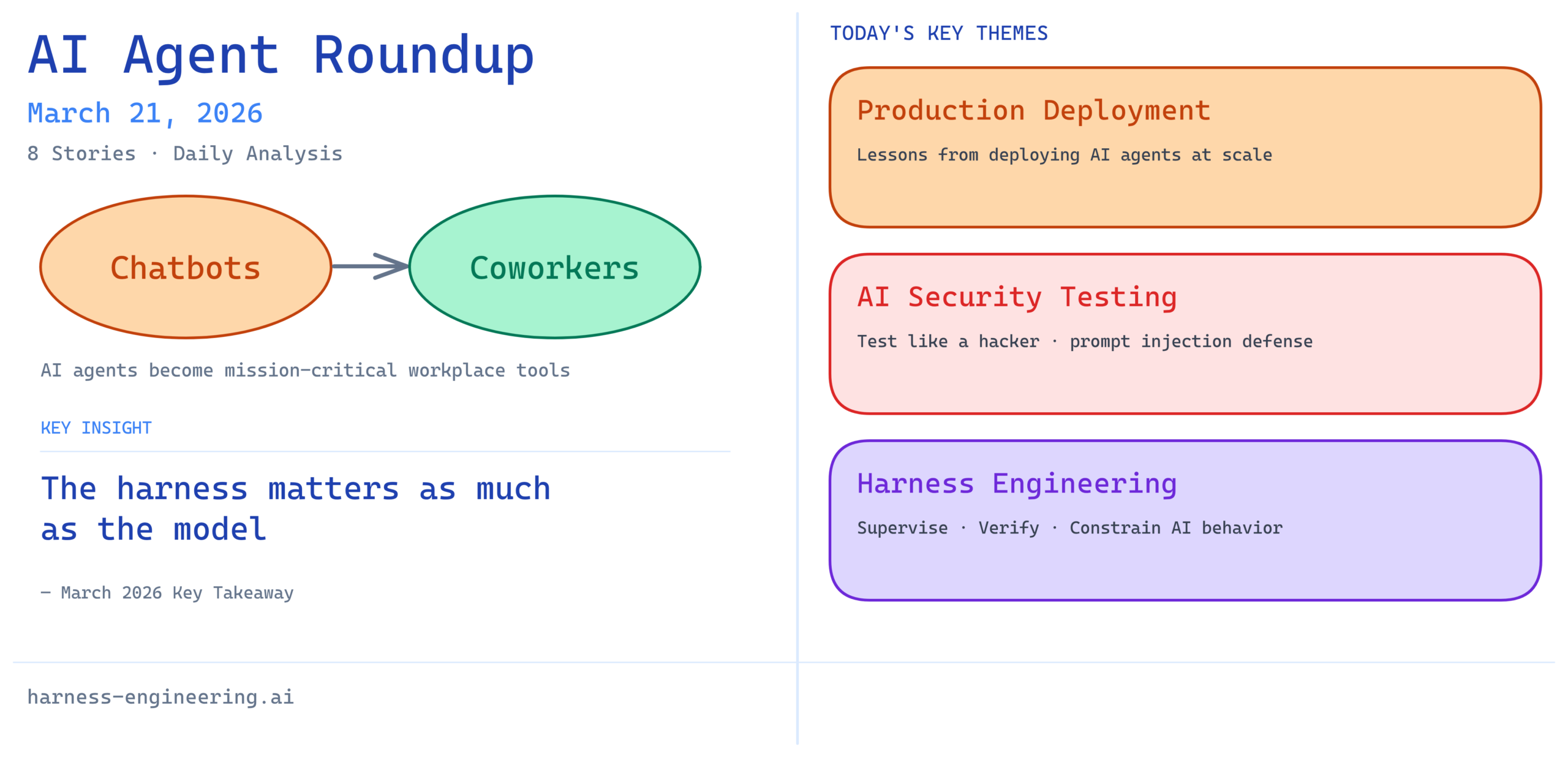

The AI agent landscape continues to shift dramatically as these systems move from experimental chatbots to mission-critical workplace tools. Today’s roundup highlights the critical intersection of production deployment, security considerations, and the emerging discipline of harness engineering that’s essential for responsible AI agent development and deployment.

1. Lessons From Building and Deploying AI Agents to Production

Real-world experiences from teams deploying AI agents at scale are revealing hard-won lessons that go well beyond initial prototypes. This resource draws from practical deployments to surface the patterns, pitfalls, and best practices that determine whether AI agents succeed or fail in production environments. Understanding these lessons early can save teams months of debugging and rework.

Analysis: As AI agents transition from research projects to operational systems, the gap between “works in development” and “works reliably at scale” becomes increasingly apparent. Organizations need to learn from early deployments to avoid costly mistakes with error handling, monitoring, and graceful degradation in their own systems.

2. Test Your AI Agents Like a Hacker — Automated Prompt Injection Attacks

Security vulnerabilities in AI agents are emerging as a critical concern, with prompt injection attacks representing one of the most insidious threats. This exploration of automated attack vectors shows how malicious inputs can bypass safety measures and cause agents to behave in unintended ways. Testing agents defensively—simulating how attackers might compromise them—is becoming a non-negotiable part of the deployment checklist.

Analysis: As AI agents gain access to more sensitive operations and real-world systems, the security implications multiply exponentially. Teams building agents for production must adopt adversarial testing practices to identify vulnerabilities before bad actors do. This represents a fundamental shift in how organizations approach AI safety and agent verification.

3. AI Agents Just Went From Chatbots to Coworkers

Recent announcements from major technology companies signal a seismic shift in AI deployment strategy—agents are moving from being conversational tools to becoming integrated members of the workforce. This transition from chatbot-like interactions to autonomous agents that handle complex, multi-step tasks reflects maturation in both the underlying models and the harness engineering frameworks supporting them. The distinction matters: coworkers have responsibilities, autonomy, and expected outcomes that far exceed simple Q&A systems.

Analysis: This evolution underscores the importance of robust harness engineering practices. When AI agents become workplace tools that other humans depend on, the infrastructure for managing, monitoring, and correcting them becomes as important as the agent’s capabilities themselves. Organizations investing in harness engineering now will have competitive advantages as this transition accelerates.

4. How I Eliminated Context-Switch Fatigue When Working With Multiple AI Agents in Parallel

Managing multiple AI agents operating simultaneously presents a novel challenge: context switching overhead when monitoring and coordinating between different agents, each with its own state, goals, and operational requirements. This practical discussion reveals solutions for organizing agent orchestration, standardizing communication protocols, and creating unified oversight systems that reduce cognitive load. The tactics shared address both technical and human factors in parallel agent management.

Analysis: This topic highlights an often-overlooked aspect of agent deployment—the human experience of working alongside multiple agents. As workplaces deploy more AI agents concurrently, the ability to coordinate and oversee them without exhausting human workers becomes crucial. Smart orchestration patterns and clear interfaces reduce friction and enable more effective human-agent collaboration.

5. Microsoft Just Launched an AI That Does Your Office Work for You — and It’s Built on Anthropic’s Claude

Microsoft’s launch of Copilot Cowork represents a watershed moment in AI agent deployment, bringing capable agents directly into the everyday office environment. Built on Anthropic’s Claude model, this system demonstrates how enterprise AI agents are being harnessed to automate routine office work across productivity applications. The partnership between Microsoft and Anthropic signals confidence in Claude’s reliability and safety profile for real-world, high-stakes applications.

Analysis: When major technology companies deploy AI agents into production at enterprise scale, it validates that the field has matured beyond experimentation. This development highlights the competitive advantage of robust harness engineering—systems that only work when properly managed, verified, and constrained tend to fail in the market. Microsoft’s choice of Claude suggests that Anthropic’s approach to AI safety and controllability is resonating with organizations deploying agents at scale.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering

AI coding agents represent some of the most complex agent applications, requiring deep technical understanding, error recovery, and context management across large codebases. This deep dive explores the foundational frameworks—scaffolding for code generation, harness engineering for constraining agent actions, and context engineering for ensuring agents operate with sufficient information. These elements work together to create coding agents that are more reliable, safer, and better integrated with developer workflows.

Analysis: Terminal and IDE environments are unforgiving—mistakes in code generation or agent actions can break builds, corrupt repositories, or introduce security vulnerabilities. The emphasis on harness and context engineering in this space reflects mature thinking about how to deploy powerful agents in high-consequence environments. Developers building coding agents should study these patterns closely.

7. Harness Engineering: Supervising AI Through Precision and Verification

As AI agents grow more capable and autonomous, the discipline of harness engineering—the practice of building systems that guide, constrain, and verify AI behavior—becomes increasingly central to responsible deployment. This exploration of precision in agent supervision and verification methodologies addresses the core question: how do we ensure AI agents behave as intended even in novel situations? Effective harness engineering requires thoughtful design of feedback loops, monitoring systems, and intervention mechanisms.

Analysis: Harness engineering is emerging as a critical discipline that organizations deploying AI agents must master. It’s not about making agents less capable—it’s about making them trustworthy and reliable. The best-performing organizations will be those that invest early in building institutional expertise around harness engineering principles.

8. AI Agents: Skill & Harness Engineering Secrets REVEALED!

The intersection of skill engineering—designing agents with the right capabilities and knowledge—and harness engineering creates a multiplier effect on agent performance. This content explores how advances in both dimensions unlock new possibilities for agent deployment. When skill engineering creates capable agents and harness engineering keeps them aligned with human intentions, the combination produces systems that organizations can confidently deploy.

Analysis: The framing of “secrets revealed” reflects how nascent this field still is—many practitioners are discovering these principles independently. As the field matures, these patterns will become standardized practices across organizations, similar to how testing frameworks and deployment automation became standard in software engineering.

Key Takeaway

March 2026 marks a turning point where AI agents transition from interesting research projects to essential workplace infrastructure. The common thread across today’s news is clear: capability alone is no longer sufficient. The organizations and practitioners succeeding with AI agents are those investing in production readiness, security, and harness engineering. The field is moving toward a discipline where the quality of the harness—the systems that guide, supervise, and verify agent behavior—matters as much as the quality of the agent itself. Teams building AI systems should treat harness engineering not as an afterthought but as a first-class engineering discipline worthy of the same rigor applied to core functionality.

For organizations deploying AI agents in production, the lesson is straightforward: invest in understanding production deployment patterns, build robust security testing practices, implement proper agent orchestration, and develop strong harness engineering capabilities. The competitive advantage will go to organizations that master these disciplines, not just those with the most capable base models.