The AI agent landscape continues to evolve at a breakneck pace. From major framework updates to real-world performance benchmarks and significant advances in model capabilities, today brings critical developments for anyone building with or deploying AI agents. Here’s what you need to know.

1. LangChain Remains Central to Agent Engineering

LangChain remains one of the most widely adopted frameworks for building AI agents. Its sustained GitHub activity and broad industry adoption underscore its importance in the fast-moving agent engineering ecosystem. As more developers build multi-step reasoning systems, LangChain’s composable abstractions and extensive integrations keep it a common component of the modern AI stack.

Analysis: LangChain’s staying power matters because it’s become the lingua franca of agent development—developers learning agents today will likely encounter LangChain in their stack. However, the emergence of competing frameworks (more on this below) suggests the ecosystem is maturing beyond single-framework dominance. Teams choosing their tech stack today should monitor how LangChain evolves to address emerging needs around state management, cost optimization, and observability.

2. AI Agents Benchmarked on Real Lending Workflows

A new case study benchmarks AI agents against real lending workflows, offering quantifiable evidence of where agents excel, and where they still struggle, on complex financial decision-making tasks. This kind of real-world validation matters as financial institutions weigh whether to deploy AI agents in credit assessment, loan processing, and risk analysis.

Analysis: This could be a turning point for AI agent adoption in financial services. Instead of relying on synthetic benchmarks, the case study tests agents on nuanced, high-stakes financial workflows, which has been a major hurdle for institutional adoption. The findings likely point to both compelling use cases and critical gaps (accuracy on edge cases, regulatory compliance, audit trails) that developers will need to address. For fintech companies, this research should help determine whether agents are ready for your workflows or whether hybrid human-AI systems remain necessary.

3. Skylos: Secure AI Development Through Static Analysis and Local LLMs

As AI agent systems grow more complex and are deployed into sensitive environments, Skylos introduces a security approach that combines static analysis with local LLM-powered agents. The tool addresses growing concerns about AI security by analyzing agent code and behavior at development time rather than relying solely on runtime safeguards. This shift toward proactive, local-first security matters more as agents gain access to databases, APIs, and financial systems.

Analysis: Security has been the persistent background worry of AI agent adoption: teams fear prompt injection, unauthorized API access, and unpredictable agent behavior in production. Skylos’s use of local LLMs for security analysis is clever because it avoids sending code to external APIs and keeps analysis inside your security perimeter. The tool exemplifies an important trend: as agents move from experiment to production, the security infrastructure around them must mature. Teams deploying agents to production should evaluate whether tools like Skylos fit their security posture.
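To make the static-analysis half of that idea concrete, here is a minimal sketch using Python’s standard `ast` module: it parses an agent tool’s source without running it and flags calls on a small deny-list. This is not Skylos’s implementation or API. The `RISKY_CALLS` set and the `call_name` and `find_risky_calls` helpers are hypothetical, and a tool like Skylos pairs analysis of this kind with local LLM-powered agents rather than relying on a fixed list.

```python
from __future__ import annotations

import ast

# Hypothetical deny-list for illustration; real tools use much richer rules.
RISKY_CALLS = {"eval", "exec", "os.system", "subprocess.run", "subprocess.Popen", "pickle.loads"}


def call_name(node: ast.Call) -> str | None:
    """Return a dotted name such as 'os.system' for simple call targets, else None."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return None


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Parse source without executing it and report (line, call) pairs on the deny-list."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = call_name(node)
            if name in RISKY_CALLS:
                findings.append((node.lineno, name))
    return sorted(findings)


# Example: a hypothetical agent tool module that shells out and evaluates input.
agent_tool = '''
import os
def run_shell(cmd):
    return os.system(cmd)
def calc(expr):
    return eval(expr)
'''
print(find_risky_calls(agent_tool))  # → [(4, 'os.system'), (6, 'eval')]
```

Because the code is only parsed, never executed, a check like this can run in CI or a pre-commit hook without any network access, which is the local-first property described above.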

4. Comprehensive Comparison of AI Agent Frameworks in 2026

A comprehensive comparison covering LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and more than 20 other frameworks offers guidance for navigating an increasingly crowded landscape. The analysis breaks down each framework’s strengths, weaknesses, and ideal use cases, from simple chatbots to complex multi-agent systems. With this many viable options, the question has shifted from “whether to use a framework” to “which framework fits your architecture.”

Analysis: The comparison reveals a maturing ecosystem in which frameworks are optimized for distinct problems rather than trying to be everything to everyone. LangGraph’s focus on state management, CrewAI’s emphasis on team-based orchestration, and the domain focus of newer entrants such as Mastra and DeerFlow show specialization replacing generalization. For new projects, resist the urge to default to the most popular framework; match your use case to each framework’s strengths instead. For established teams, this is a good moment to check whether your current framework still fits your evolving needs.

5. Understanding Deep Agents: Beyond Simple LLM Workflows

A recent video deep dive explores what distinguishes sophisticated AI agents from basic LLM workflows, examining the internal mechanisms that make coding agents reliable and capable. It breaks down the architectural patterns, memory systems, and feedback loops that separate production-grade agents from experimental prototypes. As AI coding tools (such as Claude, GitHub Copilot, and emerging specialized agents) evolve rapidly, understanding these internals is essential for developers and technical decision-makers.

Analysis: This distinction matters because it shifts the conversation from “can AI agents do X?” to “what makes a reliable agent that can do X repeatedly?” Understanding agent architecture helps teams diagnose why their agents fail, optimize costs, and make informed decisions about where to invest in agent engineering. For engineering leaders evaluating AI tooling, this is the conceptual foundation you need to assess vendor claims about “agentic” capabilities.
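A reliable agent is usually built around a feedback loop rather than a single model call. The sketch below assumes a generic propose-check-retry design, not the specific architecture covered in the video: `propose` stands in for an LLM call, `check` stands in for tests or a validator, and `AgentMemory` records every attempt with its feedback so the next proposal can take earlier failures into account. All names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentMemory:
    """Running record of (attempt, feedback) pairs the agent can consult."""
    history: list[tuple[str, str]] = field(default_factory=list)

    def add(self, attempt: str, feedback: str) -> None:
        self.history.append((attempt, feedback))


def run_agent(
    task: str,
    propose: Callable[[str, AgentMemory], str],  # stand-in for an LLM call
    check: Callable[[str], tuple[bool, str]],    # stand-in for tests or a validator
    max_steps: int = 5,
) -> tuple[str | None, AgentMemory]:
    """Propose, verify, and feed failures back until the check passes or steps run out."""
    memory = AgentMemory()
    for _ in range(max_steps):
        attempt = propose(task, memory)
        ok, feedback = check(attempt)
        memory.add(attempt, feedback)
        if ok:
            return attempt, memory
    return None, memory  # budget exhausted; memory shows what was tried


# Toy stand-ins: the "model" proposes the next integer; the "tests" accept only "3".
def toy_propose(task: str, memory: AgentMemory) -> str:
    return str(len(memory.history) + 1)


def toy_check(attempt: str) -> tuple[bool, str]:
    ok = attempt == "3"
    return ok, "pass" if ok else f"{attempt} failed"


result, mem = run_agent("find the value the tests accept", toy_propose, toy_check)
print(result, len(mem.history))  # → 3 3
```

Logging each attempt with its feedback is also what makes failures diagnosable: when the loop exhausts `max_steps`, the memory shows exactly where the agent got stuck.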

6. OpenAI Releases GPT-5.4 with 1 Million Token Context Window

OpenAI’s GPT-5.4 brings a major expansion: a 1 million token context window, paired with a new Pro Mode. This jump in context capacity changes what AI agents can attempt, since an agent can now hold large codebases, multiple documents, conversation histories, and system state in a single prompt with far less need for truncation or summarization. Pro Mode targets more complex reasoning tasks, raising the ceiling for what agents can accomplish in a single pass.

Analysis: A 1 million token window could substantially expand what agents can do. Agents can ingest much of a codebase during planning, keep longer conversation histories without summarization, and analyze problems with fuller context. The expansion comes with tradeoffs, though: longer contexts mean higher latency and cost, and they call for smarter retrieval strategies, because filling the window with irrelevant material wastes budget and can dilute the signal. Teams building coding agents, document analysis systems, and knowledge-intensive applications should start experimenting with GPT-5.4 now. Pro Mode may help agents that handle particularly complex reasoning, but teams should benchmark its cost against its benefit carefully.
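One way to avoid flooding a large window is to rank candidate chunks and pack only the relevant ones under an explicit token budget. The sketch below is a greedy packer under stated assumptions: relevance scores come from some retriever or reranker, and token counts use a rough four-characters-per-token heuristic instead of the model’s real tokenizer. `Chunk`, `estimate_tokens`, and `pack_context` are hypothetical names, not part of any OpenAI API.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    relevance: float  # assumed to come from a retriever or reranker


def estimate_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token for English); use the model's tokenizer in practice.
    return max(1, len(text) // 4)


def pack_context(chunks: list[Chunk], budget_tokens: int, min_relevance: float = 0.0) -> list[Chunk]:
    """Greedily keep the most relevant chunks that still fit within the token budget."""
    selected: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.relevance, reverse=True):
        if chunk.relevance < min_relevance:
            break  # sorted descending, so every remaining chunk is below the threshold too
        cost = estimate_tokens(chunk.text)
        if used + cost <= budget_tokens:
            selected.append(chunk)
            used += cost
    return selected


docs = [
    Chunk("a" * 400, relevance=0.9),  # ~100 tokens
    Chunk("b" * 800, relevance=0.2),  # ~200 tokens, below the threshold
    Chunk("c" * 200, relevance=0.7),  # ~50 tokens
    Chunk("d" * 600, relevance=0.8),  # ~150 tokens, skipped: would exceed the budget
]
picked = pack_context(docs, budget_tokens=200, min_relevance=0.5)
print([c.text[0] for c in picked])  # → ['a', 'c']
```

Setting `budget_tokens` well below the model’s maximum keeps latency and cost predictable even when the window could hold far more.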

7. Five Crazy AI Updates This Week: Context, Models, and Capabilities

This week’s broader AI update roundup highlights GPT-5.4’s context expansion as part of a larger wave of model and capability improvements across the industry. Alongside context window increases, the updates include refinements to reasoning patterns, improved instruction-following, and new capabilities for agent-based applications. The pace of change reflects intense competition among major AI labs to expand model capabilities and establish market leadership.

Analysis: The convergence of improvements this week, not only from OpenAI but across the industry, suggests AI development is in an acceleration phase. For teams building AI systems, the practical takeaway is to revisit capability assumptions quarterly rather than annually: a model that was unsuitable for a task six months ago may handle it well today. This rapid evolution also creates technical debt, because systems built around a specific model’s behavior may break when the underlying model changes.
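One hedge against that kind of debt is a small behavioral regression suite that runs before you switch models or versions. The sketch below is a minimal, hypothetical harness: `call_model` stands in for whatever client you actually use, and each case pairs a prompt with a property the output must satisfy. The two fake models illustrate a format drift that would silently break a downstream parser.

```python
from typing import Callable

# A case pairs a prompt with a property the model's output must satisfy.
Case = tuple[str, Callable[[str], bool]]


def run_behavior_suite(call_model: Callable[[str], str], cases: list[Case]) -> list[str]:
    """Return the prompts whose outputs no longer satisfy their expected property."""
    failures = []
    for prompt, expectation in cases:
        if not expectation(call_model(prompt)):
            failures.append(prompt)
    return failures


CASES: list[Case] = [
    ("Reply with only YES or NO: is 7 prime?", lambda out: out.strip() in {"YES", "NO"}),
    ("Return a JSON object with key 'ok'", lambda out: out.strip().startswith("{")),
]


def old_model(prompt: str) -> str:  # fake model that follows the requested format
    return "YES" if "prime" in prompt else '{"ok": true}'


def new_model(prompt: str) -> str:  # fake "upgraded" model that drifts into prose
    return "Yes, 7 is prime." if "prime" in prompt else '{"ok": true}'


print(run_behavior_suite(old_model, CASES))  # → []
print(run_behavior_suite(new_model, CASES))  # → ['Reply with only YES or NO: is 7 prime?']
```

Checking properties (format, required keys) rather than exact strings keeps the suite stable across harmless wording changes while still catching breaking ones.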

The Bigger Picture

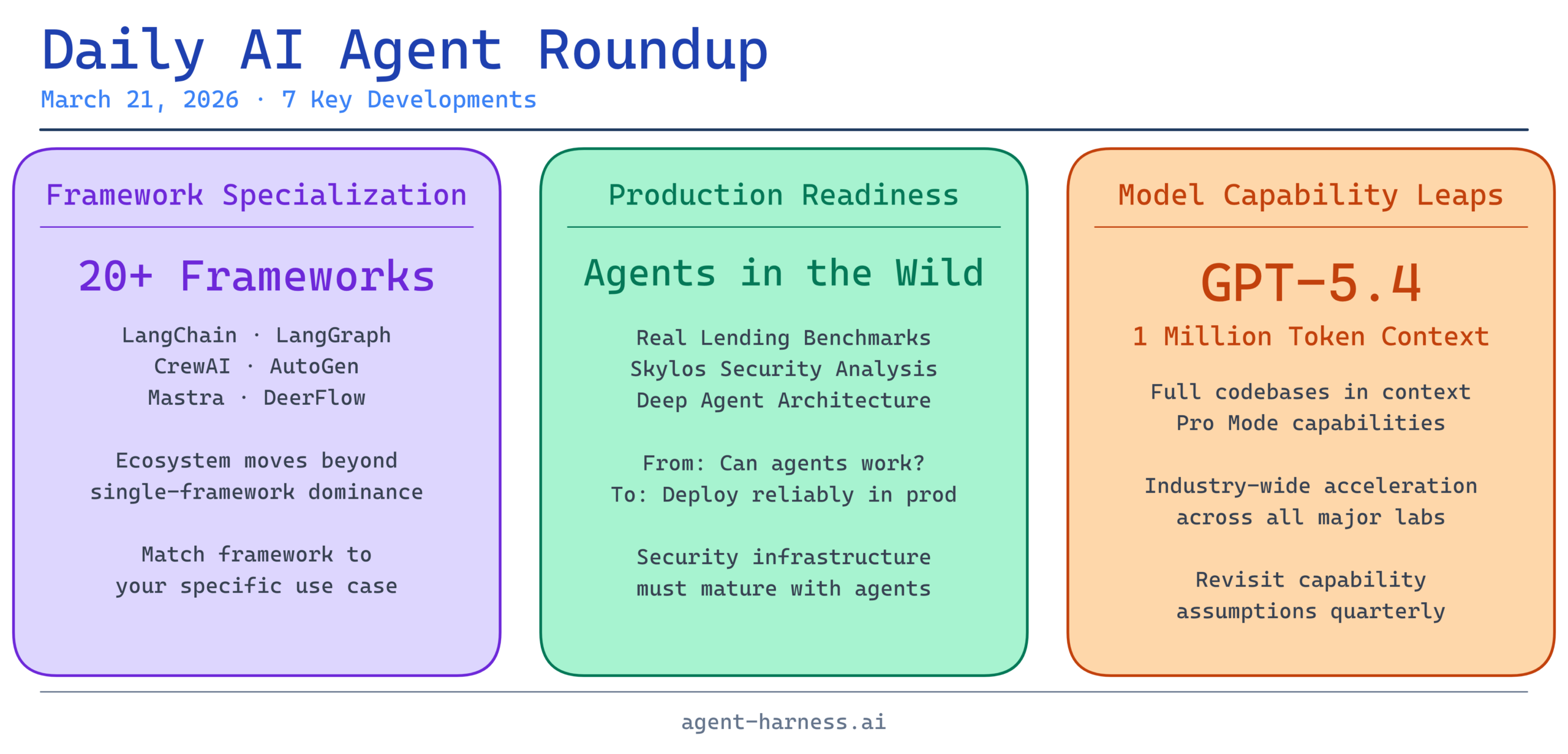

Three themes emerge from today’s news:

Framework Specialization: The AI agent framework ecosystem has moved beyond “one framework to rule them all.” Teams now choose frameworks optimized for their specific use case—orchestration, state management, security, or domain specialization.

Production Readiness: The convergence of real-world benchmarks (lending workflows), security tools (Skylos), and architectural discussions (deep agents) signals the community’s maturation from “can agents work?” to “how do we deploy agents reliably in production?”

Model Capability Jumps: GPT-5.4’s 1 million token window and competing improvements from other labs are expanding what agents can accomplish. Teams held back by token limits can now revisit applications that were previously impractical.

For AI agent developers and teams evaluating agent technology, today’s updates reinforce that the ecosystem is moving quickly from experimental to production-grade. Frameworks are maturing, security tooling is improving, real-world benchmarks are appearing, and the underlying models keep getting more capable. If you’ve been waiting for agents to mature, now is a good time to start, but success still depends on choosing the right framework, architecture, and approach for your specific problem.

Stay tuned to Agent Harness for tomorrow’s roundup. What AI agent developments are you tracking? Share your thoughts in the comments.