The AI agent landscape continues to evolve at a breakneck pace, with production deployments becoming increasingly sophisticated and critical to enterprise operations. Today’s roundup spans essential lessons from real-world implementations, emerging security challenges, workforce integration strategies, and the operational frameworks that enable teams to scale AI agents effectively. Whether you’re building agents for coding, office automation, or specialized tasks, these insights offer guidance for navigating the rapidly maturing AI agent ecosystem.

1. Lessons From Building and Deploying AI Agents to Production

Drawing from real-world implementations, this resource distills critical lessons learned when taking AI agents from concept to production at scale. The video explores the practical challenges teams encounter when moving beyond prototypes, including evaluation methodologies, integration patterns, and operational considerations that separate successful deployments from failed experiments.

Analysis: As AI agents transition from research projects to critical business infrastructure, capturing and sharing these hard-won lessons becomes invaluable. Teams struggling with production deployment bottlenecks benefit enormously from understanding what works in practice versus what looks good in a demo. These real-world case studies help organizations accelerate their timelines while avoiding expensive mistakes.

2. Test Your AI Agents Like a Hacker – Automated Prompt Injection Attacks

Security vulnerabilities in AI agents represent an emerging frontier in cybersecurity, with prompt injection attacks posing real threats to deployed systems. This content explores how adversaries can manipulate agent behavior through carefully crafted inputs, and demonstrates automated testing approaches to identify and remediate these vulnerabilities before agents reach production.

Analysis: As AI agents gain access to sensitive systems, data, and decision-making authority, their security posture becomes non-negotiable. Organizations deploying agents must adopt a “test like a hacker” mentality, treating prompt injection with the same rigor traditionally applied to SQL injection or XSS attacks. This represents a fundamental shift in how we approach AI safety—moving from theoretical exercises to practical, adversarial testing in operational contexts.

3. AI Agents Just Went From Chatbots to Coworkers

Recent announcements from major technology companies underscore a fundamental shift in AI deployment strategies, with agents moving beyond conversational interfaces into integrated workforce roles. These developments signal that organizations now expect AI agents to perform substantive work, collaborate with human teams, and take responsibility for meaningful business outcomes rather than merely answering questions.

Analysis: This transition represents a maturity milestone for the industry. When AI agents evolve from specialized tools to general coworkers, the expectations around reliability, consistency, and accountability fundamentally change. Organizations must now treat agent onboarding, performance management, and integration with human workflows with the same rigor applied to human hiring and team dynamics.

4. How I Eliminated Context-Switch Fatigue When Working with Multiple AI Agents in Parallel

Managing multiple concurrent AI agents introduces operational complexity that teams often underestimate, with cognitive load and coordination challenges potentially limiting agent deployment scale. This community-sourced insight shares practical strategies for orchestrating multiple agent instances without experiencing the mental fatigue that typically accompanies parallel context switching.

Analysis: As teams deploy more agents to handle diverse workstreams, the problem of coordinating between them becomes increasingly acute. Solutions that reduce cognitive overhead—whether through better interfaces, automated handoffs, or clearer state management—directly increase what teams can accomplish. This represents an often-overlooked but critical aspect of harness engineering that enables teams to operate at scale.

5. Microsoft Just Launched an AI That Does Your Office Work for You — Built on Anthropic’s Claude

Microsoft’s launch of Copilot Cowork exemplifies the enterprise AI agent trend, delivering an AI system purpose-built for everyday office tasks with the sophistication to actually reduce workload rather than merely augment it. The fact that this solution leverages Anthropic’s Claude model highlights how the foundation model landscape shapes what’s possible in agent-based applications.

Analysis: When technology giants begin shipping AI agents for everyday work, it signals that the technology has reached a reliability threshold acceptable to enterprise customers. This launch particularly matters because it demonstrates that agent sophistication and user accessibility aren’t mutually exclusive—consumers should expect both polish and capability. For engineering teams building agents, this raises the bar for what “production-ready” means.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering

This deep-dive explores the specialized engineering required to deploy AI agents in terminal environments, where the consequences of errors are often immediate and the requirements for context accuracy are stringent. The content addresses how scaffolding, harness engineering, and context engineering work in concert to enable agents that can reliably handle complex coding tasks.

Analysis: Terminal-based AI agents represent a particularly challenging deployment context, where agents must understand complex domain knowledge while operating in high-risk environments. The emphasis on harness engineering here underscores a crucial insight: the quality of the framework surrounding the agent often matters more than the underlying model. Well-designed harnesses that constrain agent behavior in productive ways enable safer, more reliable automation.

7. Harness Engineering: Supervising AI Through Precision and Verification

Moving beyond simple instruction-following, this content explores how harness engineering enables meaningful supervision of AI systems through verification mechanisms and precision constraints. The framework presented distinguishes between different supervision models and explores how precision—in prompts, in verification approaches, and in outcome measurement—directly correlates with AI reliability and trustworthiness.

Analysis: Harness engineering has emerged as perhaps the most underappreciated discipline in practical AI deployment. While foundation models capture headlines, it’s the careful engineering of constraints, verification loops, and output validation that transforms raw AI capabilities into reliable business systems. Teams that invest deeply in harness engineering consistently outperform those that treat it as an afterthought, especially at scale.

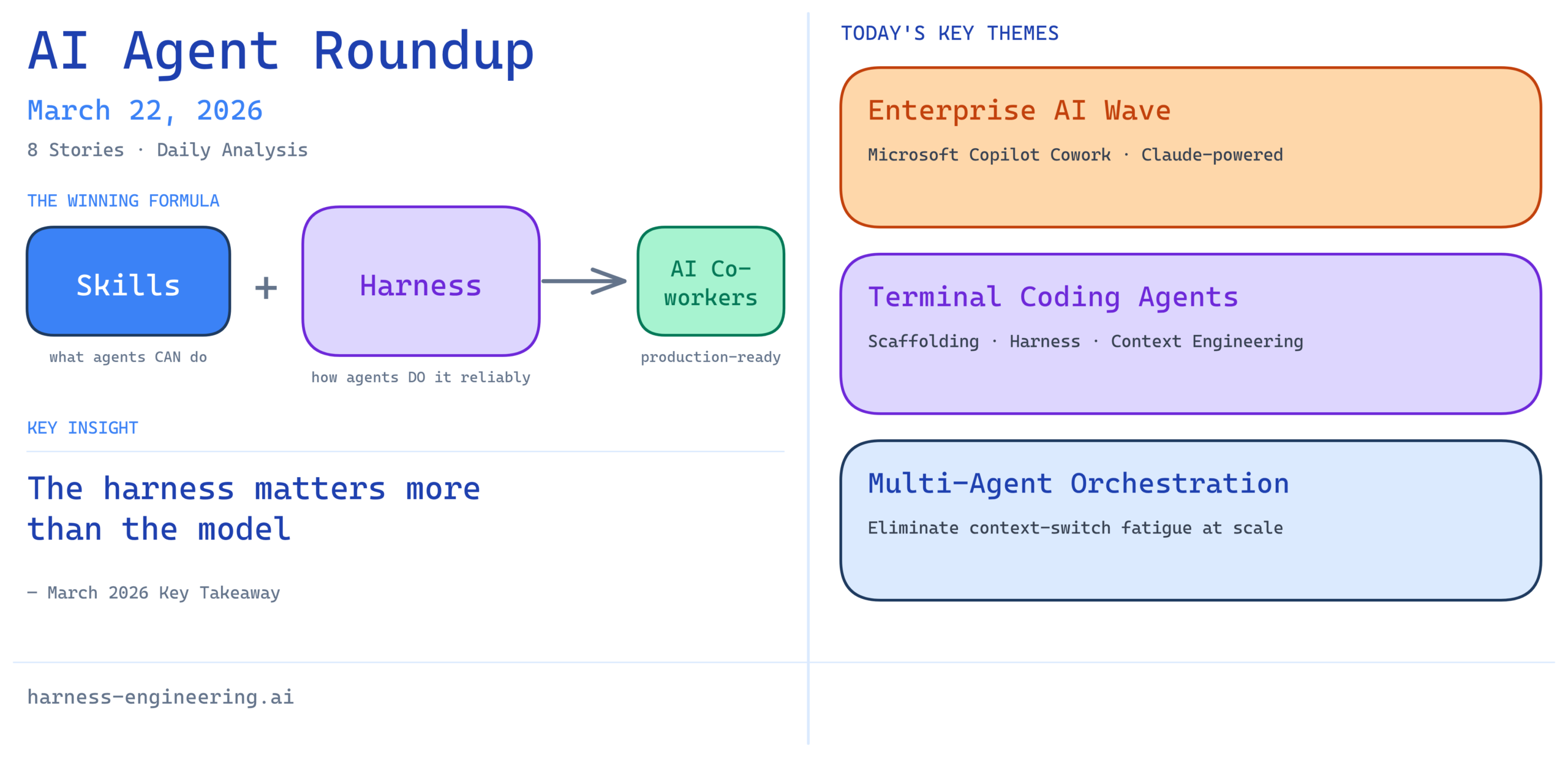

8. AI Agents: Skill & Harness Engineering Secrets Revealed

This concise exploration of skill and harness engineering reveals the often-hidden interdependencies that determine whether AI agents succeed or fail in practice. The content highlights how both skill engineering (configuring what agents can do) and harness engineering (controlling how they do it) require deep understanding and careful calibration.

Analysis: The framing of “skills” and “harnesses” as complementary engineering disciplines reflects a maturation in how the industry thinks about AI agent architecture. Skills without harnesses lead to unpredictable behavior; harnesses without meaningful skills constrain capability unnecessarily. The best agent systems reflect careful thought about this balance, often revealing insights applicable across the broader AI engineering landscape.

Today’s Takeaway

The daily news landscape for AI agents reveals an industry in transition—from experimental proofs-of-concept to production-grade systems that carry real responsibility. What emerges across today’s stories is a consistent theme: success with AI agents requires rigorous engineering discipline alongside technical capability. Whether it’s securing agents against prompt injection attacks, orchestrating multiple concurrent instances, or carefully engineering the harnesses that constrain and guide agent behavior, the winners in this space will be organizations that treat AI agent deployment with the same engineering rigor applied to critical infrastructure.

The most striking pattern is the gap between what’s technically possible with foundation models and what’s reliably achievable through careful harness engineering. As AI agents become coworkers rather than tools, this gap only widens—reliability, safety, and predictability matter more than raw capability. For engineering teams building agents today, these stories collectively suggest that investment in harness engineering, verification frameworks, and operational sophistication will prove far more valuable than chasing the latest model improvements.

Next Steps for Engineering Teams:

– Review your agent testing practices against the security standards highlighted in today’s content

– Audit your harness engineering approaches for precision and verification capability

– Consider how your team will manage coordination challenges as agent deployments scale

– Prioritize operational readiness alongside capability development

Stay tuned to harness-engineering.ai for tomorrow’s essential AI agent insights.