By Jamie Park — Educator and Career Coach at Harness Engineering Academy

When people first encounter a large language model, the interaction feels almost magical. You type something in, and something intelligent comes back. But here is the truth that separates casual users from real AI engineers: what you put in determines almost everything about what comes out.

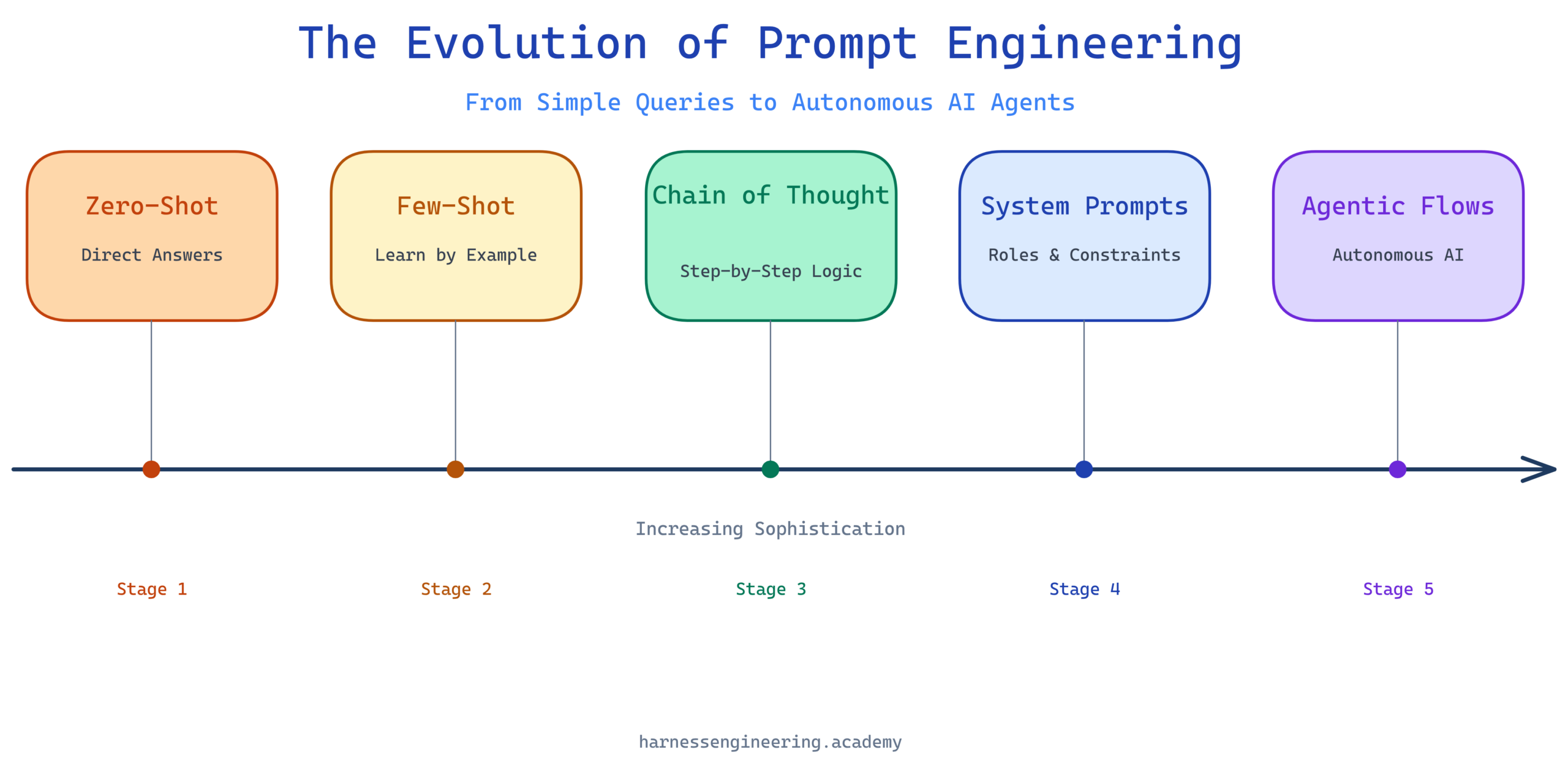

Prompt engineering has come a long way from the days of typing a simple question into a chat box. Today, skilled AI engineers architect entire conversation flows, manage context windows deliberately, chain reasoning steps, and design multi-agent systems that coordinate across tools and APIs. If you are just getting started, do not feel overwhelmed — every expert started exactly where you are right now.

This guide walks you through the full arc of prompt engineering, from its earliest, simplest form all the way to the sophisticated context-aware and flow-based techniques powering the most capable AI agents being built today. By the end, you will have a clear mental model of how prompting has matured and a practical foundation to build on.

Where It All Began: The Zero-Shot Era

What Is a Zero-Shot Prompt?

The first thing most people do when they meet a language model is ask it a direct question. No preamble, no examples, no setup. Just a question.

This is called zero-shot prompting — “zero” because you are giving the model zero examples of what a good answer looks like.

Example:

Translate this sentence to French:

"The cat sat on the mat."

For simple, well-defined tasks, this works remarkably well. Models trained on vast amounts of text have seen countless translation examples during training, so they can answer accurately without any hand-holding.

But zero-shot prompting has real limits. The moment you ask for something nuanced — a specific tone, a structured output format, a multi-step reasoning process — a plain question often produces results that miss the mark. The model has no context for what “good” looks like in your specific situation.

Why Zero-Shot Falls Short

Imagine asking a new intern to write a client proposal. If you hand them a blank page and say “write me a proposal,” you will get something generic. But if you show them three winning proposals from your firm first, they immediately understand the style, structure, and tone you expect.

Language models work the same way. Zero-shot is the blank-page approach. It works for simple tasks, but complex work demands more.

The First Major Leap: Few-Shot Prompting

Teaching by Example

Few-shot prompting solves the blank-page problem by providing the model with a small number of worked examples before your actual request. You are essentially demonstrating the pattern you want followed.

Zero-shot version:

Classify the sentiment of this review: "The battery died after two hours."

Few-shot version:

Classify the sentiment of each review as Positive, Negative, or Neutral.

Review: "Absolutely love this product, works perfectly!"
Sentiment: Positive

Review: "It arrived damaged and customer service ignored my emails."
Sentiment: Negative

Review: "It does what it says it does, nothing more."
Sentiment: Neutral

Review: "The battery died after two hours."
Sentiment:

The model now has three anchors. They show it your classification labels, your output format, and the calibration of your judgments. The output is typically far more consistent and closer to what you expect.
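To make the pattern reusable, the few-shot prompt above can be assembled from data rather than typed by hand. The following is a minimal sketch; the function name `build_few_shot_prompt` and the `EXAMPLES` list are illustrative names, not part of any library.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    instruction: str, examples: List[Tuple[str, str]], query: str
) -> str:
    """Assemble the instruction, worked examples, and the unanswered query."""
    lines = [instruction, ""]
    for review, label in examples:
        lines.append(f'Review: "{review}"')
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # Leave the final label blank so the model completes the pattern.
    lines.append(f'Review: "{query}"')
    lines.append("Sentiment:")
    return "\n".join(lines)


# The three worked examples from the article.
EXAMPLES = [
    ("Absolutely love this product, works perfectly!", "Positive"),
    ("It arrived damaged and customer service ignored my emails.", "Negative"),
    ("It does what it says it does, nothing more.", "Neutral"),
]

prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as Positive, Negative, or Neutral.",
    EXAMPLES,
    "The battery died after two hours.",
)
print(prompt)
```

Keeping the examples in a list makes it easy to swap them out and compare how different example sets change the output.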

When to Use Few-Shot Prompting

Few-shot prompting shines whenever you need:

- Consistent formatting (structured JSON, specific markdown layouts)

- Custom classification categories that may not be obvious from context

- Tone and style matching (writing in the voice of a specific brand or persona)

- Edge case handling (showing how the model should treat ambiguous inputs)

A good rule of thumb: if you find yourself correcting the model’s output format repeatedly, it is a signal to switch to few-shot and show it exactly what you want.

Adding Depth: Chain-of-Thought Prompting

The Power of Thinking Out Loud

One of the most impactful discoveries in applied prompt engineering is chain-of-thought (CoT) prompting. The core idea is simple: instead of asking a model to jump straight to an answer, you encourage it to reason through the problem step by step.

This matters enormously for tasks involving logic, math, multi-step analysis, or any problem where the correct answer depends on getting intermediate steps right.

Without chain-of-thought:

A store sells apples for $0.50 each and oranges for $0.75 each.

If Sarah buys 4 apples and 3 oranges, how much does she spend in total?

Asked for the total directly, the model might skip the intermediate arithmetic and answer $3.50 (incorrect).

With chain-of-thought:

A store sells apples for $0.50 each and oranges for $0.75 each.

If Sarah buys 4 apples and 3 oranges, how much does she spend in total?

Let's think through this step by step.

Now the model is prompted to work methodically:

- 4 apples × $0.50 = $2.00

- 3 oranges × $0.75 = $2.25

- Total = $2.00 + $2.25 = $4.25

The correct answer emerges naturally from the reasoning process.
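If you want to check a model's arithmetic yourself, the same steps can be reproduced in a few lines. This is only a verification sketch; the variable names are illustrative, and `Decimal` is used so that currency values are not subject to floating-point rounding.

```python
from decimal import Decimal

# Prices and quantities from the example above.
prices = {"apple": Decimal("0.50"), "orange": Decimal("0.75")}
basket = {"apple": 4, "orange": 3}

# Step 1: one subtotal per item. Step 2: add the subtotals.
subtotals = {item: prices[item] * qty for item, qty in basket.items()}
total = sum(subtotals.values(), Decimal("0"))

for item, amount in subtotals.items():
    print(f"{basket[item]} x {item}: ${amount}")
print(f"Total: ${total}")
```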

Zero-Shot Chain-of-Thought

A particularly elegant shortcut discovered by researchers is appending a single phrase to trigger step-by-step reasoning without writing out full examples:

Solve this problem. Think step by step before giving your final answer.

This simple addition has been reported to improve accuracy on arithmetic and other multi-step reasoning tasks, though the size of the gain depends on the model and the task. If you are not already adding reasoning scaffolding to your prompts for multi-step tasks, start today.
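In code, zero-shot chain-of-thought usually comes down to two small helpers: one that appends the trigger phrase, and one that pulls the final answer out of the reasoning. The sketch below assumes you ask the model to end with a line starting with "Final answer:". That marker is a convention chosen for this example, not a standard, and `sample_response` is hand-written text standing in for a model reply.

```python
import re
from typing import Optional

# Assumed convention: ask for a clearly marked last line so it can be parsed.
COT_SUFFIX = (
    "Think step by step, then give the result on a last line "
    "starting with 'Final answer:'."
)


def with_chain_of_thought(question: str) -> str:
    """Append the reasoning trigger to a plain question."""
    return f"{question.strip()}\n\n{COT_SUFFIX}"


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Final answer:' line, or None if absent."""
    matches = re.findall(
        r"^Final answer:\s*(.+)$", response, flags=re.MULTILINE | re.IGNORECASE
    )
    return matches[-1].strip() if matches else None


# Hand-written stand-in for a model reply, used only to exercise the parser.
sample_response = (
    "4 apples x $0.50 = $2.00\n"
    "3 oranges x $0.75 = $2.25\n"
    "Final answer: $4.25"
)
print(extract_final_answer(sample_response))
```

Separating the reasoning from the marked answer lets downstream code use the result without having to interpret the intermediate steps.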

The Architecture Layer: System Prompts

Setting the Stage Before the Conversation Begins

As language model APIs became more accessible, engineers gained access to something powerful: the system prompt — a separate instruction block that precedes the conversation and sets the model’s overall behavior, persona, constraints, and context.

Think of the system prompt as the briefing you give an employee before they walk into a client meeting. It establishes who they are, what they know, how they should behave, and what they should never say.

A basic system prompt:

You are a helpful customer support assistant for TechFlow, a software company that makes project management tools. You are friendly, concise, and always escalate billing issues to the human support team. You never discuss competitor products or make promises about future features.

Every message the user sends is now filtered through this lens. The model is no longer a general-purpose assistant — it is a focused, constrained agent with a clear role.
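Many chat-style APIs represent a conversation as a list of messages, each with a role and some content, with the system prompt placed first. The sketch below assumes that `role`/`content` dictionary shape; it is a common convention rather than the format of any one provider, and `build_messages` and the sample user message are illustrative.

```python
from typing import Dict, List

SYSTEM_PROMPT = (
    "You are a helpful customer support assistant for TechFlow, a software company "
    "that makes project management tools. You are friendly, concise, and always "
    "escalate billing issues to the human support team. You never discuss competitor "
    "products or make promises about future features."
)


def build_messages(
    system_prompt: str, history: List[Dict[str, str]], user_message: str
) -> List[Dict[str, str]]:
    """Place the system prompt first, then prior turns, then the new user message."""
    return [
        {"role": "system", "content": system_prompt},
        *history,
        {"role": "user", "content": user_message},
    ]


messages = build_messages(SYSTEM_PROMPT, [], "Can you refund my last invoice?")
for message in messages:
    print(message["role"], "->", message["content"][:60])
```

Because the system message is rebuilt into every request, the same instructions apply to each turn of the conversation.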

What Goes Into a Strong System Prompt

Effective system prompts typically cover several layers:

1. Role and identity — Who is this assistant and what is its purpose?

2. Knowledge scope — What should it know, and what should it acknowledge it does not know?

3. Behavioral constraints — What should it never do? (Refusals, guardrails, topic restrictions)

4. Output format preferences — Should responses be bullet points? Short paragraphs? JSON?

5. Tone and communication style — Formal or casual? Technical or plain language?

6. Escalation and fallback behavior — What should it do when it hits a question outside its scope?

As you build more sophisticated AI products, system prompt design becomes a craft in itself. Small changes in wording can have outsized effects on model behavior — and testing your system prompts systematically is a skill every serious AI engineer needs to develop.
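One way to keep those six layers explicit, and easy to test one at a time, is to store each as its own field and render them into a single prompt. This is a sketch under that assumption: the `SystemPromptSpec` class is hypothetical, and the field values are illustrative text loosely based on the TechFlow example.

```python
from dataclasses import dataclass, fields


@dataclass
class SystemPromptSpec:
    """One field per layer of the system prompt."""

    role: str
    knowledge_scope: str
    constraints: str
    output_format: str
    tone: str
    fallback: str

    def render(self) -> str:
        """Emit one labeled section per layer, in declaration order."""
        sections = []
        for field in fields(self):
            label = field.name.replace("_", " ").upper()
            sections.append(f"{label}:\n{getattr(self, field.name)}")
        return "\n\n".join(sections)


spec = SystemPromptSpec(
    role="You are a customer support assistant for TechFlow, which makes project management tools.",
    knowledge_scope="You know TechFlow's product. Say so when you do not know something.",
    constraints="Never discuss competitor products or promise future features.",
    output_format="Short paragraphs.",
    tone="Friendly and concise.",
    fallback="Escalate billing issues to the human support team.",
)
system_prompt = spec.render()
print(system_prompt)
```

With the layers separated, you can change one field, rerun your test conversations, and attribute any change in behavior to that edit.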

The Memory Problem: Understanding Context Windows

What Is a Context Window?

Every language model has a context window — the total amount of text it can “see” and reason over at one time. Early models had context windows of a few thousand tokens (roughly equivalent to a few pages of text). Modern models have extended this to hundreds of thousands of tokens, with some approaching millions.

This has profound implications for prompt engineering. The context window is your workspace. Everything the model knows about your current task must fit inside it: the system prompt, the conversation history, any documents you have injected, the user’s current message, and the model’s response.
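The "everything must fit" constraint can be treated as a budget. The sketch below keeps the system prompt and the current message, reserves room for the reply, and then keeps as many of the most recent turns as fit. The four-characters-per-token estimate is a rough assumption for illustration only; a real system would use the tokenizer for its model. All function names are hypothetical.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Very rough estimate: about four characters per token (not a real tokenizer)."""
    return max(1, len(text) // 4)


def fit_history(
    system_prompt: str,
    history: List[str],
    user_message: str,
    context_limit: int,
    reserve_for_reply: int,
) -> List[str]:
    """Keep the newest turns that fit once fixed parts and the reply are budgeted."""
    budget = context_limit - reserve_for_reply
    used = estimate_tokens(system_prompt) + estimate_tokens(user_message)
    if used > budget:
        raise ValueError("system prompt and user message alone exceed the budget")
    kept: List[str] = []
    # Walk from newest to oldest so the most recent turns survive.
    for turn in reversed(history):
        cost = estimate_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

Dropping the oldest turns is the simplest policy; replacing them with a summary is a common refinement.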

Why Context Management Is a Core Engineering Skill

As conversations grow longer or as you inject more background documents, you will eventually bump against context limits. But even before hitting the hard limit, there is a subtler problem: relevance dilution. When a context window is full of tangentially related information, the model’s attention gets spread thin and its performance on your core task degrades.

This means context management is not just a technical constraint — it is a design challenge. Skilled prompt engineers ask:

- What information does the model actually need right now?

- Can I summarize earlier conversation turns to free up space?

- Should I use retrieval (pulling relevant chunks from a database) rather than stuffing everything into the prompt?

- How do I structure information so the model finds what it needs quickly?
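The retrieval question above can be made concrete with a toy example. The sketch below ranks text chunks by how many words they share with the query and keeps only the best matches. Production systems typically use embedding similarity instead of word overlap; this stand-in only shows the shape of the idea. The chunk texts and function names are invented for illustration.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: List[str], top_k: int = 2) -> List[str]:
    """Rank chunks by distinct words shared with the query; drop zero-overlap chunks."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(chunk)), chunk) for chunk in chunks]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:top_k]]


# Hypothetical knowledge-base chunks.
CHUNKS = [
    "Reports can be exported to CSV from the Reports tab.",
    "Billing issues are escalated to the human support team.",
    "Team members are invited from the Settings page.",
]

print(retrieve("Can't export reports to CSV", CHUNKS, top_k=1))
```

Only the selected chunks are then injected into the prompt, which keeps the context focused on the current question.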

Practical Context Management Techniques

Summarization: Periodically compress older conversation turns into a concise summary and replace the raw history with it.

Retrieval-augmented generation (RAG): Rather than loading entire documents, retrieve only the specific passages relevant to the current query and inject those.

Structured injection: When you do inject context, organize it clearly with labels and delimiters so the model can navigate it:

[USER PROFILE]
Name: Alex Chen
Plan: Professional
Account age: 14 months
Recent issues: None
[END USER PROFILE]

[CURRENT TICKET]
Subject: Can't export reports to CSV
Priority: Medium
[END CURRENT TICKET]
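Labeled blocks like these are easiest to keep consistent when they are generated from data. A minimal sketch, assuming the bracketed `[LABEL]` and `[END LABEL]` delimiter style shown above; `render_section` and `render_context` are illustrative helper names.

```python
from typing import Dict


def render_section(label: str, fields: Dict[str, str]) -> str:
    """Wrap key/value lines in matching [LABEL] and [END LABEL] delimiters."""
    lines = [f"[{label}]"]
    lines.extend(f"{key}: {value}" for key, value in fields.items())
    lines.append(f"[END {label}]")
    return "\n".join(lines)


def render_context(sections: Dict[str, Dict[str, str]]) -> str:
    """Render every section, separated by a blank line."""
    return "\n\n".join(
        render_section(label, fields) for label, fields in sections.items()
    )


context = render_context(
    {
        "USER PROFILE": {
            "Name": "Alex Chen",
            "Plan": "Professional",
            "Account age": "14 months",
            "Recent issues": "None",
        },
        "CURRENT TICKET": {
            "Subject": "Can't export reports to CSV",
            "Priority": "Medium",
        },
    }
)
print(context)
```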

Conversations as Engineering: Multi-Turn Dialogue Design

Beyond Single Exchanges

The earliest prompt engineering was transactional — one input, one output, done. Modern AI applications are conversational — the model and user exchange multiple turns, and the quality of that ongoing dialogue determines the user experience.

Designing good multi-turn conversations requires thinking about:

Turn structure: How does each exchange build on the previous one? Is the model maintaining the right state?

Clarification loops: When should the model ask follow-up questions rather than making assumptions?

Graceful topic transitions: How does the model handle a user who shifts from one topic to another mid-conversation?

Memory and callbacks: Can the model correctly reference something the user mentioned five turns ago?

A Real-World Multi-Turn Example

Here is a simplified customer onboarding flow showing how turn design matters:

Turn 1 — User: “I want to set up my account.”

Model: “Great, let’s get started! What industry is your team in?”

Turn 2 — User: “We are in healthcare.”

Model: “Perfect. Healthcare teams often need our HIPAA compliance settings enabled. Would you like me to walk you through that setup, or would you prefer to start with the basic account configuration first?”

Turn 3 — User: “Let’s do basic setup first.”

Model: “Sounds good. How many team members will be using the platform?”

Each turn does meaningful work: gathering information, surfacing relevant options, and guiding the user toward a goal. This is conversation design — and it is as much a product skill as a technical one.
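Behind a flow like this there is usually some explicit state that records what has been collected so far and decides what to ask next. The sketch below models that as simple slot filling. The slot names and helper functions are hypothetical, and the HIPAA branch from Turn 2 is left out to keep the example short.

```python
from typing import Dict, Optional

# One question per piece of information the flow needs, in the order to ask.
QUESTIONS = {
    "industry": "What industry is your team in?",
    "team_size": "How many team members will be using the platform?",
}


def next_question(state: Dict[str, str]) -> Optional[str]:
    """Return the question for the first unanswered slot, or None when done."""
    for slot, question in QUESTIONS.items():
        if slot not in state:
            return question
    return None


def record_answer(state: Dict[str, str], slot: str, answer: str) -> Dict[str, str]:
    """Return a new state with the answer stored, leaving the old state untouched."""
    updated = dict(state)
    updated[slot] = answer
    return updated


state: Dict[str, str] = {}
print(next_question(state))
state = record_answer(state, "industry", "healthcare")
print(next_question(state))
```

Keeping the state outside the model means the application, not the conversation history alone, decides when onboarding is complete.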

The Frontier: Agentic Flows and Multi-Step Orchestration

From Conversations to Workflows

The most exciting development in modern prompt engineering is the shift from conversational AI to agentic AI — systems that do not just respond but take actions, use tools, and complete multi-step workflows autonomously.

In an agentic flow, a language model might:

- Receive a high-level goal from a user (“Research the top five competitors and summarize their pricing”)

- Decide which tools to use (web search, document reader, data formatter)

- Execute those tools in sequence (or in parallel)

- Evaluate its own intermediate outputs

- Decide whether to loop back, continue, or surface a result to the user

This is fundamentally different from the zero-shot era. The model is no longer answering a question — it is executing a plan.
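The steps listed above amount to a loop: decide on an action, run a tool, record the result, and repeat until done. The sketch below shows that control flow with the model replaced by a scripted function, so it runs without any API. Every name here (`run_agent`, `scripted_decide`, `fake_search`) is a stand-in, and a real agent would call a model inside `decide`.

```python
from typing import Callable, Dict, List

Action = Dict[str, str]


def run_agent(
    goal: str,
    decide: Callable[[str, List[str]], Action],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> str:
    """Ask `decide` for the next action, run the tool, stop on 'finish' or the step limit."""
    observations: List[str] = []
    for _ in range(max_steps):
        action = decide(goal, observations)
        if action["tool"] == "finish":
            return action["input"]
        tool = tools[action["tool"]]
        observations.append(tool(action["input"]))
    return "Stopped: step limit reached."


def scripted_decide(goal: str, observations: List[str]) -> Action:
    # Stand-in for a model call: search once, then finish with what was found.
    if not observations:
        return {"tool": "search", "input": goal}
    return {"tool": "finish", "input": f"Summary based on: {observations[-1]}"}


def fake_search(query: str) -> str:
    # Placeholder tool; a real one would call a search service.
    return f"(placeholder results for '{query}')"


result = run_agent("competitor pricing", scripted_decide, {"search": fake_search})
print(result)
```

The `max_steps` limit is a simple guard against an agent that never decides to finish.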

Prompt Engineering Inside Agentic Systems

Agentic architectures introduce new prompt engineering challenges:

Tool-use prompting: You need to clearly describe what tools are available, when to use each one, and how to format tool calls. Ambiguous tool descriptions lead to wrong tool choices.

Self-evaluation prompts: Agents often need to check their own work. Prompts like “Review your previous output. Does it fully answer the original question? If not, what is missing?” often catch gaps that a single pass would miss.

Handoff prompts: In multi-agent systems where one agent passes work to another, the handoff message must contain exactly the right context — not too much, not too little — for the receiving agent to continue effectively.

Goal decomposition: Agents often receive complex, open-ended goals. Prompts that guide structured decomposition (“Break this goal into a numbered list of concrete subtasks”) help avoid vague, wandering execution.
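For tool-use prompting in particular, it helps to keep tool descriptions in one place and to validate whatever tool call the model returns before running it. The sketch below assumes the model is asked to reply with JSON containing `tool` and `arguments` keys. That format, the `TOOLS` registry, and the `read_document` tool are assumptions made for this example, not the interface of a specific framework.

```python
import json
from typing import Any, Dict

# Hypothetical tool registry: what each tool does and which arguments it needs.
TOOLS: Dict[str, Dict[str, Any]] = {
    "web_search": {
        "description": "Search the web. Use for facts you do not already have.",
        "required": ["query"],
    },
    "read_document": {
        "description": "Read a document by its ID. Use when the user names a document.",
        "required": ["document_id"],
    },
}


def describe_tools(tools: Dict[str, Dict[str, Any]]) -> str:
    """Render the registry as a bullet list to paste into the prompt."""
    lines = []
    for name, spec in tools.items():
        args = ", ".join(spec["required"])
        lines.append(f"- {name}({args}): {spec['description']}")
    return "\n".join(lines)


def parse_tool_call(raw: str, tools: Dict[str, Dict[str, Any]]) -> Dict[str, Any]:
    """Parse a JSON tool call and reject unknown tools or missing arguments."""
    call = json.loads(raw)
    name = call.get("tool")
    if name not in tools:
        raise ValueError(f"unknown tool: {name}")
    args = call.get("arguments", {})
    missing = [key for key in tools[name]["required"] if key not in args]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return {"tool": name, "arguments": args}


print(describe_tools(TOOLS))
```

Rejecting malformed calls early gives you a clear error to feed back to the model instead of a failure inside the tool itself.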

A Simple Agentic Flow Example

Here is a minimal example of what a prompt chain for an agentic research task might look like:

Prompt 1 — Task decomposition:

You are a research agent. Your goal is: [GOAL].
Break this into a list of 3-5 specific subtasks, each of which can be completed with a single web search or document lookup.

Prompt 2 — Execution (per subtask):

You are executing subtask: [SUBTASK].
Use the web_search tool to find relevant information.
Return a concise summary of findings (3-5 sentences) with sources.

Prompt 3 — Synthesis:

You have completed the following research subtasks:

[SUBTASK 1]: [FINDINGS]
[SUBTASK 2]: [FINDINGS]
[SUBTASK 3]: [FINDINGS]

Synthesize these findings into a coherent summary addressing the original goal: [GOAL].
Be concise, accurate, and cite your sources.

Each prompt in this chain is simple and focused. The complexity lives in the architecture — how you sequence, route, and connect these prompts — not in any single prompt’s length or complexity.
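Wired together, the three prompts form a short pipeline. The sketch below shows one possible way to sequence them. `call_model` is a placeholder for whatever function sends a prompt to your model and returns text, and `fake_model` returns canned strings so the example runs offline. No real search tool is invoked here.

```python
from typing import Callable

DECOMPOSE = (
    "You are a research agent. Your goal is: {goal}.\n"
    "Break this into a list of 3-5 specific subtasks, each of which can be "
    "completed with a single web search or document lookup."
)
EXECUTE = (
    "You are executing subtask: {subtask}.\n"
    "Use the web_search tool to find relevant information.\n"
    "Return a concise summary of findings (3-5 sentences) with sources."
)
SYNTHESIZE = (
    "You have completed the following research subtasks:\n{research}\n\n"
    "Synthesize these findings into a coherent summary addressing the "
    "original goal: {goal}.\nBe concise, accurate, and cite your sources."
)


def run_chain(goal: str, call_model: Callable[[str], str]) -> str:
    """Decompose the goal, run each subtask, then synthesize the findings."""
    plan = call_model(DECOMPOSE.format(goal=goal))
    # Assume one subtask per line, optionally prefixed with a dash.
    subtasks = [line.strip("- ").strip() for line in plan.splitlines() if line.strip()]
    findings = [(task, call_model(EXECUTE.format(subtask=task))) for task in subtasks]
    research = "\n".join(f"{task}: {found}" for task, found in findings)
    return call_model(SYNTHESIZE.format(research=research, goal=goal))


def fake_model(prompt: str) -> str:
    # Canned replies standing in for a real model.
    if prompt.startswith("You are a research agent"):
        return "- Find competitor A pricing\n- Find competitor B pricing"
    if prompt.startswith("You are executing subtask"):
        return "placeholder findings"
    return "placeholder synthesis"


print(run_chain("Research competitor pricing", fake_model))
```

Because `call_model` is passed in, the same chain can be exercised with canned replies in tests and with a real model in production.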

Pulling It All Together: The Prompt Engineering Maturity Model

Looking back at everything we have covered, you can see a clear progression:

| Stage | Technique | What It Solves |
|---|---|---|
| 1 | Zero-shot | Getting a quick, direct answer |
| 2 | Few-shot | Consistent format and calibration |
| 3 | Chain-of-thought | Complex reasoning and logic |
| 4 | System prompts | Persistent behavior and role definition |
| 5 | Context management | Long conversations and document-heavy tasks |
| 6 | Multi-turn design | Rich, goal-oriented dialogues |
| 7 | Agentic flows | Autonomous multi-step task execution |

You do not need to master all seven levels before you start building. Most real-world projects begin at levels 1 through 3, and you layer in more sophistication as the complexity of your use case demands it. The key is knowing which tool to reach for and why.

Practical Next Steps for Building Your Skills

If you are just starting out, here is a simple progression to follow:

Week 1 — Get comfortable with zero-shot and few-shot. Pick a task you care about (summarizing articles, classifying feedback, drafting emails) and experiment with how examples change the output. Keep a log of what works.

Week 2 — Introduce chain-of-thought. Take a problem that requires multiple steps — a calculation, a decision framework, a structured analysis — and practice adding reasoning scaffolding to your prompts.

Week 3 — Design a system prompt. Pick a simple assistant use case (a cooking helper, a code reviewer, a writing coach) and write a thorough system prompt. Test it with edge cases. Refine it.

Week 4 — Extend to multi-turn. Build a short conversation flow with at least five turns. Map out what each turn needs to accomplish. Identify where the model gets confused and adjust.

Month 2 and beyond — Explore agentic patterns. Start with simple tool-use examples. Learn how to structure tool descriptions. Build a small workflow that chains two or three prompts together.

Why Prompt Engineering Is a Career-Defining Skill Right Now

We are at an inflection point. Demand for people who can design, evaluate, and optimize prompt systems is growing quickly. Organizations are building AI-powered products across every industry, and the difference between a mediocre AI product and an exceptional one often comes down to the quality of the prompt engineering underneath it.

This is not a niche skill set that will become obsolete. It is a foundational layer of how humans and AI systems collaborate — and that collaboration is only going to deepen over the next decade.

The engineers who build deep fluency now, while the field is still maturing, will be positioned as the architects of the next generation of AI systems. The investment you make in understanding these concepts today compounds over time.

Ready to Go Deeper?

This article has given you the conceptual map. Now it is time to walk the territory.

At Harness Engineering Academy, we have designed a complete learning path for aspiring AI agent engineers — from your very first prompt to deploying production-grade agentic systems. Our courses are built for real-world application, not just theory. Every module includes hands-on exercises, community feedback, and clear milestones you can point to in your portfolio.

Whether you are a developer looking to transition into AI engineering, a product manager who wants to work more effectively with AI teams, or a career changer building your technical foundation, we have a path for you.


Jamie Park is an educator and career coach at Harness Engineering Academy, specializing in helping newcomers and career changers build practical AI engineering skills. Jamie’s tutorials focus on making complex concepts approachable without sacrificing depth.