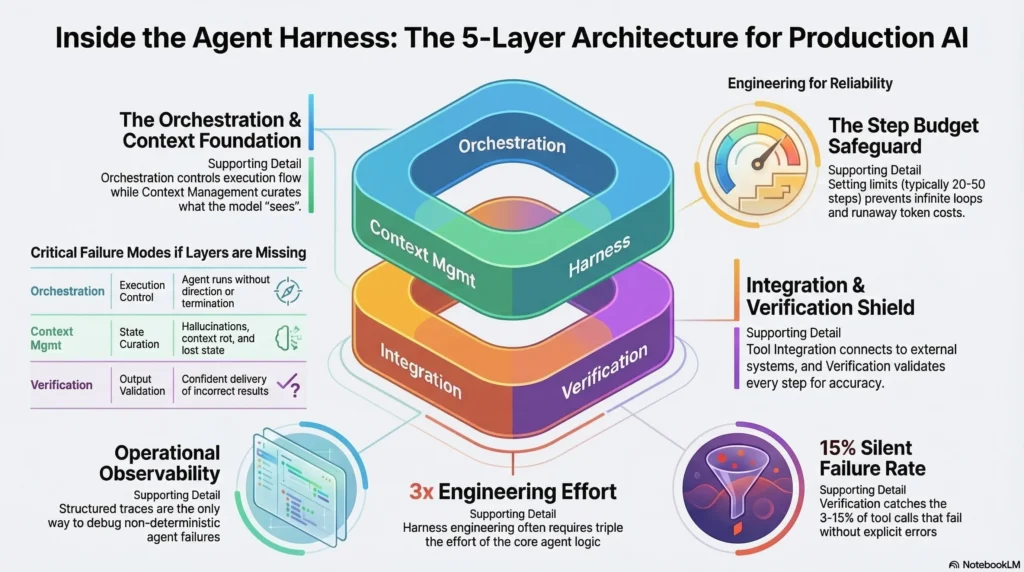

Anthropic defines an agent harness as “an operational structure that enables AI models to work across multiple context windows on extended tasks.” That definition captures the scope but not the engineering. Under the hood, a production agent harness is a five-layer architecture where each layer handles a specific category of problems that the model cannot solve on its own.

This article breaks down the architecture layer by layer. If you have read the complete guide to agent harnesses or the introduction to harness engineering, this goes deeper into how the components fit together and why each layer exists.

The Five-Layer Architecture

A production agent harness has five distinct layers, each solving a different class of problem. Skip any layer and you create a specific, predictable failure mode.

| Layer | Purpose | What Breaks Without It |
|---|---|---|
| 1. Orchestration | Controls agent execution flow | Agent runs without direction or termination |
| 2. Context Management | Curates what the model sees | Hallucination, context rot, lost state |
| 3. Tool Integration | Connects agent to external systems | Tool call failures cascade silently |
| 4. Verification | Validates outputs at each step | Wrong results delivered with confidence |
| 5. Operations | Monitors, controls costs, handles failures | Runaway costs, silent degradation, no debugging |

Layer 1: Orchestration

The orchestration layer decides when to call the model, what to do with its output, and when to stop. This is the control plane for the agent.

Core Components

Execution loop. The fundamental cycle: present context to the model, receive a response, determine whether the response is a final answer or an action to take, execute the action, and loop. The loop needs explicit termination conditions. Without them, agents can loop indefinitely, consuming tokens and producing nothing useful.

Step budget. A hard limit on how many steps the agent can take per task. This prevents infinite loops and bounds cost. Most production systems set step budgets between 20 and 50 steps depending on task complexity.
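
The execution loop and step budget described above fit in a few lines. The following is a minimal Python sketch, not a reference implementation: `ModelResponse`, `RunResult`, `run_agent`, and the `call_model` callable are hypothetical stand-ins for your model client and its response format, and the default of 30 steps is simply a value inside the 20 to 50 range mentioned above.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class ModelResponse:
    # Hypothetical response shape: either a final answer or a single tool call.
    final_answer: Optional[str] = None
    tool_name: Optional[str] = None
    tool_args: Optional[dict] = None


@dataclass
class RunResult:
    status: str            # "completed" or "step_budget_exhausted"
    answer: Optional[str]
    steps: int


def run_agent(call_model: Callable[[List[dict]], ModelResponse],
              tools: Dict[str, Callable[..., str]],
              task: str,
              max_steps: int = 30) -> RunResult:
    context: List[dict] = [{"role": "user", "content": task}]
    for step in range(1, max_steps + 1):
        response = call_model(context)             # present context, receive a response
        if response.final_answer is not None:      # termination condition: final answer
            return RunResult("completed", response.final_answer, step)
        tool = tools[response.tool_name]           # otherwise it is an action to execute
        result = tool(**(response.tool_args or {}))
        context.append({"role": "tool", "name": response.tool_name, "content": result})
    # Termination condition: the step budget is exhausted, so stop instead of looping forever.
    return RunResult("step_budget_exhausted", None, max_steps)
```

Passing a fake model that always requests a tool, for example `lambda ctx: ModelResponse(tool_name="echo", tool_args={"x": "hi"})` with `tools={"echo": lambda x: x}` and `max_steps=5`, returns `status="step_budget_exhausted"` after five steps instead of looping forever.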

Decision router. After each model response, the router determines the next action: call a tool, ask a clarifying question, deliver a final answer, or escalate to a human. In multi-agent systems, the router also decides which specialized agent should handle the current subtask.
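
A decision router can be a small, deterministic function over the model's parsed response. This sketch assumes a hypothetical response dict with `tool_call`, `subtask_type`, and `needs_clarification` keys, a hypothetical `WORKERS` mapping for the multi-agent case, and escalation after three consecutive failures; real response schemas and escalation rules will differ.

```python
from enum import Enum
from typing import Dict, Optional, Tuple


class Route(Enum):
    DELEGATE = "delegate"
    CALL_TOOL = "call_tool"
    ASK_USER = "ask_user"
    FINAL_ANSWER = "final_answer"
    ESCALATE = "escalate"


# Hypothetical mapping from subtask type to a specialized agent (multi-agent systems only).
WORKERS: Dict[str, str] = {"sql": "data_agent", "code": "coding_agent"}


def route(response: dict, consecutive_failures: int,
          max_failures: int = 3) -> Tuple[Route, Optional[str]]:
    """Return the next action and, when delegating, the worker that should handle it."""
    if consecutive_failures >= max_failures:
        return Route.ESCALATE, None                 # hand off to a human
    subtask = response.get("subtask_type")
    if subtask in WORKERS:
        return Route.DELEGATE, WORKERS[subtask]
    if response.get("tool_call"):
        return Route.CALL_TOOL, None
    if response.get("needs_clarification"):
        return Route.ASK_USER, None
    return Route.FINAL_ANSWER, None
```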

State machine. Production agents operate as state machines with defined transitions. Planning, executing, evaluating, recovering, and completing are distinct states with different context requirements and different available actions. State machines prevent agents from taking actions that are inappropriate for their current phase.
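
One way to encode these phases is an explicit transition table plus a per-state allowlist of actions. The state names come from the paragraph above; the specific transitions and tool names (`read_file`, `search`, `write_file`, `run_tests`) are assumptions chosen for illustration.

```python
from enum import Enum, auto
from typing import Dict, Set


class State(Enum):
    PLANNING = auto()
    EXECUTING = auto()
    EVALUATING = auto()
    RECOVERING = auto()
    COMPLETING = auto()


# Legal transitions (an assumed policy; adapt to your workflow).
TRANSITIONS: Dict[State, Set[State]] = {
    State.PLANNING: {State.EXECUTING},
    State.EXECUTING: {State.EVALUATING, State.RECOVERING},
    State.EVALUATING: {State.EXECUTING, State.RECOVERING, State.COMPLETING},
    State.RECOVERING: {State.PLANNING, State.EXECUTING},
    State.COMPLETING: set(),
}

# Actions permitted in each state (hypothetical tool names).
ALLOWED_ACTIONS: Dict[State, Set[str]] = {
    State.PLANNING: {"read_file", "search"},
    State.EXECUTING: {"read_file", "search", "write_file", "run_tests"},
    State.EVALUATING: {"run_tests"},
    State.RECOVERING: {"read_file"},
    State.COMPLETING: set(),
}


class AgentStateMachine:
    def __init__(self) -> None:
        self.state = State.PLANNING

    def transition(self, new_state: State) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"illegal transition {self.state.name} -> {new_state.name}")
        self.state = new_state

    def is_allowed(self, action: str) -> bool:
        # The harness rejects tool calls that do not fit the current phase.
        return action in ALLOWED_ACTIONS[self.state]
```

Because `is_allowed("write_file")` returns `False` while planning, a model that tries to write files before it has a plan is stopped by the harness rather than trusted to behave.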

Orchestration Patterns

Single-agent loop. The simplest pattern. One model, one execution loop, one set of tools. Suitable for most production use cases. Always start here.

Orchestrator-workers. A central agent delegates subtasks to specialized agents. The orchestrator manages the overall plan while workers handle specific domains. This pattern is effective when tasks require distinct expertise, but it adds complexity. Use at most two levels of hierarchy; deeper nesting makes debugging nearly impossible.

Pipeline. Sequential agents where each processes the output of the previous one. Useful for tasks with clear stages like research, analysis, and writing. Simpler to debug than orchestrator-workers because the flow is linear.
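
A pipeline is essentially function composition that records each stage's output. In this sketch each stage is a plain callable standing in for an agent; the stage names mentioned below are illustrative.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[str], str]]


def run_pipeline(stages: List[Stage], task: str) -> Tuple[str, List[Tuple[str, str]]]:
    """Feed each stage the previous stage's output and keep every intermediate result."""
    trace: List[Tuple[str, str]] = []
    data = task
    for name, stage in stages:
        data = stage(data)
        trace.append((name, data))   # linear trace: a bad output points to exactly one stage
    return data, trace
```

With stages named `research`, `analysis`, and `writing`, the returned trace lists each stage's output in order, so a bad final result can be traced back to the first stage whose output looks wrong.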

Layer 2: Context Management

The context management layer curates what the model sees at each step. This is where context engineering becomes infrastructure.

Core Components

System prompt assembly. The base instructions for the model, including its role, constraints, available tools, and output format requirements. In production harnesses, system prompts are assembled dynamically based on the current task type, available tools, and execution state.

Conversation history management. As agents operate over multiple turns, the conversation history grows. Without active management, old, irrelevant turns crowd out important context. Production harnesses implement sliding windows, summarization, or selective retention to keep the history relevant and within token budgets.
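
A sliding window is the simplest of these strategies. This sketch always keeps system messages and then keeps the newest turns that fit a token budget; `count_tokens` is a deliberately crude word counter standing in for the model's real tokenizer, and the `role`/`content` message format is an assumption.

```python
from typing import Dict, List


def count_tokens(text: str) -> int:
    # Crude stand-in that counts whitespace-separated words; use your model's tokenizer in practice.
    return len(text.split())


def trim_history(messages: List[Dict[str, str]], budget: int) -> List[Dict[str, str]]:
    """Sliding window: keep system messages, then the newest turns that fit in `budget`."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    used = sum(count_tokens(m["content"]) for m in system)
    kept: List[Dict[str, str]] = []
    for m in reversed(turns):                      # walk from newest to oldest
        cost = count_tokens(m["content"])
        if used + cost > budget:
            break                                  # older turns are dropped (or summarized)
        kept.append(m)
        used += cost
    return system + list(reversed(kept))           # restore chronological order
```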

Retrieval pipeline. When the agent needs information beyond what is in the conversation, the retrieval pipeline fetches it. Sources include RAG (retrieval-augmented generation, typically backed by a vector database), file system access, API queries, and database lookups. The pipeline handles not just retrieval but also formatting, deduplication, and relevance scoring.

Cross-session state. When a task spans multiple context windows, the harness manages continuity. Progress files document completed work and pending tasks. Checkpoint mechanisms save the agent’s state at defined points. When a new session begins, the harness reconstructs context from these artifacts rather than starting from scratch.
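
A progress file can be as simple as a JSON document that is rewritten at each checkpoint and turned into a resume prompt at the start of the next session. The file layout, the function names `save_progress` and `resume_prompt`, and the prompt wording are hypothetical.

```python
import json
from pathlib import Path
from typing import List


def save_progress(path: Path, completed: List[str], pending: List[str], notes: str = "") -> None:
    """Write the progress file via a temp file and rename, so a crash mid-write keeps the old checkpoint."""
    tmp = path.with_name(path.name + ".tmp")
    tmp.write_text(json.dumps({"completed": completed, "pending": pending, "notes": notes}, indent=2))
    tmp.replace(path)


def resume_prompt(path: Path) -> str:
    """Rebuild starting context for a new session from the progress file."""
    if not path.exists():
        return "No prior progress found. Start the task from the beginning."
    state = json.loads(path.read_text())
    return (
        "You are resuming a task from a previous session.\n"
        f"Completed: {', '.join(state['completed']) or 'none'}\n"
        f"Pending: {', '.join(state['pending']) or 'none'}\n"
        f"Notes: {state['notes'] or 'none'}"
    )
```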

Token budget allocation. Each component of the context window has a token budget: system prompt, conversation history, retrieved documents, tool results, and the current query. The harness monitors actual consumption against these budgets and takes action (summarizing, truncating, or alerting) when components exceed their allocation.
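
Per-component budgets can be checked against a lookup table. The 128k-token window, the individual allocations, and the overflow action assigned to each component are illustrative assumptions, not recommendations.

```python
from dataclasses import dataclass
from typing import Dict, List

# Illustrative split of a hypothetical 128k-token context window.
ALLOCATION: Dict[str, int] = {
    "system_prompt": 4_000,
    "history": 40_000,
    "retrieved_docs": 50_000,
    "tool_results": 24_000,
    "query": 10_000,
}

# Assumed policy for what to do when each component overruns its allocation.
OVERFLOW_ACTION: Dict[str, str] = {
    "system_prompt": "alert",
    "history": "summarize",
    "retrieved_docs": "truncate",
    "tool_results": "truncate",
    "query": "alert",
}


@dataclass
class BudgetCheck:
    component: str
    used: int
    allocated: int
    action: str   # "ok", "summarize", "truncate", or "alert"


def check_budgets(usage: Dict[str, int]) -> List[BudgetCheck]:
    checks = []
    for component, allocated in ALLOCATION.items():
        used = usage.get(component, 0)
        action = "ok" if used <= allocated else OVERFLOW_ACTION[component]
        checks.append(BudgetCheck(component, used, allocated, action))
    return checks
```

For example, `check_budgets({"history": 52_000})` reports `summarize` for the history component and `ok` for every other component.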

Layer 3: Tool Integration

Agents interact with the world through tools. The tool integration layer makes these interactions reliable.

Core Components

Tool definitions. Each tool needs a precise description that tells the model what it does, what parameters it accepts, and what it returns. Poor tool descriptions are one of the most common causes of agent failures. The description is the model’s only interface to the tool. If it is ambiguous, the model will call the tool incorrectly.

Parameter validation. Before executing a tool call, the harness validates that the model provided all required parameters in the correct format. This catches malformed tool calls before they hit the external API.
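
The sketch below pairs a tool definition with the validation step. The `search_orders` tool is hypothetical, its `parameters` block loosely follows the JSON Schema style that common model APIs use for tool inputs, and the hand-written `validate_args` checks only required fields, unknown fields, and primitive types; a production harness would more likely use a full JSON Schema validator such as the `jsonschema` package.

```python
from typing import Dict, List

# Hypothetical tool: the description says what it does, what it accepts, and what it returns.
SEARCH_ORDERS_TOOL: Dict = {
    "name": "search_orders",
    "description": "Look up a customer's orders by customer ID. Returns at most `limit` orders, newest first.",
    "parameters": {
        "type": "object",
        "properties": {
            "customer_id": {"type": "string"},
            "limit": {"type": "integer"},
        },
        "required": ["customer_id"],
    },
}

_PY_TYPES = {"string": str, "integer": int, "number": (int, float),
             "boolean": bool, "object": dict, "array": list}


def validate_args(tool: Dict, args: Dict) -> List[str]:
    """Return a list of problems; an empty list means the call may proceed."""
    schema = tool["parameters"]
    errors = [f"missing required parameter '{p}'" for p in schema.get("required", []) if p not in args]
    for name, value in args.items():
        spec = schema["properties"].get(name)
        if spec is None:
            errors.append(f"unknown parameter '{name}'")
            continue
        # bool is a subclass of int in Python, so reject it explicitly for numeric fields.
        if isinstance(value, bool) and spec["type"] in ("integer", "number"):
            errors.append(f"'{name}' should be {spec['type']}, got boolean")
        elif not isinstance(value, _PY_TYPES[spec["type"]]):
            errors.append(f"'{name}' should be {spec['type']}, got {type(value).__name__}")
    return errors
```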

Execution sandbox. Tool calls that interact with external systems run in a controlled environment. Sandboxing limits what the tool can access, prevents the agent from modifying systems it should not, and contains failures. For coding agents, this means running generated code in isolated containers. For data agents, this means read-only database access unless explicitly authorized.
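
Container isolation for coding agents depends on your infrastructure, but the read-only rule for data agents can be shown with Python's built-in `sqlite3` module, whose URI filenames accept `mode=ro`. The `run_agent_query` helper and its result format are hypothetical; the point is that a write attempt becomes a contained error result rather than a modified database.

```python
import sqlite3
from pathlib import Path


def open_readonly(db_path: str) -> sqlite3.Connection:
    # SQLite URI filenames support mode=ro; any write through this connection fails.
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    return sqlite3.connect(uri, uri=True)


def run_agent_query(db_path: str, sql: str) -> dict:
    """Execute agent-generated SQL on a read-only connection and contain any failure."""
    conn = open_readonly(db_path)
    try:
        return {"ok": True, "rows": conn.execute(sql).fetchall()}
    except sqlite3.OperationalError as exc:
        # The agent receives an error result; the database is untouched.
        return {"ok": False, "error": str(exc)}
    finally:
        conn.close()
```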

Response normalization. External APIs return data in different formats. The tool integration layer normalizes responses into a consistent structure that the model can process. This includes error standardization: whether a tool returns a 500 error, a timeout, or malformed JSON, the agent sees a consistent error format with actionable information.
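
Error standardization can be implemented as a single wrapper that maps every outcome to one result type. This sketch assumes a hypothetical raw tool callable that returns an `(http_status, body)` pair or raises `TimeoutError`; the `ToolResult` fields and error categories are illustrative.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Optional, Tuple


@dataclass
class ToolResult:
    ok: bool
    data: Any = None
    error_type: Optional[str] = None   # "timeout", "server_error", "client_error", "bad_response"
    message: str = ""                  # actionable text the model will read
    retryable: bool = False


def normalize(raw_call: Callable[[], Tuple[int, str]]) -> ToolResult:
    """Run a raw tool call and map every possible outcome to the same ToolResult shape."""
    try:
        status, body = raw_call()
    except TimeoutError:
        return ToolResult(False, error_type="timeout",
                          message="The tool did not respond in time; retrying may help.", retryable=True)
    if status >= 500:
        return ToolResult(False, error_type="server_error",
                          message=f"The service returned HTTP {status}; retry later.", retryable=True)
    if status >= 400:
        return ToolResult(False, error_type="client_error",
                          message=f"The request was rejected with HTTP {status}; check the parameters.")
    try:
        return ToolResult(True, data=json.loads(body))
    except json.JSONDecodeError:
        return ToolResult(False, error_type="bad_response", message="The tool returned malformed JSON.")
```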

Rate limiting and queuing. External APIs have rate limits. The harness manages call frequency, implements queuing for burst traffic, and handles rate limit errors with appropriate backoff.
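
Backoff for rate-limit errors is commonly implemented as exponential backoff with jitter. In this sketch `RateLimitError` is a hypothetical exception your tool client would raise on HTTP 429, the delays use the "full jitter" variant (a random wait between zero and the capped exponential delay), and `sleep` is injectable so the logic can be tested without waiting. Queuing for burst traffic is not shown.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical exception a tool client raises on HTTP 429."""


def call_with_backoff(fn: Callable[[], T], max_retries: int = 5, base_delay: float = 0.5,
                      max_delay: float = 30.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries:
                raise                      # give up and let the harness decide what to do next
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))  # full jitter spreads out retries from many agents
    raise AssertionError("unreachable")
```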

Layer 4: Verification

The verification layer validates agent behavior at every step. This is the layer that makes the difference between a demo and a production system.

Core Components

Tool call verification. After every tool call, the harness validates the response: did it succeed? Does it match the expected schema? Are required fields present? Are values within reasonable bounds? This catches the 3-15% of tool calls that fail silently.
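
Each of the four questions above maps to one check. The sketch assumes tool responses are dicts with a hypothetical `status` field; the required-field types and numeric bounds are supplied by the caller for each tool.

```python
from typing import Any, Dict, List, Optional, Tuple


def verify_tool_response(resp: Any, required: Dict[str, type],
                         bounds: Optional[Dict[str, Tuple[float, float]]] = None) -> List[str]:
    """Return verification failures for one tool response; an empty list means it passed."""
    if not isinstance(resp, dict):                                   # schema: must be an object
        return [f"expected an object, got {type(resp).__name__}"]
    problems: List[str] = []
    if resp.get("status") != "success":                              # did it succeed?
        problems.append(f"tool reported status {resp.get('status')!r}")
    for key, expected in required.items():                           # required fields and types
        if key not in resp:
            problems.append(f"missing field '{key}'")
        elif not isinstance(resp[key], expected):
            problems.append(f"field '{key}' is {type(resp[key]).__name__}, expected {expected.__name__}")
    for key, (low, high) in (bounds or {}).items():                  # values within bounds
        value = resp.get(key)
        if isinstance(value, (int, float)) and not low <= value <= high:
            problems.append(f"field '{key}'={value} outside [{low}, {high}]")
    return problems
```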

Output verification. Before delivering results to users, the harness applies quality checks: is the output relevant to the query? Does it contain hallucinated claims? Does it meet format requirements? Model-based graders handle quality assessment; code-based graders handle structural validation.
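
The split between code-based and model-based graders might look like this: cheap structural checks run first, and the model grader runs only if they pass. `model_grader` is a hypothetical callable returning a score between 0 and 1 (for example, a call to a judge model), and the JSON output format and 0.7 threshold are assumptions.

```python
import json
from typing import Callable, List, Set, Tuple


def code_grader(output: str, required_keys: Set[str]) -> List[str]:
    """Structural validation: the output must be a JSON object with the required keys."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(parsed, dict):
        return ["output must be a JSON object"]
    return [f"missing key '{k}'" for k in sorted(required_keys - set(parsed))]


def verify_output(output: str, query: str, required_keys: Set[str],
                  model_grader: Callable[[str, str], float],
                  min_score: float = 0.7) -> Tuple[bool, List[str]]:
    problems = code_grader(output, required_keys)    # deterministic and cheap
    if problems:
        return False, problems
    score = model_grader(query, output)              # quality assessment, e.g. relevance
    if score < min_score:
        return False, [f"quality score {score:.2f} below {min_score}"]
    return True, []
```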

Trajectory evaluation. Beyond individual steps, the harness evaluates the full sequence of actions. Was the reasoning path efficient? Did the agent use appropriate tools? Did it recover correctly from errors? Trajectory evaluation catches failure patterns that step-level checks miss.
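
Trajectory checks operate on the whole list of steps rather than on a single response. This sketch flags the patterns named above (excess steps, unexpected tools, and failure to recover) plus identical calls repeated three or more times, a common sign of a stuck agent; the step format and thresholds are assumptions.

```python
from collections import Counter
from typing import Dict, List, Set


def evaluate_trajectory(steps: List[Dict], allowed_tools: Set[str], max_steps: int = 20) -> List[str]:
    """Flag problems visible only across the whole run. Each step: {'tool', 'args', 'ok'}."""
    findings: List[str] = []
    if len(steps) > max_steps:                                        # efficiency
        findings.append(f"inefficient: {len(steps)} steps (limit {max_steps})")
    unexpected = sorted({s["tool"] for s in steps} - allowed_tools)
    if unexpected:                                                    # appropriate tools
        findings.append(f"unexpected tools: {unexpected}")
    calls = Counter((s["tool"], repr(sorted(s["args"].items()))) for s in steps)
    looping = sorted(tool for (tool, _), n in calls.items() if n >= 3)
    if looping:                                                       # stuck in a loop
        findings.append(f"repeated identical calls: {looping}")
    failed = [i for i, s in enumerate(steps) if not s["ok"]]
    if failed and not any(s["ok"] for s in steps[failed[-1] + 1:]):   # error recovery
        findings.append("no successful step after the last failure")
    return findings
```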

Confidence thresholds. The harness monitors signals that indicate agent uncertainty: multiple tool call retries, contradictory reasoning steps, or outputs that fail soft quality checks. When confidence drops below a threshold, the harness routes to human review rather than delivering a questionable result.
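
A minimal confidence gate turns the uncertainty signals into a score and compares it with a threshold. The penalty weights and the 0.6 threshold below are hypothetical and would need calibration against real outcomes before anyone relied on them.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StepSignals:
    retries: int = 0               # tool calls that had to be retried
    contradictions: int = 0        # reasoning steps that contradict earlier ones
    soft_check_failures: int = 0   # non-blocking quality checks that failed


# Hypothetical penalty weights per signal.
WEIGHTS = {"retries": 0.05, "contradictions": 0.2, "soft_check_failures": 0.1}


def confidence(signals: List[StepSignals]) -> float:
    penalty = sum(
        WEIGHTS["retries"] * s.retries
        + WEIGHTS["contradictions"] * s.contradictions
        + WEIGHTS["soft_check_failures"] * s.soft_check_failures
        for s in signals
    )
    return max(0.0, 1.0 - penalty)


def deliver_or_escalate(result: str, signals: List[StepSignals], threshold: float = 0.6) -> dict:
    score = confidence(signals)
    if score < threshold:
        return {"action": "human_review", "confidence": score, "draft": result}
    return {"action": "deliver", "confidence": score, "result": result}
```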

Retry and fallback logic. When a step fails verification, the harness determines the response: retry with modified context, fall back to an alternative approach, or escalate. This logic is deterministic, not left to the model’s judgment.
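
Keeping this logic deterministic can be as simple as a fixed policy function. The failure-type labels, the retry limit, and the order of preference (retry, then fallback, then escalate) are assumptions for illustration.

```python
from enum import Enum


class Decision(Enum):
    RETRY_WITH_MODIFIED_CONTEXT = "retry"
    FALLBACK = "fallback"
    ESCALATE = "escalate"


# Failure types worth retrying (hypothetical labels produced by the verification layer).
RETRYABLE = {"schema_error", "missing_field", "transient_tool_error"}


def on_verification_failure(failure_type: str, attempt: int, has_fallback: bool,
                            max_retries: int = 2) -> Decision:
    """A fixed policy: the same inputs always produce the same decision, with no model involved."""
    if failure_type in RETRYABLE and attempt < max_retries:
        return Decision.RETRY_WITH_MODIFIED_CONTEXT
    if has_fallback:
        return Decision.FALLBACK
    return Decision.ESCALATE
```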

Layer 5: Operations

The operations layer provides the infrastructure for running agents in production: monitoring, cost control, and failure management.

Core Components

Observability. Every agent step is instrumented with structured traces that capture: what the model was asked, what it responded, which tools were called, what they returned, and how long each step took. When an agent fails in production, these traces are how you debug it. Without observability, debugging non-deterministic systems is essentially impossible.
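
A minimal tracer can record one structured span per step with a shared trace ID, the step's inputs and outputs, its status, and its duration. This is a self-contained sketch using only the standard library; in practice teams often emit these spans through an established tracing stack such as OpenTelemetry rather than a hand-rolled class.

```python
import json
import time
import uuid
from contextlib import contextmanager
from typing import Any, Dict, Iterator, List


class Tracer:
    """Collects one structured span per agent step, all sharing a trace ID."""

    def __init__(self) -> None:
        self.trace_id = uuid.uuid4().hex
        self.spans: List[Dict[str, Any]] = []

    @contextmanager
    def step(self, kind: str, **attrs: Any) -> Iterator[Dict[str, Any]]:
        span: Dict[str, Any] = {"trace_id": self.trace_id, "step": len(self.spans) + 1,
                                "kind": kind, **attrs}
        start = time.perf_counter()
        try:
            yield span                      # the caller records the response or tool result here
            span["status"] = "ok"
        except Exception as exc:
            span["status"] = "error"
            span["error"] = repr(exc)
            raise
        finally:
            span["duration_ms"] = round((time.perf_counter() - start) * 1000, 2)
            self.spans.append(span)

    def export_jsonl(self) -> str:
        # One JSON object per line, a format most log pipelines can ingest.
        return "\n".join(json.dumps(s, default=str) for s in self.spans)
```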

Cost controls. Token budgets at the request level, the session level, and the daily level prevent runaway spending. Circuit breakers halt execution when costs exceed thresholds. Real-time spending monitors alert operators before budgets are exhausted. Anthropic has reported that its multi-agent research system used roughly 15x the tokens of a standard chat interaction (versus about 4x for a single agent), which makes cost controls essential at scale.
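
A cost circuit breaker can be a small guard object consulted before each request. The dollar limits and the 80% alert level below are placeholders, and the sketch assumes each request's cost is known or estimated before it is sent.

```python
from dataclasses import dataclass, field
from typing import List


class BudgetExceeded(Exception):
    """Raised to halt the agent: the circuit breaker has tripped."""


@dataclass
class CostGuard:
    # Hypothetical USD limits at the request, session, and daily levels.
    per_request: float = 0.50
    per_session: float = 5.00
    per_day: float = 200.00
    alert_fraction: float = 0.8        # warn operators at 80% of the daily budget
    session_spent: float = 0.0
    day_spent: float = 0.0
    alerts: List[str] = field(default_factory=list)

    def charge(self, cost: float) -> None:
        """Record one request's cost, refusing it if any limit would be exceeded."""
        if cost > self.per_request:
            raise BudgetExceeded(f"request cost ${cost:.2f} exceeds ${self.per_request:.2f}")
        if self.session_spent + cost > self.per_session:
            raise BudgetExceeded("session budget exhausted")
        if self.day_spent + cost > self.per_day:
            raise BudgetExceeded("daily budget exhausted")
        self.session_spent += cost
        self.day_spent += cost
        if self.day_spent >= self.alert_fraction * self.per_day:
            self.alerts.append(f"daily spend ${self.day_spent:.2f} of ${self.per_day:.2f}")
```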

Graceful degradation. When the model produces low-confidence output, when a critical tool is down, or when the task exceeds the agent’s capabilities, the system degrades gracefully rather than failing silently. This means: escalating to a human operator, falling back to a deterministic workflow, or delivering a partial result with a clear explanation of what could not be completed.

Evaluation pipeline. Continuous evaluation runs against production traffic. A percentage of interactions are sampled, evaluated asynchronously using golden datasets and model-based graders, and scored against quality thresholds. When scores drift, alerts trigger investigation.
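
The sample, score, and alert steps might be wired together as below. The sampling rate, the 0.05 tolerance, and the `grader` callable (which could compare against a golden dataset or call a model-based grader) are assumptions; a real pipeline would run the scoring in a background job rather than inline.

```python
import random
from statistics import mean
from typing import Callable, List, Optional


def sample(interactions: List[dict], rate: float, seed: Optional[int] = None) -> List[dict]:
    """Keep roughly `rate` of interactions (e.g. 0.05 for 5%) for asynchronous scoring."""
    rng = random.Random(seed)
    return [x for x in interactions if rng.random() < rate]


def score_batch(batch: List[dict], grader: Callable[[dict], float]) -> List[float]:
    # In production this would run off the request path, e.g. in a scheduled job.
    return [grader(x) for x in batch]


def drift_alert(scores: List[float], baseline: float, tolerance: float = 0.05) -> Optional[str]:
    """Return an alert message when the mean score falls below baseline - tolerance."""
    if not scores:
        return None
    current = mean(scores)
    if current < baseline - tolerance:
        return f"quality drift: mean {current:.2f} vs baseline {baseline:.2f}"
    return None
```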

Alerting and incident response. Production agents need the same operational discipline as any production system: alerting on anomalous behavior, runbooks for common failure modes, and incident response procedures. The difference is that agent failures are often non-deterministic and harder to reproduce, requiring trajectory analysis rather than traditional log analysis.

How the Layers Interact

The layers are not independent. They form an integrated system where each layer depends on the others.

The orchestration layer calls the model. The context management layer prepares what the model sees. The model responds with either a final answer or a tool call. The tool integration layer executes the tool call and normalizes the response. The verification layer validates the response. If validation fails, the orchestration layer retries or escalates. Throughout the entire process, the operations layer monitors, measures, and controls.

A request flows through all five layers on every single step. For a 20-step agent task, this means 20 orchestration decisions, 20 context assemblies, potentially 20 tool calls with validation, 20 verification checks, and continuous operational monitoring. The harness does more work than the model on every task.

Frequently Asked Questions

Do I need all five layers for a simple agent?

For a demo or internal tool, you can skip layers 4 and 5. For any agent that serves customers or handles important tasks, you need all five. The layers you skip are the layers that will fail you in production.

How much engineering effort does the harness require?

OpenAI’s Codex team reported that harness engineering constituted the majority of their development effort. Anthropic spent more time optimizing tools than prompts for their SWE-bench agent. Plan for the harness to require 2-3x the engineering effort of the agent logic itself.

Can I use a framework instead of building the harness myself?

Frameworks like LangChain, LangGraph, and CrewAI provide scaffolding for layers 1-3. Layers 4 and 5 typically require custom implementation because verification criteria and operational requirements are specific to your use case. Use frameworks for orchestration and tool integration. Build verification and operations yourself.

How does the harness relate to the model?

The model generates text. The harness handles everything else. In a well-designed system, the model is a component of the harness, not the other way around. The harness decides when to call the model, what context to provide, how to validate the output, and what to do when the output fails. The model is powerful but unreliable. The harness provides the reliability.

The Architecture in Practice

The five-layer harness architecture is not theoretical. It is the structure that production teams converge on when they move from demos to reliable systems. The specific implementations vary, but the layers are consistent because the problems they solve are universal.

Start with the orchestration layer: a simple loop with a step budget. Add context management for your specific use case. Integrate tools with validation. Build verification incrementally, starting with tool call validation and expanding to output and trajectory evaluation. Add operations last, starting with basic logging and cost controls.

The production deployment guide covers how to take this architecture from staging to production.
