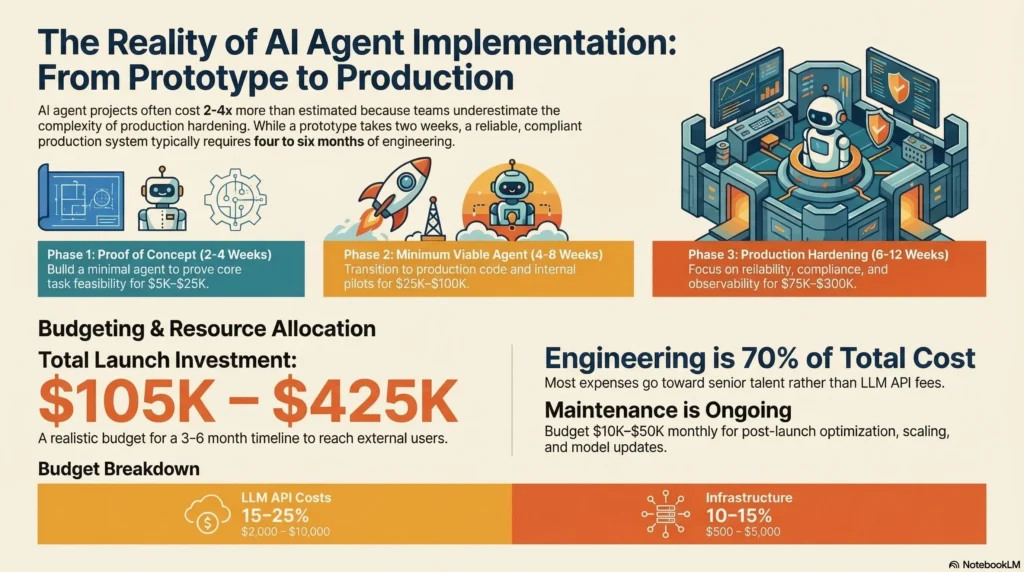

Most AI agent projects take 2-3x longer and cost 2-4x more than the initial estimate. Not because the technology is harder than expected, but because teams underestimate the gap between a working prototype and a production system that handles real users, real failures, and real compliance requirements.

The prototype works in two weeks. The production system takes four months. That’s not a failure of execution; it’s the nature of building reliable infrastructure for non-deterministic systems.

This guide provides realistic timelines and cost breakdowns based on production agent deployments across customer support, content generation, data processing, and internal automation use cases. Use it to set expectations with stakeholders before the project starts, not after the first deadline slips.

The four phases of agent implementation

Every successful agent deployment follows the same four-phase pattern, whether it takes two months or twelve.

Phase 1: Proof of concept (2-4 weeks)

Goal: Prove the agent can complete the core task with acceptable quality.

What you’re building: A minimal agent that handles the happy path. No error handling, no cost controls, no production infrastructure. Just the agent, a prompt, the necessary tools, and a handful of test cases.

Activities:

– Define the agent’s scope: what it does and doesn’t do

– Choose an LLM provider and model

– Build basic tool integrations (3-5 tools maximum)

– Write the system prompt and test against 20-30 examples

– Demonstrate to stakeholders with curated scenarios

Cost range: $5,000 – $25,000 (primarily engineering time, minimal LLM costs)

Team: 1-2 engineers, 1 domain expert (part-time)

Success criteria: The agent completes the core task in 80%+ of curated test cases. Stakeholders see enough potential to fund the next phase. Technical feasibility is confirmed.

Common mistakes: Spending too long on the POC. Optimizing prompts before validating the approach. Building production infrastructure too early. The POC should be disposable code that proves the concept, not the foundation of the production system.

Phase 2: Minimum viable agent (4-8 weeks)

Goal: Build an agent that can handle real inputs from a small group of internal users.

What you’re building: The core agent logic plus basic reliability patterns. Error handling for the most common failure modes. Simple logging and cost tracking. An evaluation dataset with 100+ examples.

Activities:

– Rebuild the agent with production-quality code (the POC is usually thrown away)

– Implement retry logic for API failures

– Add basic output validation (schema checks, content filters)

– Build an evaluation dataset of 100+ examples from real scenarios

– Set up cost tracking and per-interaction logging

– Run an internal pilot with 5-10 users

– Iterate on prompts and tools based on pilot feedback
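The retry item in the list above can be sketched as follows. This is a minimal illustration under stated assumptions, not a prescribed implementation: `TransientAPIError` and `call_with_retry` are hypothetical names, and a real integration would catch whichever retryable exceptions your provider's SDK raises.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientAPIError(Exception):
    """Hypothetical stand-in for a provider's retryable failures (timeouts, 429s, 5xx)."""


def call_with_retry(
    fn: Callable[[], T],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying transient failures with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientAPIError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # The delay doubles on each attempt; jitter spreads out simultaneous retries.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError("unreachable")
```

Only errors known to be transient are retried here. Retrying every exception would hide bugs and can multiply LLM costs, which is why the logging and cost tracking items in the same list matter.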

Cost range: $25,000 – $100,000 (engineering time + LLM costs for testing)

Team: 2-3 engineers, 1 domain expert, 1 product manager (part-time)

Success criteria: Internal users complete real tasks with the agent. Task completion rate exceeds 70%. No critical failures (data loss, security breaches, uncontrolled costs). Failure modes are documented and prioritized.

Common mistakes: Skipping the internal pilot and going straight to customers. Not building evaluation infrastructure. Under-investing in logging (you need data from this phase to make good decisions in the next phase).

Phase 3: Production hardening (6-12 weeks)

Goal: Make the agent reliable enough for external users.

What you’re building: The full harness layer. Comprehensive error handling, cost controls, observability, human-in-the-loop escalation, and compliance infrastructure. This is where most of the engineering investment goes.

Activities:

– Implement circuit breakers and graceful degradation

– Build cost controls: per-user budgets, model tiering, caching

– Add full observability: distributed tracing, metrics dashboards, alerting

– Implement compliance requirements: audit logging, PII handling, data retention

– Expand evaluation dataset to 300-500 examples

– Build CI/CD pipeline with automated evaluation on every change

– Set up monitoring and on-call procedures

– Conduct security review and penetration testing

– Run a limited external beta (50-100 users)
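To make the first item in this list concrete, here is a minimal circuit breaker with a fallback for graceful degradation. It is a sketch under assumptions: the class name, the thresholds, and the injected `clock` are illustrative choices, and production systems often rely on an existing resilience library instead.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing dependency for a cool-down period."""

    def __init__(
        self,
        failure_threshold: int = 5,
        reset_after: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, fn: Callable[[], T], fallback: Callable[[], T]) -> T:
        # While open, skip the dependency until the cool-down has elapsed.
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()
            # Cool-down over: let one trial call through (half-open state).
        try:
            result = fn()
        except Exception:  # broad on purpose in this sketch; narrow it in real code
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            return fallback()
        # A success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

The `fallback` is where graceful degradation lives: a cached answer, a simpler model, or a handoff to a human, depending on the use case.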

Cost range: $75,000 – $300,000 (heavy engineering investment + infrastructure costs)

Team: 3-5 engineers, 1 domain expert, 1 product manager, 1 ops/SRE engineer

Success criteria: Task completion rate exceeds 85% with external users. No critical failures during beta. Cost per interaction is within budget. Observability is sufficient to debug production issues. Compliance requirements are met.
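A completion-rate target like this is easiest to enforce when the CI pipeline computes it on every change. The sketch below assumes a hypothetical `EvalCase` shape and a caller-supplied `grade` function. Real graders are usually richer than an equality check, so treat this as an outline of the gate rather than a full evaluation harness.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    expected: str


def completion_rate(
    agent: Callable[[str], str],
    cases: Iterable[EvalCase],
    grade: Callable[[str, str], bool],
) -> float:
    """Fraction of cases where the grader accepts the agent's output."""
    results = [grade(agent(case.prompt), case.expected) for case in cases]
    if not results:
        raise ValueError("evaluation dataset is empty")
    return sum(results) / len(results)


def passes_gate(rate: float, threshold: float = 0.85) -> bool:
    """True when the measured rate exceeds the release threshold."""
    return rate > threshold
```

In a pipeline, a failing gate would block the merge or deploy. The 0.85 default mirrors the Phase 3 target, and an earlier phase could pass a lower threshold.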

Common mistakes: Underestimating this phase. Treating production hardening as “adding a few retries.” The reliability, cost, observability, and compliance work is genuinely complex. Teams that rush this phase pay for it with production incidents.

Phase 4: Scale and optimize (ongoing)

Goal: Improve quality, reduce costs, and handle increasing traffic.

What you’re building: Optimization layers, advanced features, and capacity planning for growth.

Activities:

– Model tiering optimization (route more traffic to cheaper models)

– Advanced caching strategies (semantic caching, conversation caching)

– Context engineering improvements (selective retrieval, token compression)

– Multi-agent coordination for complex workflows

– A/B testing prompt variations

– Regular evaluation dataset updates from production failures

– Capacity planning for traffic growth

– Feature expansion based on user feedback
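One possible shape for model tiering is a cascade: try the cheapest model first and escalate only when its output fails a quality check. The sketch below is an assumption about how this could be done, not the only approach. `answer_with_tiers`, the tier names, and `is_acceptable` are hypothetical, and each model is represented as a plain function from prompt to text.

```python
from typing import Callable, Sequence, Tuple

Model = Callable[[str], str]


def answer_with_tiers(
    prompt: str,
    tiers: Sequence[Tuple[str, Model]],
    is_acceptable: Callable[[str], bool],
) -> Tuple[str, str]:
    """Try models cheapest-first; return (tier_name, output) from the first acceptable one."""
    if not tiers:
        raise ValueError("at least one model tier is required")
    for name, model in tiers[:-1]:
        output = model(prompt)
        if is_acceptable(output):
            return name, output
    # The last tier is the most capable model: return its answer unconditionally.
    name, model = tiers[-1]
    return name, model(prompt)
```

Escalated prompts pay for both calls, so the savings depend on how often the cheap tier's answer is accepted. Logging the tier name per interaction gives you that number.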

Cost range: $10,000 – $50,000/month ongoing (engineering + LLM + infrastructure)

Team: 2-4 engineers (ongoing), 1 product manager

This phase never ends. Agent systems require continuous maintenance, optimization, and monitoring. Budget for ongoing operational costs, not just the initial build.

Total cost breakdown

| Phase | Timeline | Cost Range | Cumulative |
|---|---|---|---|
| POC | 2-4 weeks | $5K – $25K | $5K – $25K |
| MVA | 4-8 weeks | $25K – $100K | $30K – $125K |
| Production | 6-12 weeks | $75K – $300K | $105K – $425K |
| Scale | Ongoing | $10K – $50K/mo | + monthly |
| Total to launch | 3-6 months | $105K – $425K | |

These ranges assume a mid-market company with standard compliance requirements. Startups building internal tools fall at the low end. Enterprises in regulated industries (finance, healthcare) fall at the high end or above.
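The cumulative figures are running sums of the per-phase ranges, which makes them easy to recompute with your own estimates. The sketch below uses the numbers from the table. The function names are illustrative, and `total_with_operations` is an added convenience that assumes the Phase 4 monthly range stays flat.

```python
from itertools import accumulate
from typing import List, Sequence, Tuple

Range = Tuple[int, int]  # (low, high)

# Phase name, duration in weeks, cost in USD, copied from the table above.
PHASES: List[Tuple[str, Range, Range]] = [
    ("POC", (2, 4), (5_000, 25_000)),
    ("MVA", (4, 8), (25_000, 100_000)),
    ("Production", (6, 12), (75_000, 300_000)),
]
MONTHLY_OPERATIONS: Range = (10_000, 50_000)  # Phase 4, per month


def cumulative(ranges: Sequence[Range]) -> List[Range]:
    """Running (low, high) totals across phases."""
    lows = accumulate(low for low, _ in ranges)
    highs = accumulate(high for _, high in ranges)
    return list(zip(lows, highs))


def total_with_operations(months: int) -> Range:
    """Launch cost plus a number of months of Phase 4 operations."""
    launch_low, launch_high = cumulative([cost for _, _, cost in PHASES])[-1]
    ops_low, ops_high = MONTHLY_OPERATIONS
    return launch_low + months * ops_low, launch_high + months * ops_high


cost_totals = cumulative([cost for _, _, cost in PHASES])
week_totals = cumulative([weeks for _, weeks, _ in PHASES])
for (name, _, _), cost, weeks in zip(PHASES, cost_totals, week_totals):
    print(f"{name}: ${cost[0]:,} - ${cost[1]:,} after {weeks[0]}-{weeks[1]} weeks")
```

Under these figures, launch plus twelve months of Phase 4 operations comes to roughly $225K at the low end and $1.025M at the high end, which is why the ongoing costs belong in the first budget conversation.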

Where the money goes

Engineering time (60-70% of total cost). Most of the cost is people, not technology. Senior engineers with agent infrastructure experience command $150-250K salaries. Harness engineering is specialized work, and the talent pool is small.

LLM API costs (15-25% of total cost). Development, testing, and evaluation require thousands of model calls. Production traffic adds ongoing costs. Budget $2,000-10,000/month for development and testing, plus per-interaction costs in production.

Infrastructure (10-15% of total cost). Vector databases for RAG, observability tools, compute for running the harness, and CI/CD infrastructure. Most teams use cloud services, which cost $500-5,000/month depending on scale.

What determines where you fall in the range

Low end ($105K, 3 months):

– Internal-facing agent with tolerant users

– No regulatory compliance requirements

– Simple use case (single agent, few tools)

– Team has prior agent infrastructure experience

– Low traffic (under 1,000 interactions/day)

High end ($425K+, 6+ months):

– Customer-facing agent with high quality bar

– Regulatory compliance (finance, healthcare, legal)

– Complex use case (multi-agent, many tools)

– Team learning agent infrastructure for the first time

– High traffic (10,000+ interactions/day)

The hidden costs teams forget

Evaluation infrastructure. Building and maintaining evaluation datasets, grading pipelines, and quality dashboards costs 10-15% of total project effort. Teams that skip this don’t have cheaper projects; they have projects that fail in production.

On-call operations. Agent systems need monitoring and incident response. Budget for on-call rotation, incident playbooks, and the engineering time to investigate production issues.

Model provider changes. LLM providers update models regularly. Each update can change agent behavior. Budget time for re-evaluation and prompt adjustment after every model update.

Scope creep. Stakeholders will request new capabilities after seeing the agent work. “Can it also handle billing disputes?” is a common expansion request that adds weeks of work. Manage scope actively.

Setting expectations with stakeholders

Share these timelines and costs early. The most common project failure isn’t technical; it’s a gap between stakeholder expectations and reality. Three principles:

The demo is not the product. A working prototype in two weeks does not mean production launch in four weeks. Explain the production hardening phase explicitly.

Quality takes evaluation infrastructure. You can’t know if the agent is “good enough” without evaluation data. Budget for building measurement before building features.

Costs are ongoing, not one-time. Agent systems have recurring costs: LLM API fees, infrastructure, monitoring, and engineering maintenance. Present these as monthly operational costs from the start.

For a detailed ROI analysis of agent systems, see our AI agent ROI calculator. For the production architecture that supports these deployments, see our deployment operations guide.

Frequently asked questions

Can we skip the POC phase if we’re confident in the approach?

No. The POC reveals feasibility issues that no amount of planning uncovers. A two-week POC that reveals a fundamental limitation saves four months of wasted development. The POC isn’t about building; it’s about learning.

Why is production hardening the longest phase?

Because production systems must handle edge cases, not just the happy path. Users send unexpected inputs. APIs have outages. Models produce inconsistent outputs. Each of these needs specific handling, and the number of edge cases is larger than teams expect.

How do I budget for ongoing LLM API costs?

Estimate your expected interaction volume. Multiply by your average tokens per interaction. Multiply by your model’s per-token price. Add a 50% buffer for retries, evaluation runs, and traffic growth. Review monthly and adjust. Most teams overestimate volume and underestimate per-interaction token usage.
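The calculation above fits in a few lines. This sketch makes simplifying assumptions that you should adjust: a single blended price per million tokens (providers typically price input and output tokens separately), a 30-day month, and hypothetical parameter names.

```python
def monthly_llm_budget(
    interactions_per_day: float,
    tokens_per_interaction: float,
    price_per_million_tokens: float,
    buffer: float = 0.5,
    days_per_month: int = 30,
) -> float:
    """Estimated monthly LLM spend in dollars, including the safety buffer."""
    monthly_tokens = interactions_per_day * days_per_month * tokens_per_interaction
    base_cost = monthly_tokens * price_per_million_tokens / 1_000_000
    # The buffer covers retries, evaluation runs, and traffic growth.
    return base_cost * (1 + buffer)


# Illustrative inputs only: 1,000 interactions/day, 8,000 tokens each,
# and a hypothetical blended price of $5 per million tokens.
print(monthly_llm_budget(1_000, 8_000, 5.0))  # 1800.0
```

With those example inputs, the base cost is $1,200 per month and the 50% buffer brings the budget to $1,800. Since token usage per interaction is the number teams tend to underestimate, measure it from pilot logs rather than guessing.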

What’s the biggest risk to timeline?

Scope expansion during development. The agent works for the initial use case, and stakeholders immediately want to add more capabilities. Each capability addition triggers another round of evaluation, reliability work, and testing. Define scope firmly at the start of each phase and treat additions as separate phases.
