If each step in your agent workflow is 95% reliable, which is optimistic for current LLMs, then a 20-step task succeeds only 36% of the time. That math is why AI agent testing is not optional. It is the difference between a demo that works and a production system that works.
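The 36% figure follows from multiplying per-step reliabilities together. A minimal sketch of that arithmetic, assuming every step succeeds independently and with the same probability (`task_success_rate` is an illustrative name, not part of any library):

```python
def task_success_rate(step_reliability: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent, equally reliable steps."""
    if not 0.0 <= step_reliability <= 1.0:
        raise ValueError("step_reliability must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_reliability ** steps


if __name__ == "__main__":
    # Show how quickly end-to-end success decays as the workflow gets longer.
    for steps in (5, 10, 20):
        print(f"{steps} steps at 95% each: {task_success_rate(0.95, steps):.1%}")
```

Real agent steps are rarely independent or equally reliable, so treat the result as a rough planning number rather than a prediction.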

Traditional software testing assumes deterministic behavior: given input X, the system produces output Y every time. Agents violate this assumption at every level. The same prompt produces different reasoning chains. The same tool call returns different results depending on external state. The same evaluation run scores differently because even the judge model is non-deterministic.

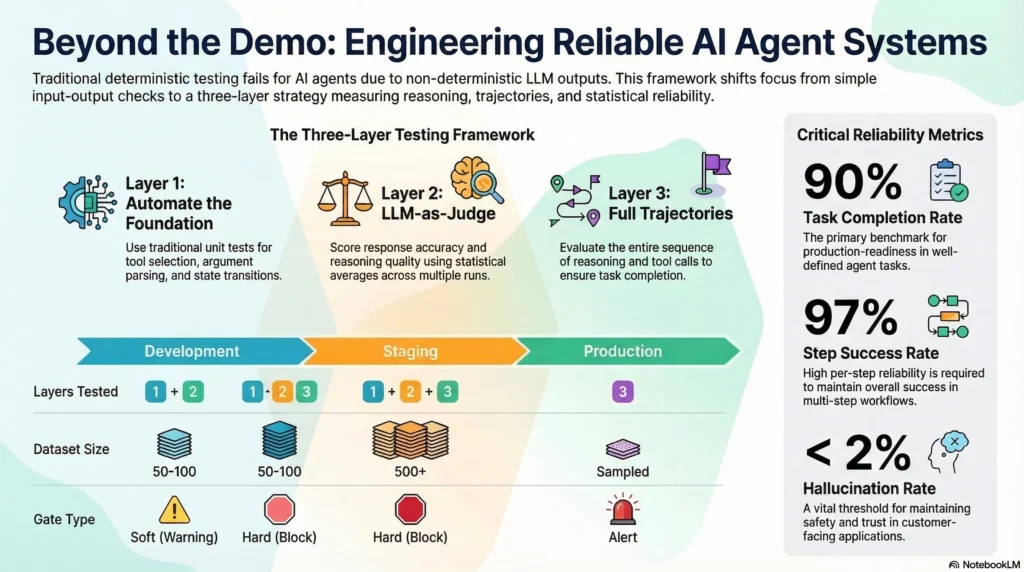

This guide covers a three-layer testing framework built for this reality, the specific metrics that matter for agent reliability, and how to build an evaluation pipeline that catches failures before they reach production. If you are running agents in production, or planning to, this is the testing methodology that turns 36% success into 95%+ task completion.


Why Traditional Testing Breaks for AI Agents

Traditional unit tests verify that a function produces the same output for the same input. Integration tests verify that components work together correctly. End-to-end tests verify that the full system delivers the expected result.

AI agents break all three assumptions. A unit test for an agent’s reasoning step cannot assert an exact output because the LLM produces different text each time. An integration test for a tool call depends on external API state that changes between runs. An end-to-end test for a multi-step workflow compounds non-determinism at every step.

The result: teams that apply traditional testing approaches to agents either write tests that are so loose they catch nothing, or so strict they produce constant false failures. Both outcomes lead to the same place: teams stop trusting their tests and ship without confidence.

Thirty-two percent of organizations cite quality as the top barrier to deploying agents in production, according to LangChain’s State of AI Agents report. The problem is not that teams do not care about quality. It is that they lack a testing methodology designed for non-deterministic systems.

The Three-Layer Agent Testing Framework

Agent testing requires a layered approach that separates what can be tested deterministically from what requires statistical evaluation. Each layer uses different tools, different assertions, and different pass/fail criteria.

Layer 1: Deterministic Logic Tests

Not everything in an agent system is non-deterministic. Tool call routing, argument parsing, response formatting, state machine transitions, and configuration logic are all deterministic. These components should be tested with traditional unit tests using exact assertions.

What to test:

– Tool selection logic (given this context, does the agent call the right tool?)

– Argument construction (does the tool call include the correct parameters?)

– Response parsing (does the agent correctly extract structured data from tool responses?)

– State transitions (does the agent move to the correct state after each action?)

– Input validation (does the agent reject malformed inputs before processing?)

How to test: Standard unit test frameworks. Assert exact outputs. Run on every commit. These tests should be fast, cheap, and comprehensive. If a deterministic component fails, the test should catch it immediately.

This layer covers roughly 30-40% of your agent’s behavior. It is the foundation that everything else builds on.
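As an illustration of Layer 1, here is a small sketch of exact-assertion unit tests for routing, argument construction, and input validation. The routing table, `route_tool`, and `build_refund_args` are hypothetical stand-ins for whatever deterministic code your own agent contains.

```python
import unittest

# Hypothetical routing table for illustration; a real agent would define its own.
TOOL_ROUTES = {"weather": "get_weather", "refund": "issue_refund"}


def route_tool(intent: str) -> str:
    """Map a classified intent to a tool name; unknown intents are rejected."""
    try:
        return TOOL_ROUTES[intent]
    except KeyError:
        raise ValueError(f"no tool registered for intent {intent!r}") from None


def build_refund_args(order_id: str, amount_cents: int) -> dict:
    """Construct tool-call arguments, validating inputs before any call is made."""
    if not order_id or amount_cents <= 0:
        raise ValueError("order_id must be non-empty and amount_cents positive")
    return {"order_id": order_id, "amount_cents": amount_cents}


class DeterministicLogicTests(unittest.TestCase):
    def test_routes_known_intent(self):
        self.assertEqual(route_tool("refund"), "issue_refund")

    def test_rejects_unknown_intent(self):
        with self.assertRaises(ValueError):
            route_tool("teleport")

    def test_builds_exact_arguments(self):
        self.assertEqual(
            build_refund_args("A-17", 1250),
            {"order_id": "A-17", "amount_cents": 1250},
        )

    def test_rejects_malformed_input(self):
        with self.assertRaises(ValueError):
            build_refund_args("", 1250)
```

Saved as a test module, this runs under `python -m unittest` with no model calls, which is what keeps the layer fast and cheap enough for every commit.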

Layer 2: LLM Output Quality Evaluation

The second layer evaluates the quality of LLM-generated outputs using evaluation frameworks that measure faithfulness, relevance, coherence, and hallucination. These tests call a judge model, which means they are slower, more expensive, and themselves non-deterministic.

What to evaluate:

– Response accuracy (does the answer match the ground truth?)

– Reasoning quality (does the chain of thought follow logical steps?)

– Hallucination detection (does the response contain claims not supported by the provided context?)

– Instruction adherence (does the output follow the formatting and constraint requirements?)

– Tool use appropriateness (did the agent choose to use tools when it should have, and avoid them when it should not?)

How to evaluate: Use LLM-as-judge methodology. Provide the judge model with the expected output from your golden dataset and ask it to score similarity on a 0-1 scale. Average scores across 3+ runs to absorb non-deterministic variance.

Pass/fail thresholds: Adopt soft failure thresholds instead of binary pass/fail.

| Score Range | Classification | Action |
|---|---|---|
| 0.8 – 1.0 | Pass | No action needed |
| 0.5 – 0.8 | Soft failure | Review and investigate |
| 0.0 – 0.5 | Hard failure | Block deployment |

This layer catches quality degradation that deterministic tests miss entirely. A code change that still produces technically valid outputs but drops reasoning quality from 0.85 to 0.55 moves the agent from pass to soft failure and should be investigated before it ships; a drop below 0.5 should block deployment outright. Only statistical evaluation detects either regression.
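The averaging and threshold logic can be sketched in a few lines. The judge is passed in as a plain callable because the judge API is not specified here; `evaluate` and `classify` are illustrative names. The table's bands share their boundary values, so this sketch assumes that exactly 0.8 counts as a pass and exactly 0.5 as a soft failure.

```python
from statistics import mean
from typing import Callable

PASS_THRESHOLD = 0.8       # lower bound of the "Pass" band in the table above
HARD_FAIL_THRESHOLD = 0.5  # scores below this are hard failures


def classify(score: float) -> str:
    """Map an averaged judge score onto the three bands."""
    if score >= PASS_THRESHOLD:
        return "pass"
    if score >= HARD_FAIL_THRESHOLD:
        return "soft_failure"
    return "hard_failure"


def evaluate(
    judge: Callable[[str, str], float],
    output: str,
    expected: str,
    runs: int = 3,
) -> tuple[float, str]:
    """Score one output several times and classify the mean.

    `judge` is any callable returning a 0-1 similarity score; in practice
    it would wrap a call to a judge model (an assumption of this sketch).
    """
    if runs < 1:
        raise ValueError("runs must be at least 1")
    scores = [judge(output, expected) for _ in range(runs)]
    average = mean(scores)
    return average, classify(average)
```

Averaging before classifying means a single noisy judge run cannot flip the outcome on its own.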

Layer 3: End-to-End Trajectory Evaluation

The third layer evaluates complete agent trajectories: the full sequence of reasoning, tool calls, and decisions that an agent makes to complete a task. This is where you test the agent as a system, not just its individual components.

What to evaluate:

– Task completion rate (did the agent achieve the goal?)

– Step efficiency (how many steps did it take compared to the optimal path?)

– Error recovery (when a tool call failed, did the agent recover gracefully?)

– Escalation accuracy (did the agent escalate to a human when it should have?)

– Cost efficiency (how many tokens did the task consume?)

How to evaluate: Build scenario-based evaluation suites that simulate multi-turn conversations and complex tool-use sequences. Run each scenario multiple times and measure statistical outcomes.

The key insight from production teams: test trajectories, not just outputs. When you capture how an agent thinks, acts, fails, and recovers, testing becomes far more structured than testing final outputs alone.
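To make trajectory evaluation concrete, here is one possible way to record a trajectory and derive three of the measures listed above. The `Step` and `Trajectory` types and the exact metric definitions are assumptions made for this sketch; production tracing tools capture far more detail.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    tool: str   # name of the tool the agent called
    ok: bool    # whether the call succeeded


@dataclass
class Trajectory:
    steps: list[Step]
    goal_reached: bool


def step_efficiency(trajectory: Trajectory, optimal_steps: int) -> float:
    """Optimal step count divided by actual step count, capped at 1.0."""
    if not trajectory.steps:
        return 0.0
    return min(1.0, optimal_steps / len(trajectory.steps))


def error_recovery_rate(trajectory: Trajectory) -> Optional[float]:
    """Fraction of failed steps followed by a later successful step.

    Returns None when the trajectory contains no failures.
    """
    failures = [i for i, step in enumerate(trajectory.steps) if not step.ok]
    if not failures:
        return None
    recovered = sum(
        1 for i in failures if any(s.ok for s in trajectory.steps[i + 1:])
    )
    return recovered / len(failures)


def completion_rate(trajectories: list[Trajectory]) -> float:
    """Share of trajectories in which the agent reached its goal."""
    if not trajectories:
        raise ValueError("need at least one trajectory")
    return sum(t.goal_reached for t in trajectories) / len(trajectories)
```

Running the same scenario several times and aggregating these values gives the statistical outcome that a single run cannot.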

Building the Evaluation Pipeline

An evaluation pipeline is not a single test suite. It is infrastructure that runs continuously across development, staging, and production.

Development: Fast Feedback

In development, run Layer 1 tests on every commit and Layer 2 evaluations on every pull request. Keep golden datasets small (50-100 examples) and focused on the highest-risk scenarios. The goal is fast feedback: a developer should know within minutes if their change breaks agent behavior.

Staging: Comprehensive Evaluation

In staging, run all three layers against your full golden dataset (500+ examples). Include adversarial test cases: malformed inputs, conflicting instructions, tools that return errors, and scenarios designed to trigger hallucination. This gate should block any deployment that drops below your quality thresholds.

Production: Continuous Monitoring

In production, run Layer 3 evaluations continuously on real traffic. Sample a percentage of agent interactions, evaluate them asynchronously, and alert when quality metrics drift below thresholds. This catches degradation caused by changes in external APIs, model updates, or distribution shifts in user inputs.

| Pipeline Stage | Layers | Dataset Size | Frequency | Gate Type |
|---|---|---|---|---|
| Development | 1 + 2 | 50-100 | Every commit/PR | Soft (warn) |
| Staging | 1 + 2 + 3 | 500+ | Pre-deployment | Hard (block) |
| Production | 3 | Sampled traffic | Continuous | Alert |
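For the production stage, sampling and alerting might look roughly like the sketch below. It assumes each interaction has a stable string ID and that quality scores arrive asynchronously from an evaluator; `should_sample` and `DriftMonitor` are hypothetical names.

```python
import hashlib
from collections import deque


def should_sample(interaction_id: str, rate: float) -> bool:
    """Deterministically select roughly `rate` of interactions by hashing the ID."""
    digest = hashlib.sha256(interaction_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < rate


class DriftMonitor:
    """Signal an alert when the rolling mean quality score falls below a threshold."""

    def __init__(self, threshold: float, window: int = 100):
        self.threshold = threshold
        self.scores: deque = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add a score; return True if an alert should fire.

        No alert fires until the window is full, to avoid noise from
        the first few samples.
        """
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        rolling_mean = sum(self.scores) / len(self.scores)
        return window_full and rolling_mean < self.threshold
```

Hash-based sampling keeps the decision reproducible, so the same interaction is always either in or out of the evaluated set.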

Golden Dataset Design

Your golden dataset is the backbone of your evaluation pipeline. Design it with these principles:

- Cover the distribution. Include common cases (80%), edge cases (15%), and adversarial cases (5%).

- Include expected trajectories. Not just the final answer, but the expected reasoning path and tool call sequence.

- Version everything. Golden datasets evolve. Track changes the same way you track code changes.

- Test tool failures. Include scenarios where tools return errors, timeouts, and malformed responses. An agent that only works when every tool call succeeds is not production-ready.
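One way to encode these principles is a typed record per example plus a check that the dataset stays close to the target mix. The field names and `mix_deviation` helper are illustrative assumptions, not a standard schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenExample:
    example_id: str
    category: str                     # "common", "edge", or "adversarial"
    user_input: str
    expected_answer: str
    expected_tools: tuple[str, ...]   # expected tool call sequence, in order


# Target distribution from the principles above.
TARGET_MIX = {"common": 0.80, "edge": 0.15, "adversarial": 0.05}


def mix_deviation(examples: list[GoldenExample]) -> dict[str, float]:
    """Return actual share minus target share for each category."""
    if not examples:
        raise ValueError("dataset is empty")
    counts = Counter(example.category for example in examples)
    total = len(examples)
    return {
        category: counts.get(category, 0) / total - share
        for category, share in TARGET_MIX.items()
    }
```

Keeping the examples in a plain file under version control, next to the code that consumes them, is one simple way to track dataset changes the same way as code changes.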

Six Metrics That Matter for Agent Reliability

Measuring the right things is as important as testing the right things. These six metrics, tracked continuously, give you a complete picture of agent reliability.

1. Task Completion Rate

The percentage of tasks the agent completes successfully without human intervention. This is your primary reliability metric. Track it overall and segmented by task type, complexity, and user segment.

Target: 90%+ for production readiness on well-defined tasks.

2. Step Success Rate

The percentage of individual agent steps that succeed. When task completion drops, step success rate tells you which part of the pipeline is degrading.

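Target: 97%+ per step as a floor. Note that 97% per step keeps task completion above 90% only for workflows of up to three steps (0.97^3 ≈ 0.91); a 20-step workflow needs roughly 99.5% per step, assuming steps fail independently.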
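How high the per-step rate must be depends on workflow length. A quick sketch of the calculation, assuming steps fail independently (`required_step_rate` is an illustrative helper):

```python
def required_step_rate(target_task_rate: float, steps: int) -> float:
    """Per-step success rate needed for `steps` independent steps to meet the task target."""
    if not 0.0 < target_task_rate <= 1.0:
        raise ValueError("target_task_rate must be in (0, 1]")
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return target_task_rate ** (1.0 / steps)


if __name__ == "__main__":
    # Per-step rate needed for 90% task completion at several workflow lengths.
    for steps in (3, 10, 20):
        print(f"{steps} steps: {required_step_rate(0.90, steps):.2%} per step")
```

For a 90% task target, a 3-step workflow needs about 96.5% per step, while a 20-step workflow needs about 99.5%.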

3. Tool Call Failure Rate

The percentage of tool calls that fail due to errors, timeouts, or malformed responses. Industry data indicates 3-15% baseline failure rates for tool calls. Your harness infrastructure should include retry logic and validation to bring effective failure rates below 1%.

Target: < 1% effective failure rate after retries and fallbacks.
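A rough way to size the retry budget: if failures are transient and independent between attempts (an assumption that does not hold during an outage), the effective failure rate is the base rate raised to the number of attempts. The helper names below are illustrative.

```python
def effective_failure_rate(base_failure_rate: float, max_retries: int) -> float:
    """Chance that the first attempt and every retry all fail."""
    if not 0.0 <= base_failure_rate <= 1.0:
        raise ValueError("base_failure_rate must be between 0 and 1")
    if max_retries < 0:
        raise ValueError("max_retries must be non-negative")
    return base_failure_rate ** (max_retries + 1)


def retries_needed(base_failure_rate: float, target: float) -> int:
    """Smallest retry count that brings the effective rate strictly below `target`."""
    if not 0.0 <= base_failure_rate < 1.0:
        raise ValueError("base_failure_rate must be in [0, 1)")
    if target <= 0.0:
        raise ValueError("target must be positive")
    retries = 0
    while effective_failure_rate(base_failure_rate, retries) >= target:
        retries += 1
    return retries
```

Under these assumptions, a 15% base failure rate needs two retries to fall below 1%, and a 3% base rate needs one. Persistent failures still require fallbacks, because retrying a tool that is down does not help.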

4. Hallucination Rate

The percentage of responses containing claims not supported by the provided context. Measure this using LLM-as-judge evaluation on sampled production traffic.

Target: < 2% for customer-facing applications.

5. Mean Tokens Per Task

The average token consumption per completed task. This is both a cost metric and a quality signal. Increasing token consumption often indicates the agent is taking more steps, retrying more often, or generating unnecessarily verbose reasoning.

Target: Stable or decreasing over time. Sudden increases warrant investigation.

6. Escalation Rate

The percentage of tasks escalated to human operators. Too high means the agent is not capable enough. Too low in complex domains means the agent is overconfident and completing tasks it should escalate.

Target: Depends on domain. Establish a baseline and monitor for drift in either direction.
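Four of these six metrics can be computed directly from per-task log records; step success rate and hallucination rate need step-level traces and judge scores, so they are left out of this sketch. The `TaskRecord` fields are assumed for illustration and would map onto whatever your logging already captures.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    completed: bool      # finished successfully without human intervention
    escalated: bool      # handed off to a human operator
    tokens: int          # total tokens consumed by the task
    tool_calls: int      # number of tool calls attempted
    tool_failures: int   # tool calls that errored, timed out, or were malformed


def reliability_metrics(records: list[TaskRecord]) -> dict[str, float]:
    """Aggregate task-level reliability metrics from logged records."""
    if not records:
        raise ValueError("need at least one record")
    total = len(records)
    completed = [r for r in records if r.completed]
    total_calls = sum(r.tool_calls for r in records)
    total_failures = sum(r.tool_failures for r in records)
    return {
        "task_completion_rate": len(completed) / total,
        "escalation_rate": sum(r.escalated for r in records) / total,
        "tool_call_failure_rate": total_failures / total_calls if total_calls else 0.0,
        # Mean is taken over completed tasks only, matching the definition above.
        "mean_tokens_per_task": (
            sum(r.tokens for r in completed) / len(completed) if completed else 0.0
        ),
    }
```

Computing the same dictionary per task type or user segment gives the segmented view recommended for task completion rate.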

Testing as Harness Engineering

AI agent testing is not a standalone practice. It is a core component of harness engineering, the discipline of building the infrastructure that makes agents reliable in production.

The evaluation pipeline sits alongside verification loops, cost controls, observability, and graceful degradation in the agent harness. Together, these components form the operational structure that transforms unreliable LLM outputs into reliable business processes.

Teams that treat testing as separate from infrastructure consistently underinvest. They build the agent, add some tests, ship to production, and scramble when things break. Teams that build testing into the harness from day one catch 60% of production failures before deployment (Stanford’s research) and identify 85% of critical issues through simulation (Google’s data).

The production deployment guide covers how evaluation pipelines integrate with the broader deployment workflow, including pre-deployment gates, canary deployments, and post-deployment monitoring.

Common Testing Mistakes

Testing Outputs Instead of Trajectories

The most common mistake. Teams test whether the agent produced the right final answer without examining how it got there. An agent that produces the correct answer through a flawed reasoning path will fail on the next similar but slightly different task. Test the trajectory: the reasoning, the tool calls, the decision points.

Binary Pass/Fail on Non-Deterministic Outputs

Applying exact match assertions to LLM outputs produces constant false failures. Teams disable the tests. Use soft failure thresholds and statistical evaluation instead.

Testing Only the Happy Path

Agents in production encounter malformed inputs, failing tools, conflicting instructions, and adversarial users. If your test suite only includes clean, well-structured scenarios, it tells you nothing about production reliability. Dedicate 20% of your test cases to failure scenarios.

Evaluating Too Infrequently

Running evaluations only before deployment misses production drift. External APIs change, model providers update their models, and user behavior shifts. Continuous evaluation on production traffic is the only way to catch these changes.

Ignoring Cost in Evaluation

An agent that completes tasks perfectly but consumes 10x the expected tokens is not passing your quality bar. Include token consumption in your evaluation criteria. Runaway costs are a reliability failure, not just a budget concern.

Frequently Asked Questions

How do I test AI agents when outputs are non-deterministic?

Use a three-layer approach: deterministic tests for logic components, LLM-as-judge evaluation with soft failure thresholds for output quality, and trajectory-based evaluation for end-to-end behavior. Average scores across 3+ runs per test case to absorb variance.

What tools should I use for AI agent evaluation?

The leading platforms in 2026 include Maxim AI for end-to-end simulation and evaluation, Langfuse for open-source tracing, LangSmith for LangChain-native testing, Arize for observability with drift detection, and Braintrust for collaborative evaluation. Choose based on your framework and existing infrastructure.

How large should my golden dataset be?

Start with 50-100 examples for development feedback. Scale to 500+ for staging gates. Include 80% common cases, 15% edge cases, and 5% adversarial cases. Version your datasets and update them as you discover new failure patterns in production.

What is an acceptable task completion rate for production agents?

For well-defined, bounded tasks: 90%+ task completion rate. For complex, multi-step workflows: 85%+. For creative or open-ended tasks: establish baselines and measure improvement over time rather than targeting a specific threshold.

How do I test tool call reliability?

Mock external tools in your test environment to simulate success, failure, timeouts, and malformed responses. Test that your agent handles each scenario correctly: retrying on transient failures, falling back on persistent failures, and validating response schemas before processing. Then monitor real tool call failure rates in production.
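A minimal sketch of this approach using Python's standard `unittest.mock`. The `call_with_retry` wrapper and the `{"result": ...}` response shape are assumptions made for illustration; substitute your harness's own retry and schema-validation logic.

```python
import time
from unittest.mock import Mock


def call_with_retry(tool, args: dict, max_retries: int = 2, backoff_s: float = 0.0) -> dict:
    """Call `tool`, retrying on TimeoutError and rejecting malformed responses."""
    last_error = None
    for attempt in range(max_retries + 1):
        try:
            response = tool(**args)
        except TimeoutError as exc:
            last_error = exc
            if attempt < max_retries:
                time.sleep(backoff_s * (2 ** attempt))  # exponential backoff
            continue
        # Validate the (assumed) response schema before handing it to the agent.
        if not isinstance(response, dict) or "result" not in response:
            raise ValueError(f"malformed tool response: {response!r}")
        return response
    raise RuntimeError("tool failed after retries") from last_error


# Transient failure: the mocked tool times out once, then succeeds.
flaky_tool = Mock(side_effect=[TimeoutError("slow"), {"result": 42}])
assert call_with_retry(flaky_tool, {"query": "x"}) == {"result": 42}
assert flaky_tool.call_count == 2
```

The same pattern covers persistent failures (a mock that always raises) and malformed responses (a mock that returns an unexpected type), so each failure mode gets its own deterministic test.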

Building Confidence in Non-Deterministic Systems

AI agent testing is a different discipline from traditional software testing. The non-determinism is not a bug to eliminate. It is a fundamental characteristic to manage through statistical evaluation, layered testing, and continuous monitoring.

The three-layer framework (deterministic logic tests, statistical quality evaluation, and trajectory-based end-to-end testing) provides the structure needed to build confidence in systems that never produce the same output twice.

Three steps to implement this week:

- Identify the deterministic components in your agent system and add traditional unit tests for them. This is the fastest path to testing coverage.

- Build a golden dataset of 50 examples covering your agent’s most common and most critical workflows. Include 10 failure scenarios.

- Subscribe to the newsletter for weekly production patterns, including evaluation pipeline architectures and testing strategies from teams running agents at scale.

The math does not lie: 95% reliability per step yields 36% success over 20 steps. Testing is how you close that gap.
