The AI agent ecosystem continues its rapid evolution, with new frameworks, security innovations, and model capabilities emerging at an accelerating pace. This week brings critical insights into production-grade agent deployment, comprehensive framework analysis, and significant advances in LLM context windows that are reshaping how developers build intelligent systems. Here’s what you need to know to stay ahead in the agent engineering landscape.

1. LangChain Remains the Foundation of AI Agent Development

LangChain’s continued dominance in the open-source agent engineering space reflects its maturity and adoption across enterprises and startups alike. The framework’s ecosystem of integrations, abstractions, and tools has made it the de facto standard for developers building agentic systems, particularly those handling complex, multi-step workflows.

The prominence of LangChain underscores a critical reality: successful agent development requires robust, battle-tested abstractions that bridge the gap between raw LLM capabilities and production-grade applications. As the agent landscape fragments into specialized tools (LangGraph for state management, LangServe for deployment), LangChain’s core position as the foundational layer becomes increasingly valuable. For developers entering agent engineering, starting with LangChain remains the path of least resistance, though understanding its architecture is essential for building scalable agentic systems.

2. AI Agents Benchmarked on Real Lending Workflows

A critical case study from the community benchmarks AI agents against real-world lending workflows, testing their performance on tasks like document analysis, risk assessment, and decision automation. This research reveals both the capabilities and limitations of current agent implementations when applied to high-stakes financial processes.

This work is particularly significant because lending represents a regulated domain where agent failures carry material consequences—both financial and legal. Early results suggest that agents excel at initial document triage and straightforward decision paths but struggle with edge cases and novel scenarios requiring human judgment. The findings emphasize that successful agent deployment in financial services requires robust guardrails, explainability mechanisms, and human-in-the-loop verification. For enterprises considering agents in regulated industries, this benchmark provides a reality check: agents are powerful assistants, but not yet autonomous decision-makers in high-stakes domains.
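The human-in-the-loop idea above can be sketched as a simple routing rule. This is a minimal illustration, not the benchmark's method: the `AgentDecision` fields, the `route_decision` function, and the 0.9 confidence threshold are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATED_PATH = "automated_path"
    HUMAN_REVIEW = "human_review"


@dataclass
class AgentDecision:
    application_id: str
    recommendation: str  # e.g. "approve" or "decline" (hypothetical labels)
    confidence: float    # agent's self-reported confidence in [0, 1]
    rationale: str       # explanation kept for audit purposes


def route_decision(decision: AgentDecision, min_confidence: float = 0.9) -> Route:
    """Send anything adverse, unexplained, or uncertain to a human reviewer."""
    if decision.recommendation != "approve":
        return Route.HUMAN_REVIEW  # adverse outcomes always get a human look
    if not decision.rationale.strip():
        return Route.HUMAN_REVIEW  # no explanation means no automation
    if decision.confidence < min_confidence:
        return Route.HUMAN_REVIEW  # edge cases tend to show up as low confidence
    return Route.AUTOMATED_PATH


decision = AgentDecision("app-001", "approve", 0.72, "Income documents verified")
print(route_decision(decision).value)
```

The design choice here is that the automated path is the exception: a decision has to pass every check to avoid review, which matches the article's framing of agents as assistants rather than autonomous decision-makers.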

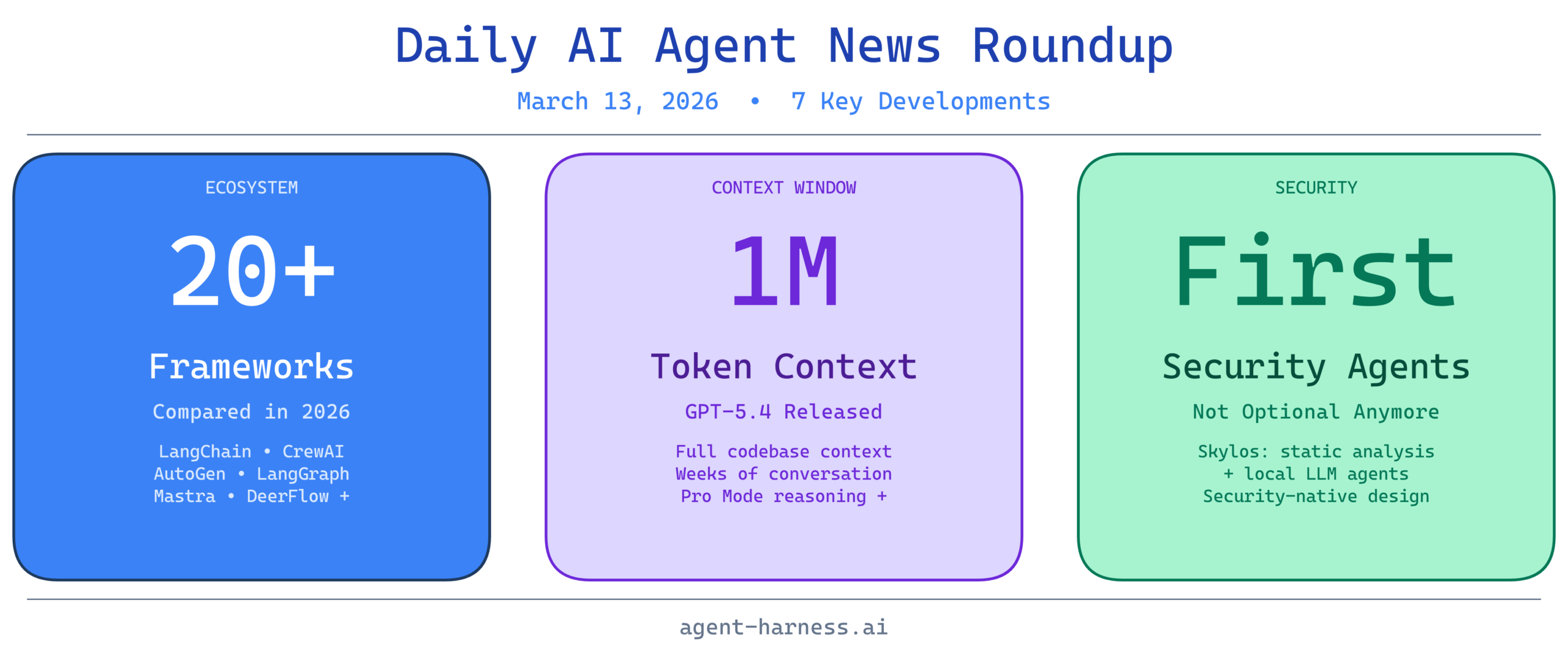

3. Skylos: Security-First AI Agent Development

Skylos introduces a distinctive approach to agent security by combining static analysis with local LLM agents, addressing growing concerns about vulnerabilities in agentic systems. Rather than treating security as an afterthought, Skylos embeds it into the agent development lifecycle through automated code analysis and local inference.

As AI agents gain access to more sensitive systems and data, security becomes paramount. Skylos’s approach of leveraging smaller, local LLMs for security analysis offers a compelling middle ground between heavyweight static analysis tools and the risk of sending code to external APIs. This tool signals an important trend: the emergence of security-native frameworks designed specifically for agent development. Organizations deploying agents with access to critical systems should evaluate tools like Skylos as part of their security infrastructure, not as optional add-ons.
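To make the general pattern concrete, here is a rough sketch of pairing a static pass with a locally run reviewer. It is not Skylos's implementation or API: `RISKY_CALLS`, `find_risky_calls`, and `triage` are hypothetical names, the rule set is deliberately tiny, and the `review` callable stands in for whatever local model a team would actually use.

```python
import ast
from typing import Callable

RISKY_CALLS = {"eval", "exec"}  # illustrative rule set, far from complete


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Static pass: return (line number, function name) for each risky call."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


def triage(source: str, review: Callable[[str], str]) -> list[str]:
    """Hand only the flagged lines to a reviewer, e.g. a locally hosted model."""
    lines = source.splitlines()
    return [
        review(f"line {lineno}: {lines[lineno - 1].strip()}")
        for lineno, _name in find_risky_calls(source)
    ]


sample = "x = 1\ny = eval(user_input)\nprint(x)\n"
# The lambda is a placeholder for a call into a local model.
print(triage(sample, review=lambda finding: "needs review -> " + finding))
```

Because the reviewer only sees lines the static pass flagged, the code never has to leave the machine and the model's workload stays small.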

4. Comprehensive 2026 AI Agent Framework Comparison

A comprehensive comparison analyzing every major AI agent framework in 2026—including LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and over 20 others—provides clarity on the rapidly fragmenting agent ecosystem. This analysis breaks down architecture, use cases, strengths, and trade-offs across the full spectrum of available tools.

The sheer number of frameworks (20+) reflects both the immaturity and the explosive growth of agent engineering. Rather than indicating a winner, this fragmentation suggests that different frameworks excel in different contexts: CrewAI for multi-agent orchestration, LangGraph for complex state management, AutoGen for research-oriented workflows. The key insight for developers is that framework selection should be driven by specific architectural needs rather than hype. A lending agent and a research assistant have vastly different requirements, and the best framework for one may be suboptimal for the other. This comparison provides the foundation for making informed decisions in what has become a crowded, confusing landscape.

5. The Rise of the Deep Agent: Engineering Reliability at Scale

This analysis distinguishes between simple LLM workflows and deep agents: sophisticated systems designed for reliability, persistence, and complex task execution. It explores what separates a basic prompt-response loop from an agent capable of real-world work: memory management, error recovery, tool integration, and structured reasoning.

The distinction between “agent-like” and “truly agentic” systems is crucial as hype around AI agents peaks. Many deployed systems lack the sophistication required for genuine autonomy—they’re essentially wrapped LLM calls without proper state management or recovery mechanisms. Understanding this distinction helps teams assess whether their current implementations are genuinely agentic or merely simulating agency. For builders, this signals that deep agents require significant engineering investment beyond model selection. The complexity compounds when agents need to operate reliably over days, weeks, or months of continuous execution.
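As a rough illustration of what "state management and recovery" can mean in practice, the sketch below runs a sequence of steps with retries and a JSON checkpoint so that a restarted process resumes where it left off. The function name `run_with_recovery`, the checkpoint layout, and the retry policy are assumptions made for this example, not a description of any particular framework.

```python
import json
import time
from pathlib import Path
from typing import Callable

Step = Callable[[dict], dict]


def run_with_recovery(
    steps: list[tuple[str, Step]],
    checkpoint_path: Path,
    max_retries: int = 3,
    backoff_seconds: float = 0.0,
) -> dict:
    """Run named steps in order, retrying failures and persisting progress."""
    # Resume from the last checkpoint if one exists.
    if checkpoint_path.exists():
        state = json.loads(checkpoint_path.read_text())
    else:
        state = {"completed": [], "data": {}}

    for name, step in steps:
        if name in state["completed"]:
            continue  # already finished in a previous run
        for attempt in range(1, max_retries + 1):
            try:
                state["data"] = step(state["data"])
                break
            except Exception:
                if attempt == max_retries:
                    raise  # give up; the checkpoint still holds earlier progress
                time.sleep(backoff_seconds * attempt)
        state["completed"].append(name)
        checkpoint_path.write_text(json.dumps(state))
    return state["data"]
```

Each step takes the accumulated data and returns an updated copy, so the data must be JSON-serializable. A long-running agent would need more than this (idempotent tools, timeouts, durable storage), but the sketch shows the gap between a wrapped LLM call and a loop that survives failure.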

6. OpenAI’s Major Week: GPT-5.4 with 1M Context Windows

OpenAI released GPT-5.4 this week, expanding the context window to 1 million tokens and adding a Pro Mode for enhanced reasoning. This represents a generational leap in the types of problems models can address and the amount of information they can process at once.

The 1-million-token context window fundamentally changes what agents can do. Systems can now maintain richer memory, process entire codebases, handle weeks of conversation history, and reason over massive document sets without summarization. For agent engineering, this means agents can keep deeper context without the aggressive context-pruning strategies that smaller windows required. Pro Mode adds another dimension: the ability to trade compute for deeper reasoning, enabling agents to tackle genuinely complex problems. However, a larger context window does not automatically produce a better agent; proper information retrieval, ranking, and injection strategies remain critical.
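The point about retrieval, ranking, and injection can be illustrated with a small budget-aware selector. This is a sketch under stated assumptions: the `Chunk` type and `select_context` function are hypothetical, relevance scores are assumed to come from an upstream retriever, and the four-characters-per-token estimate is a crude stand-in for a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    score: float  # relevance score from an upstream retriever (assumed)


def estimate_tokens(text: str) -> int:
    # Crude assumption: roughly 4 characters per token.
    # Swap in the model's real tokenizer for anything serious.
    return max(1, len(text) // 4)


def select_context(chunks: list[Chunk], token_budget: int) -> list[Chunk]:
    """Greedily keep the highest-scoring chunks that fit in the budget."""
    selected: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = estimate_tokens(chunk.text)
        if used + cost > token_budget:
            continue  # skip what does not fit; a smaller chunk may still fit
        selected.append(chunk)
        used += cost
    return selected
```

Even with a very large window, a selector like this keeps low-relevance material out of the prompt; the budget simply becomes a tuning knob rather than a hard ceiling.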

7. The Weekly AI Breakthroughs: Multiple Model Updates Reshape the Landscape

Beyond OpenAI’s announcements, this week featured multiple AI updates across the industry, from Claude improvements to open-source model advances. The cumulative effect of these releases is raising the baseline capabilities available to agent developers, making sophisticated agentic systems more accessible than ever.

The acceleration in model updates creates both opportunity and challenge for agent teams. Opportunities lie in leveraging new capabilities—expanded context, improved reasoning, better tool use—to build more capable agents. Challenges emerge in managing the rapid change: should you upgrade immediately? Which models work best for your specific agent tasks? How do you ensure stability while benefiting from innovation? The answer often lies in abstraction layers: using frameworks like LangChain to decouple agent logic from model selection, allowing flexibility without complete rewrites.
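The abstraction-layer idea does not depend on any one framework. The sketch below shows the underlying pattern with a plain Python `Protocol`; `ChatModel`, `EchoModel`, `UppercaseModel`, and `SummaryAgent` are hypothetical names, and the two backends are trivial stand-ins for real provider clients.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the agent depends on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real one would call a provider SDK here."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """A second stand-in, to show that backends are interchangeable."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class SummaryAgent:
    """Agent logic written against the interface, not a specific model."""

    def __init__(self, model: ChatModel) -> None:
        self.model = model

    def run(self, document: str) -> str:
        return self.model.complete(f"Summarize: {document}")


# Swapping models is a one-line change at construction time.
print(SummaryAgent(EchoModel()).run("quarterly report"))
print(SummaryAgent(UppercaseModel()).run("quarterly report"))
```

With this shape, evaluating a newly released model means writing one adapter class and rerunning the same agent tests, rather than rewriting agent logic.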

What This Means for Agent Engineering

This week’s developments coalesce around several critical themes:

The agent ecosystem is maturing. With 20+ frameworks available, dedicated security tooling emerging, and production benchmarks being published, agent engineering is transitioning from experimental research to an engineering discipline. Teams can now make informed decisions based on real-world performance data and established best practices.

Context and reasoning are becoming differentiators. Million-token context windows and enhanced reasoning modes don’t automatically create better agents, but they do enable architectures that were previously impossible to build. The teams that win will be those that understand how to leverage these expanded capabilities effectively.

Security is no longer optional. As agents gain access to sensitive systems and data, frameworks like Skylos represent the beginning of security-native agent development. Organizations deploying agents need to view security as a first-class concern, not an afterthought.

The framework landscape will continue fragmenting. No single framework will dominate all use cases. Instead, developers will need to match framework selection to specific architectural requirements. Understanding the trade-offs between the top options is essential.

For builders in the agent space, this is an exciting inflection point. The raw capabilities are reaching maturity, the tooling ecosystem is crystallizing, and real-world applications are demonstrating both promise and limitations. The next phase of agent engineering won’t be about building more capable models—it will be about building more reliable, secure, and effective systems with the tools now available.