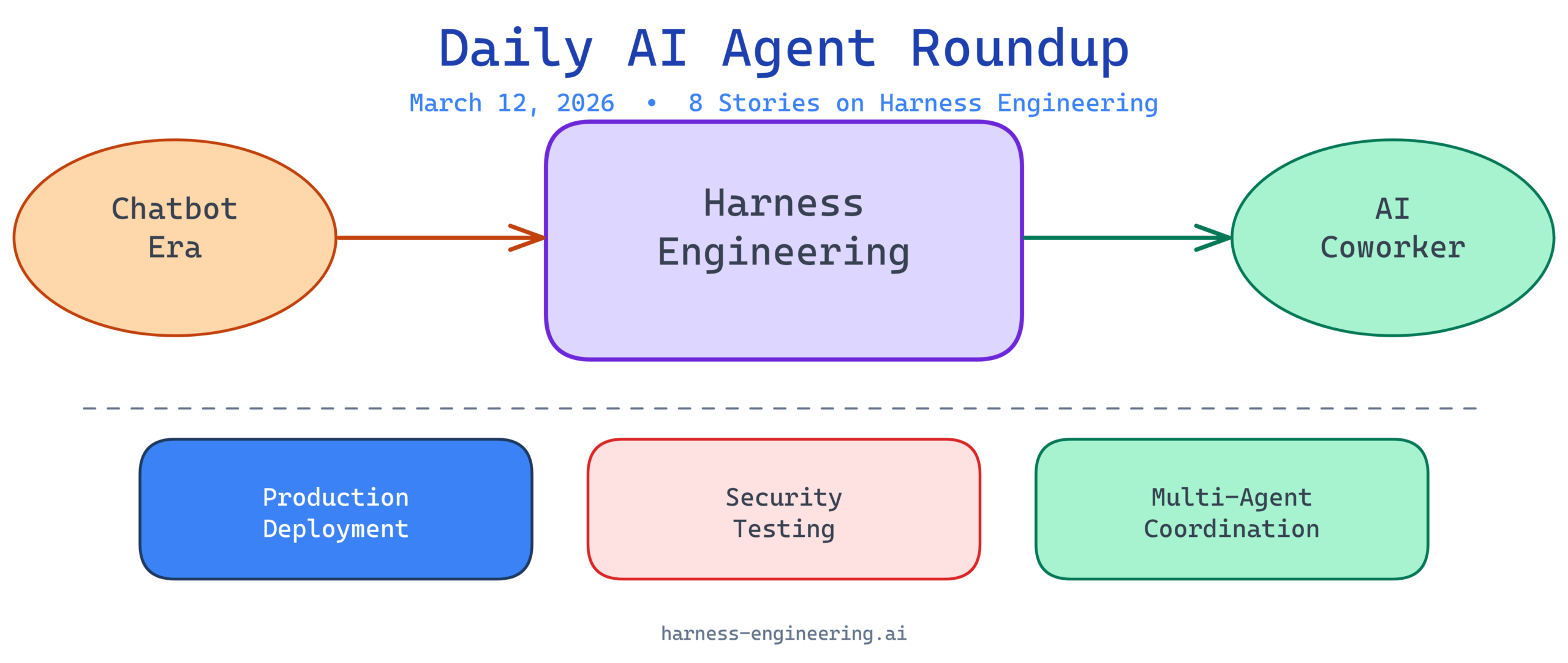

The AI agent landscape continues to mature rapidly, with production deployments, security best practices, and enterprise integration taking center stage. Today’s news cycle reveals critical insights for teams building and deploying AI agents at scale. Here’s what you need to know.

1. Lessons From Building and Deploying AI Agents to Production

Drawing from real-world experiences, industry practitioners are sharing practical lessons learned when bringing AI agents from prototype to production. This content focuses on the operational, technical, and organizational challenges that emerge when agents move beyond proof-of-concept stages. The emphasis is on patterns that actually work in production environments where reliability, cost, and user experience matter.

Analysis: The shift toward production-focused content signals maturity in the AI agent ecosystem. Early-stage hype around agent capabilities is being replaced by pragmatic discussions about deployment realities—monitoring, failure modes, latency requirements, and cost optimization. Teams building agents today need to learn from these production experiences rather than repeating the same mistakes. This is where harness engineering becomes critical: you can’t just build an agent and hope it works at scale; you need deliberate structures, verification systems, and supervision mechanisms in place before launch.

2. Test Your AI Agents Like a Hacker – Automated Prompt Injection Attacks

Security in AI agents is moving from theoretical concerns to practical necessity as agents gain more autonomy and access to sensitive systems. This resource explores how to test agents by simulating prompt injection attacks—the most direct threat vector for manipulating agent behavior. Automated testing frameworks that attempt to break agents through adversarial prompts are becoming essential parts of QA pipelines.

Analysis: As AI agents transition from passive assistants to active participants in critical workflows, security vulnerabilities become business liabilities. Prompt injection attacks represent a fundamental challenge because they operate at the semantic level—harder to detect than traditional code injection. Organizations deploying agents that interact with databases, user data, or financial systems need robust security testing before production. This highlights why harness engineering isn’t just about controlling agent behavior for performance; it’s about containment and verification. Building agents that can be reliably tested for security vulnerabilities is now table stakes.

3. AI Agents Just Went From Chatbots to Coworkers

Major technology companies are announcing shifts in how AI agents are positioned and deployed within organizations. Rather than answering questions (chatbot paradigm), agents are now being framed as team members with specific responsibilities, workflows, and integration into existing business processes. This represents a fundamental change in how enterprises think about AI adoption and ROI.

Analysis: The terminology shift from “chatbot” to “coworker” isn’t semantic fluff—it signals a real change in expectations and architecture. Coworkers need reliability, specialization, and integration with existing workflows. They need to hand off tasks to other coworkers, maintain context across multiple interactions, and be accountable for outcomes. This elevated expectation means AI agents require more sophisticated harness engineering: explicit skill definitions, clear authorization boundaries, persistent context management, and integration patterns that respect existing business processes. The agents that succeed in this “coworker” era will be those with strong foundational harness engineering.

4. How I Eliminated Context-Switch Fatigue When Working With Multiple AI Agents in Parallel

As teams deploy multiple specialized AI agents to handle different aspects of workflows, managing context across parallel agent operations becomes a practical challenge. This discussion from the community explores techniques for maintaining coherent context, avoiding redundant work, and enabling agents to work cooperatively without cognitive overhead for human supervisors.

Analysis: The emergence of “parallel agent” problems reflects real-world complexity: organizations don’t want just one general agent, they want specialized agents for different domains (data analysis, code generation, customer service, etc.). But coordinating multiple agents introduces context management challenges that feel similar to context-switching fatigue in human teams. The solutions discussed likely center on shared context repositories, event-driven coordination, and clear task boundaries. This is core harness engineering work—designing the infrastructure and supervision mechanisms that allow multiple agents to work together without creating confusion or redundant efforts. Teams building multi-agent systems need to invest in these coordination patterns early.

5. Microsoft Just Launched an AI That Does Your Office Work for You — Built on Anthropic’s Claude

Microsoft’s launch of Copilot Cowork, powered by Claude, demonstrates enterprise adoption of AI agents for office productivity tasks. This is significant because it represents a major technology company betting on AI agents as productivity multipliers within existing workflows, rather than as separate tools or chat interfaces.

Analysis: Microsoft’s choice to build on Anthropic’s Claude reflects confidence in Claude’s reasoning capabilities and alignment properties—crucial for agents handling sensitive office information. The branding as “Cowork” reinforces the coworker positioning trend. For teams evaluating AI agents, this launch signals that production-grade AI agents are becoming commercially viable and that major enterprises are seeing ROI. However, the success of Copilot Cowork will depend heavily on harness engineering: how well Microsoft has constrained agent capabilities to safe office tasks, how they’ve structured permissions and access controls, how they’ve handled context management across sessions, and how they verify that agents don’t inadvertently expose sensitive information. These behind-the-scenes harness engineering decisions determine whether the agent is genuinely useful or creates more problems than it solves.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering

This deep dive addresses the unique challenges of building agents that operate in terminal environments where stakes are higher (code changes directly affect systems) and the interface is less forgiving than typical chat. The focus on scaffolding, harness engineering, and context engineering reveals the structural thinking required for agents operating in power-user environments.

Analysis: Terminal-based coding agents represent one of the highest-stakes agent use cases: they can write, compile, and execute code that changes production systems. The explicit focus on “harness,” “scaffolding,” and “context engineering” indicates that effective coding agents aren’t just smart language models—they require extensive infrastructure for safe operation. Scaffolding refers to the structured templates and patterns that guide agent behavior. Context engineering means carefully curating what information the agent sees about the codebase, available tools, and system state. Harness engineering means the control and verification mechanisms that prevent agents from causing damage. For teams building agents in high-stakes domains, this content illustrates why the plumbing matters as much as the neural network.

7. Harness Engineering: Supervising AI Through Precision and Verification

As AI systems grow more complex and autonomous, supervision itself becomes a specialized discipline. This content explores methodologies for overseeing AI agent behavior through precise specifications and continuous verification rather than through rigid rule-based constraints that limit agent utility.

Analysis: The framing of harness engineering as a supervision discipline is crucial. It moves beyond “how do we prevent bad things from happening” to “how do we ensure good things are happening the way we intended.” Precision means defining what success looks like for an agent task in measurable, verifiable terms. Verification means building systems that continuously check whether the agent’s outputs match those specifications. This is harder than traditional software testing because AI outputs are probabilistic and context-dependent, but it’s necessary for trustworthy agents. Organizations deploying agents need to build verification into their deployment pipelines, not treat it as a post-deployment concern.

8. AI Agents: Skill & Harness Engineering Secrets REVEALED! #shorts

This short-form content highlights the relationship between skill engineering (defining and enabling agent capabilities) and harness engineering (controlling how those capabilities are exercised). The format suggests this topic is becoming mainstream enough for accessible, condensed explanations.

Analysis: The pairing of “skill” and “harness” engineering is the key insight here. Skill engineering is about making agents more capable: better tools, broader knowledge, stronger reasoning. Harness engineering is about making agents safer and more controlled: clearer boundaries, stronger verification, better oversight. Both are necessary and neither is sufficient alone. An agent with strong skills but weak harness will fail unpredictably at scale. An agent with strong harness but limited skills becomes a bottleneck. The best AI systems require investment in both dimensions. As AI agent deployment becomes mainstream, this dual-focus approach will distinguish successful systems from problematic ones.

Key Takeaway

The AI agent ecosystem is entering a production maturity phase where technical excellence moves beyond model capabilities to encompass deployment infrastructure, security testing, multi-agent coordination, and rigorous supervision mechanisms. The repeated emphasis on harness engineering across today’s news cycle reflects a community-wide recognition that how you deploy agents matters as much as what model you use. Teams successfully navigating this phase will be those that invest in scaffolding, context management, verification systems, and clear supervision structures. The agents that become genuine coworkers—reliable, secure, and integrated—will be those with the strongest harness engineering foundations.

Stay tuned for more updates as the agent engineering landscape continues to evolve.

Published: March 12, 2026 | Site: Harness Engineering AI