The AI agent ecosystem continues to accelerate at a remarkable pace. Today’s roundup highlights critical developments in agent frameworks, benchmark studies validating real-world applications, security innovations, and breakthrough model releases that are reshaping how developers build and deploy autonomous systems.

1. LangChain Remains the Cornerstone of Agent Engineering

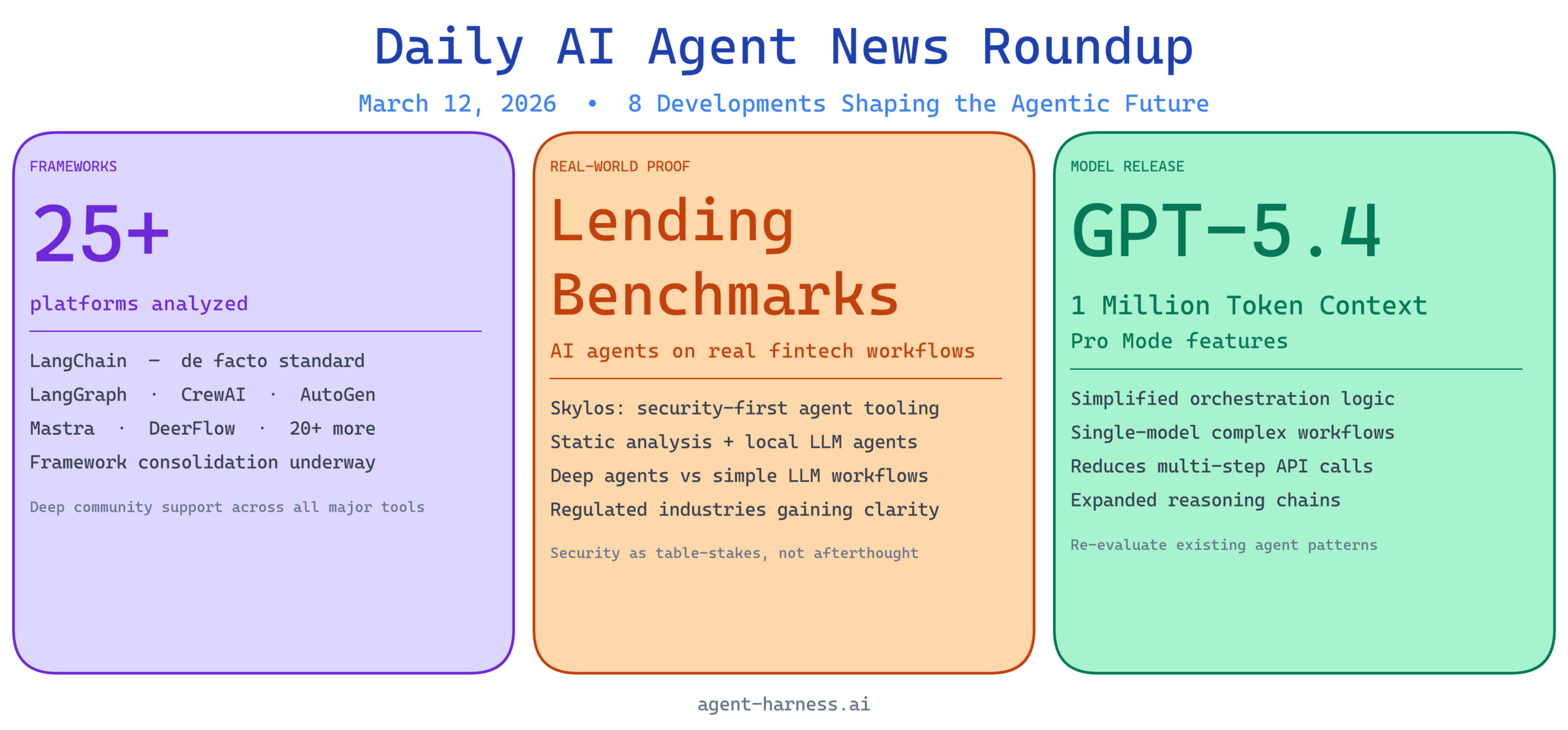

LangChain’s continued prominence in the agent development landscape underscores its critical role in the evolution of AI agent engineering. The framework’s flexibility and extensive ecosystem have made it the de facto standard for developers building sophisticated agent systems, from simple task automation to complex multi-step workflows.

Analysis: LangChain’s dominance reflects both its maturity and the broader problem of framework fragmentation in the agent ecosystem. As more frameworks compete for mindshare, LangChain’s staying power comes from its broad adoption, extensive documentation, and community contributions. For teams standardizing on agent infrastructure, LangChain represents a safe bet with deep community support and regular updates. However, developers should still evaluate more specialized options, such as LangGraph (the LangChain team’s graph-based orchestration library) and AutoGen, to determine whether a more targeted solution better fits their use case.

2. AI Agents Successfully Benchmarked on Real Lending Workflows

A significant case study demonstrates that AI agents can effectively handle authentic financial lending workflows, with detailed performance metrics now available for the community. This benchmark bridges a critical gap: understanding how agents perform in regulated, high-stakes environments where accuracy and compliance matter enormously.

Analysis: This development is transformative for the fintech and banking sectors, traditionally cautious about AI adoption due to regulatory constraints and risk exposure. The availability of real-world benchmarks provides the empirical evidence that stakeholders need to justify AI agent investments in lending operations. As regulatory frameworks continue maturing around AI in finance, benchmark studies like this become essential reference points for compliance and risk assessment. Organizations evaluating agent deployment in lending should review this case study as a starting point for their own pilot programs.

3. Skylos Brings Security-First Approach to Agent Development

Skylos introduces a novel security paradigm by combining static analysis with local LLM agents, addressing growing concerns about safety and vulnerability management in AI agent systems. This tool represents a meaningful step toward making agent development more secure by default rather than treating security as an afterthought.

Analysis: As AI agents gain more autonomy and broader system access, security vulnerabilities carry increasingly serious consequences. Skylos’s approach of embedding security checks directly into the agent development workflow is proactive rather than reactive. Running the LLM agents locally keeps code on the developer’s own infrastructure and reduces external dependencies, and pairing them with static analysis is intended to improve detection accuracy. This tool should be on the radar of organizations building agents with sensitive data access or critical system integration. The emphasis on local LLM agents also reflects a broader industry shift toward reducing reliance on external API calls for security-sensitive operations.
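To make the "static analysis plus local LLM" pairing concrete, here is a minimal sketch of the general pattern. It is not Skylos's implementation or API: the `RISKY_CALLS` list, `find_risky_calls`, and `triage` are hypothetical names chosen for illustration, and the `review` callback stands in for wherever a locally hosted model would be asked to confirm or dismiss a finding.

```python
import ast

# Hypothetical deny-list for illustration; a real tool would use far richer rules.
RISKY_CALLS = {"eval", "exec", "os.system", "subprocess.call"}


def call_name(node: ast.Call) -> str:
    """Return a dotted name such as 'os.system' for simple calls, else ''."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return ""


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Static pass: parse the source and list (line, call) pairs on the deny-list."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = call_name(node)
            if name in RISKY_CALLS:
                findings.append((node.lineno, name))
    return sorted(findings)


def triage(findings, review):
    """Second pass: keep only findings the reviewer confirms.

    `review` is an assumed callback; in a real pipeline it might wrap a
    locally hosted model so that source code never leaves the machine.
    """
    return [finding for finding in findings if review(finding)]
```

The static pass is cheap and deterministic, so it can run on every commit; the model-backed review only sees the short list of candidates, which keeps local inference costs bounded.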

4. Comprehensive 2026 Framework Comparison: 25+ AI Agent Platforms Analyzed

The community has aggregated a detailed comparison covering LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and over 20 additional frameworks, providing clarity on an increasingly fragmented landscape. This comparison addresses a critical pain point: selecting the right framework when dozens of viable options exist.

Analysis: Framework proliferation benefits the ecosystem through competition and innovation but creates decision paralysis for teams starting new projects. This comprehensive comparison is invaluable for architects and decision-makers evaluating their technology stack. Key considerations when reviewing such comparisons: your team’s existing expertise, the specific agent patterns you’ll implement (agentic loops, hierarchical agents, tool-calling workflows), and long-term vendor and community stability. Rather than seeking a universally optimal choice, teams should focus on frameworks that align with their operational model and existing toolchain. The fact that this comparison even exists reflects how mature the agent landscape has become in just the past 12 months.

5. Understanding Deep Agents vs. Simple LLM Workflows

“The Rise of the Deep Agent” explores the architectural and operational distinctions between basic LLM workflows and sophisticated, production-grade AI agents. This distinction is increasingly important as organizations move beyond proof-of-concepts into reliable, governed deployments.

Analysis: The gap between an LLM in a chat interface and a true agent system represents one of the most critical conceptual hurdles in AI adoption. Reliable agents require planning mechanisms, reflection, error handling, memory management, and tool orchestration, none of which a simple LLM call provides. Understanding this distinction prevents costly project failures where organizations assume “ChatGPT-like” capabilities will be enough for autonomous business operations. For engineering leaders and architects, “The Rise of the Deep Agent” provides essential framing on when to invest in proper agent infrastructure and when lightweight LLM integration will suffice. The emphasis on reliability is particularly crucial for regulated industries and mission-critical operations.
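The difference can be illustrated with a deliberately small sketch of an agent loop. Everything here is an assumption made for illustration: `run_agent`, the `plan` callback (which would normally be backed by a model), and the string-based memory are hypothetical and not taken from any particular framework.

```python
from typing import Callable, Optional

Tool = Callable[[str], str]
Action = Optional[tuple[str, str]]  # (tool name, argument), or None to stop


def run_agent(
    goal: str,
    plan: Callable[[str, list[str]], Action],
    tools: dict[str, Tool],
    max_steps: int = 5,
) -> list[str]:
    """Plan, act, record the outcome, and repeat until the planner stops."""
    memory: list[str] = []
    for _ in range(max_steps):  # hard step cap so a confused planner cannot loop forever
        action = plan(goal, memory)  # planning step sees everything done so far
        if action is None:
            break
        tool_name, arg = action
        try:
            result = tools[tool_name](arg)  # tool orchestration
        except Exception as exc:
            # Error handling: record the failure so the planner can react to it
            result = f"error: {exc}"
        memory.append(f"{tool_name}({arg}) -> {result}")
    return memory
```

A single LLM call corresponds to one invocation of `plan` with no loop around it. The loop, the step cap, the recorded failures, and the memory passed back into planning are the parts that a plain chat-style integration leaves out.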

6. Weekly AI Updates Include Major Model Releases

OpenAI’s GPT-5.4 release, featuring expanded context windows, represents a significant capability jump for agents that need to process and maintain extended conversations and complex problem domains. This week’s broader AI updates signal rapid advancement across multiple domains.

Analysis: Expanded context windows directly impact agent capabilities, enabling more sophisticated reasoning chains and longer-term memory management. Agents with million-token contexts can process entire codebases, comprehensive documentation, or extended conversation histories without truncation. This capability amplifies agent effectiveness in complex domains like software engineering, legal document analysis, and research synthesis. However, expanded context comes with latency and cost tradeoffs that require careful optimization. Teams should evaluate whether their use cases genuinely benefit from extended context or whether intelligent chunking and retrieval strategies provide better performance characteristics.
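As a rough sketch of the chunking-and-retrieval alternative, the snippet below splits a document into fixed-size word chunks and selects the few that best match a query, so only those chunks need to be sent to the model. The function names and the word-overlap scoring are assumptions chosen for brevity; production systems typically use embedding-based similarity instead.

```python
def chunk(text: str, size: int) -> list[str]:
    """Split text into consecutive chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def top_chunks(chunks: list[str], query: str, k: int) -> list[str]:
    """Return the k chunks sharing the most words with the query.

    Word overlap is a stand-in for a real relevance score; ties keep
    their original document order because Python's sort is stable.
    """
    query_words = set(query.lower().split())
    ranked = sorted(
        chunks,
        key=lambda c: len(query_words & set(c.lower().split())),
        reverse=True,
    )
    return ranked[:k]
```

With this approach the prompt size depends on `k` and the chunk size rather than on the full document length, which is the tradeoff to weigh against simply loading everything into a very large context window.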

7. GPT-5.4 Released: 1 Million Token Context + Pro Mode Features

OpenAI’s latest model release introduces Pro Mode—additional capabilities layered on top of the already-impressive million-token context window. This represents a significant inflection point for both consumer and enterprise agent applications.

Analysis: The combination of massive context windows and Pro Mode features dramatically expands what’s possible within a single model call. For agents built on GPT-5.4, this means reduced need for multiple API calls, simpler orchestration logic, and potentially lower latency. The Pro Mode features likely address common pain points like structured output enforcement, reasoning token control, and advanced planning capabilities. Organizations standardizing on GPT-based agents should carefully evaluate how GPT-5.4 changes their architecture, since some existing multi-step agent patterns may collapse into simpler single-model workflows.

8. Latest AI Updates: Rapid Evolution Across the Stack

This week’s roundup of “crazy AI updates” highlights the relentless pace of model advancement, new capabilities, and framework innovations. The velocity of change makes staying current a core responsibility for AI practitioners.

Analysis: The frequency and significance of weekly AI updates create both opportunity and challenge. Each breakthrough, whether in model capability, framework maturity, or developer tooling, potentially impacts decisions made just weeks earlier. Rather than chasing every new release, teams should establish a cadence for evaluating major developments and maintain a prioritized watchlist of emerging capabilities. This week’s updates underscore why continuous learning and experimentation remain essential in AI agent development. Organizations should allocate dedicated engineering time for exploring and testing new capabilities rather than waiting for maturity signals that may never come in a rapidly evolving space.

Key Takeaways

The AI agent landscape is maturing rapidly across multiple dimensions. Framework consolidation is occurring even as new platforms emerge, benchmarking is validating real-world deployment, security is becoming table stakes rather than an afterthought, and foundational models are advancing faster than most organizations can integrate them.

For decision-makers: Focus on framework selection and governance principles rather than chasing every new tool. Establish internal standards for agent development, security validation, and performance measurement.

For engineers: Invest in understanding the distinction between simple LLM integration and sophisticated agent systems. Familiarize yourself with at least two or three frameworks to understand the design tradeoffs that different platforms make.

For organizations: The convergence of refined frameworks, validated benchmarks, and powerful foundational models creates a genuine inflection point. Scaling agent pilots into production systems is now feasible; the question is whether your operational and governance processes can keep pace with the technology.

Last updated: March 12, 2026 | Next roundup: March 13, 2026