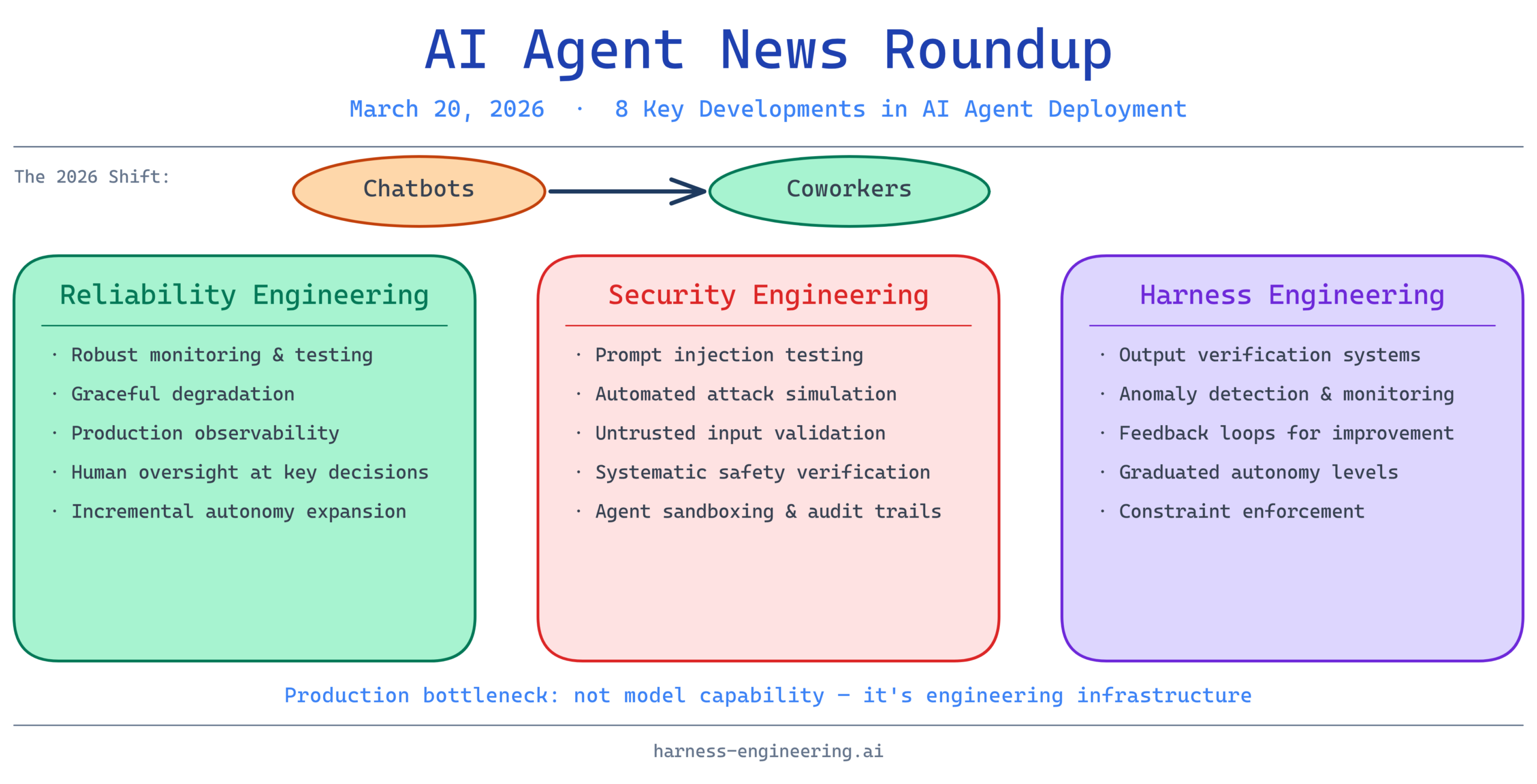

The AI agent landscape continues to evolve rapidly, with the industry shifting from experimental chatbot deployments to production-ready systems integrated into everyday workflows. This week, we’re seeing critical discussions around deployment best practices, security vulnerabilities, and the emerging concept of “harness engineering”—the specialized discipline of building reliable oversight mechanisms for autonomous AI systems. Below are the key stories shaping AI agent development in 2026.

1. Lessons From Building and Deploying AI Agents to Production

Real-world deployments reveal that production-ready AI agents require far more than powerful underlying models—they demand robust monitoring, graceful fallback mechanisms, and clear ownership boundaries. This presentation synthesizes lessons from teams that have moved beyond proof-of-concepts, highlighting how observability, testing strategies, and feedback loops become critical as agents handle increasingly important tasks.

The gap between what works in a research environment and what succeeds in production remains substantial. Teams deploying agents at scale emphasize the importance of incrementally expanding agent autonomy, maintaining human oversight at critical decision points, and designing systems that degrade gracefully when uncertainty is high.

2. Test Your AI Agents Like a Hacker – Automated Prompt Injection Attacks

Prompt injection attacks represent one of the most underestimated security risks in agent systems, yet they remain difficult to detect and prevent at scale. This session explores automated frameworks for testing agents against injection vulnerabilities—treating security testing with the same rigor that traditional software teams apply to code vulnerabilities.

Understanding these attack vectors is essential as agents increasingly interact with untrusted inputs from end-users, documents, and external APIs. The methodology here shifts the security mindset from “just be careful” to “systematically verify safety properties,” which is fundamental to deploying trustworthy autonomous systems.

3. AI Agents Just Went From Chatbots to Coworkers

Recent announcements from major technology companies signal a fundamental reframing of AI agents—no longer peripheral tools, but integrated participants in knowledge work. This transition reflects maturation in areas like task complexity, context handling, and human-AI collaboration patterns that now enable agents to contribute meaningfully alongside human teams.

The implications are profound for organizations evaluating AI adoption strategies. Agents as “coworkers” requires different thinking about onboarding, error correction, and organizational adaptation than the “assistant” framing of earlier years. This represents a structural shift in how enterprises will design their processes and skill sets.

4. How I Eliminated Context-Switch Fatigue When Working With Multiple AI Agents in Parallel

Managing multiple autonomous agents operating in parallel introduces cognitive and coordination challenges that weren’t present with single-agent systems. This discussion highlights practical patterns for reducing the mental burden—from structured communication protocols to clear responsibility boundaries and asynchronous orchestration.

As organizations deploy multiple specialized agents for different functions, these coordination patterns become increasingly important. The solution set here ranges from simple conventions to more sophisticated orchestration frameworks, but the underlying principle remains: explicit communication and clear ownership reduce uncertainty and human cognitive load.

5. Microsoft Just Launched an AI That Does Your Office Work for You — Built on Anthropic’s Claude

Microsoft’s Copilot Cowork represents a watershed moment in enterprise AI adoption—moving beyond content assistance to autonomous task execution in everyday office applications. The choice to build on Anthropic’s Claude signals trust in the underlying model for handling the complexity and nuance required in office automation.

This deployment pattern validates that enterprises are ready to delegate significant portions of routine work to AI agents, provided there’s clear auditability and the ability to maintain human oversight. The success of this launch will likely accelerate adoption cycles across the enterprise software market.

6. Building AI Coding Agents for the Terminal: Scaffolding, Harness, Context Engineering, and Beyond

Terminal-based coding agents represent a particularly demanding use case—they operate in highly variable environments, interact with complex build systems, and their errors can cascade through entire development pipelines. This presentation explores the specialized engineering required: proper scaffolding to constrain agent actions, harness frameworks to verify outputs, and context engineering techniques to handle the large state spaces typical of development environments.

The terminal environment exemplifies why “harness engineering” is becoming a distinct discipline. Unlike chatbot applications where errors are often recoverable, incorrect code generation can cause production failures. The techniques discussed here—from intelligent output verification to graduated autonomy levels—are foundational for deploying agents in safety-critical domains.

7. Harness Engineering: Supervising AI Through Precision and Verification

Harness engineering encompasses the entire infrastructure for intelligent oversight: verification systems that check outputs against constraints, monitoring that detects anomalous behavior, and feedback mechanisms that improve agent performance over time. This framing positions oversight not as a limitation, but as the active ingredient that transforms raw capability into reliable systems.

The core insight is that sophisticated supervision itself requires engineering discipline. Rather than hoping agents “do the right thing,” harness engineering provides concrete mechanisms to detect failures, constrain actions, and continuously validate correctness. This approach has proven essential in safety-critical applications, from autonomous vehicles to financial trading systems.

8. AI Agents: Skill and Harness Engineering Secrets REVEALED! #shorts

The interplay between skill engineering (the capabilities agents develop) and harness engineering (the oversight mechanisms) determines whether agents become reliable additions to organizations or sources of unexpected failures. This short-form content distills the essential tension: maximizing agent capability without adequate oversight leads to uncontrollable systems, while over-constraining agents reduces their usefulness.

The “secrets” here amount to practical principles: focus harness engineering on high-consequence decisions, allow agent autonomy where the cost of error is low, and treat agent behavior as data that should inform both skill and harness improvements iteratively.

The Bigger Picture: From Autonomy to Accountability

This week’s developments reflect a maturing industry moving beyond “Can we build this?” to “How do we deploy this responsibly?” The consistent theme across these stories is that production-ready AI agents require deliberate engineering in three dimensions:

Reliability Engineering ensures agents work correctly under real-world conditions, with robust testing, monitoring, and graceful degradation when uncertainty is high.

Security Engineering recognizes that autonomous systems interacting with untrusted inputs face novel attack surfaces (particularly prompt injection), requiring systematic vulnerability testing and input validation.

Oversight Engineering (harness engineering) provides the verification, monitoring, and feedback mechanisms that transform raw agent capability into trustworthy systems suitable for high-stakes domains.

Organizations moving agents from proof-of-concept to production should recognize that the bottleneck is no longer model capability—it’s engineering infrastructure for safe, reliable deployment. The good news is that this infrastructure is increasingly well-understood, with patterns emerging across industries from software development to office automation to autonomous systems.

The transition from chatbots to coworkers reflects not just capability improvements, but the maturation of these engineering disciplines. As more organizations deploy agents at scale, those that invest in harness engineering, security testing, and production observability will generate reliable, auditable systems. Those that don’t will face increasing operational friction and trust deficits.

The AI agent era is here—the question now is whether we engineer it responsibly.