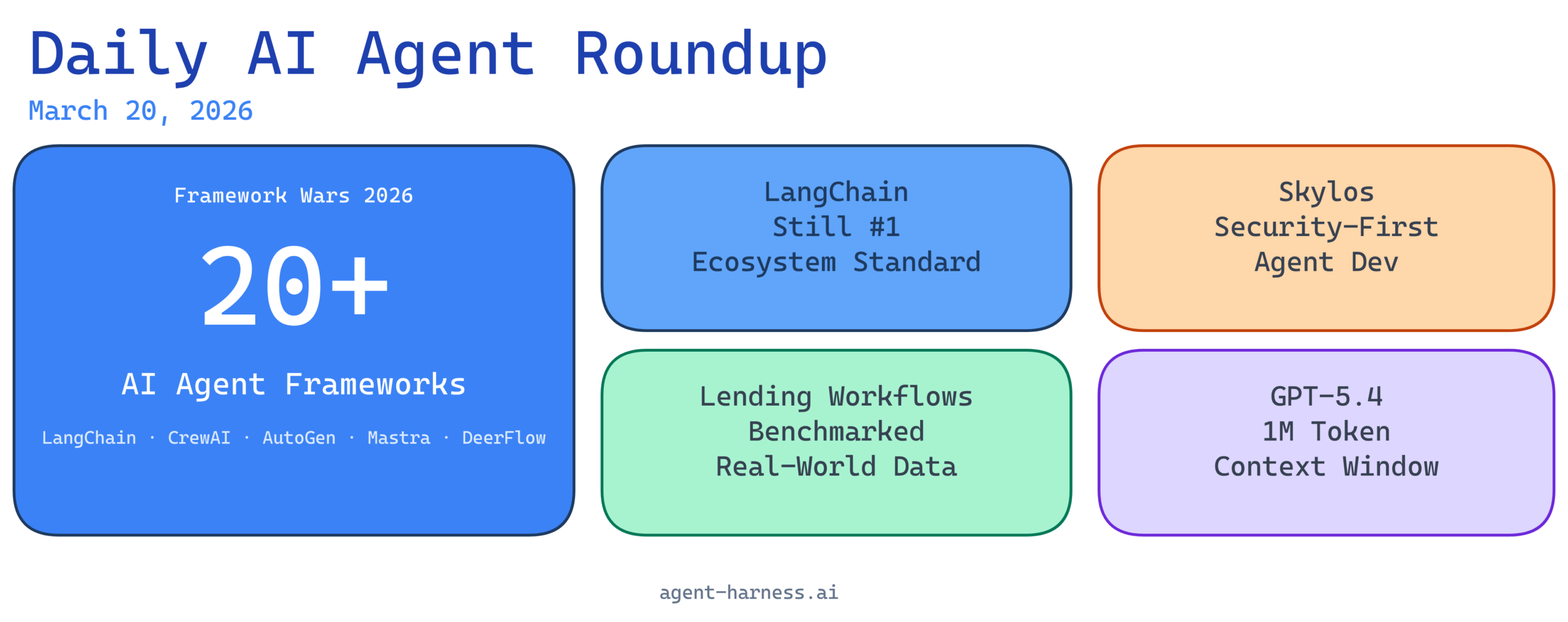

The AI agent ecosystem keeps moving quickly. This week brings developments across agent frameworks, benchmarking studies, security tooling, and major model releases that affect how developers build, deploy, and evaluate agent systems. From LangChain’s continued lead among frameworks to performance studies on lending workflows, the landscape reflects both the maturation of agent technology and the emergence of specialized tools that address practical concerns like security and framework selection.

1. LangChain’s Continued Dominance in Agent Engineering

The langchain-ai/langchain repository continues to be the de facto standard for AI agent development, with its ecosystem expanding to include increasingly sophisticated tools for orchestration, memory management, and integration patterns. The framework’s prominence underscores the importance of developer experience and community support in the competitive landscape of AI agent frameworks.

LangChain’s position as the industry standard reflects more than just early-mover advantage—it demonstrates how comprehensive documentation, active maintenance, and a thriving community ecosystem create moats around development platforms. For teams building production AI agents, LangChain remains the safest architectural choice, though this status quo is being increasingly challenged by specialized alternatives optimized for specific use cases.

2. Benchmarking AI Agents on Real Lending Workflows

A detailed case study on r/aiagents presents comprehensive benchmarking data showing how AI agents perform on real-world lending operations, moving beyond theoretical benchmarks to measure practical effectiveness in financial services. This research addresses a critical gap: while AI agents show promise in controlled environments, understanding their performance on actual workflows with real constraints and data is essential for enterprise adoption.

Financial services is one of the highest-stakes domains for AI agent deployment, where errors translate directly into revenue loss and regulatory exposure. This benchmarking work gives financial institutions concrete data for evaluating agent-based automation in loan processing, risk assessment, and customer service workflows. The study’s focus on real lending workflows rather than synthetic datasets makes it particularly valuable for organizations skeptical of AI agent capabilities in regulated industries.

3. Skylos: Secure AI Agent Development with Static Analysis

Skylos emerges as a significant security-focused tool combining static analysis with local LLM agents, addressing growing concerns about vulnerability detection and secure coding practices in agent systems. As AI agents gain access to more sensitive operations and integrated systems, the need for security-first development tools has become paramount.

This approach represents an important trend toward embedding security into the agent development lifecycle rather than treating it as an afterthought. By combining traditional static analysis—proven effective for identifying common vulnerabilities—with the reasoning capabilities of local LLM agents, Skylos offers a hybrid approach that could significantly reduce security incidents in deployed agent systems. For enterprises operating agents in regulated environments or handling sensitive data, tools like Skylos may become non-negotiable dependencies.
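The hybrid idea can be sketched in a few lines. The example below is an illustration only and does not reflect how Skylos is implemented: the rule set `RISKY_CALLS`, the function names, and the stubbed `review_with_local_llm` step are all assumptions made for this sketch. It runs a deterministic static pass with Python's standard `ast` module, then hands each finding to a placeholder where a local model would add reasoning.

```python
import ast

# Hypothetical rule set for illustration; not Skylos's actual rules.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> list[dict]:
    """Static pass: walk the AST and flag direct calls to names in RISKY_CALLS."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append({"line": node.lineno, "call": node.func.id})
    return sorted(findings, key=lambda f: f["line"])


def review_with_local_llm(finding: dict, source: str) -> str:
    """Placeholder for the LLM pass (an assumption of this sketch).

    A real tool would send the flagged snippet to a locally hosted model and
    return its assessment. This stub only formats the finding for review.
    """
    snippet = source.splitlines()[finding["line"] - 1].strip()
    return f"line {finding['line']}: review use of {finding['call']}() in `{snippet}`"


SAMPLE = "x = input()\nresult = eval(x)\nprint(result)\n"
findings = find_risky_calls(SAMPLE)
reports = [review_with_local_llm(f, SAMPLE) for f in findings]
```

The static pass keeps detection cheap and repeatable, while the model step, stubbed here, is where context-dependent judgment about whether a flagged call is actually exploitable would go.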

4. Comprehensive 2026 Framework Comparison: 20+ AI Agent Frameworks Analyzed

A thorough comparison of over 20 AI agent frameworks—including LangChain, LangGraph, CrewAI, AutoGen, Mastra, DeerFlow, and others—provides developers with the data needed to make informed architectural decisions in an increasingly fragmented ecosystem. This meta-analysis reveals clear differentiation between frameworks: some optimize for simplicity, others for advanced agentic reasoning, and still others for domain-specific applications.

The proliferation of frameworks indicates both the immaturity of the market and the difficulty of creating a one-size-fits-all solution for agent development. While LangChain remains the most flexible option, specialized frameworks like CrewAI (optimized for multi-agent coordination) and AutoGen (strong on researcher-friendly abstractions) are carving out meaningful niches. For CTOs and technical leaders, this comparison is essential reading when evaluating how to standardize agent development across their organizations.

5. Understanding Deep Agents: Beyond Basic LLM Workflows

A new video deep-dive titled “The Rise of the Deep Agent: What’s Inside Your Coding Agent” explores the critical distinction between simple LLM-as-orchestrator workflows and sophisticated, reliable AI agents with genuine reasoning capabilities. The video argues that as AI coding tools mature, the gap between “chatbot with a hammer” and “true agent” becomes increasingly important for practitioners to understand.

This content speaks to an important inflection point in AI agent maturity. Early-stage agent implementations often amount to prompt chaining with fallback mechanisms—reliable enough for simple tasks but brittle at scale. True deep agents combine reasoning, planning, tool use, and error recovery in ways that fundamentally differ from linear prompt workflows. As enterprises invest in AI agent infrastructure, understanding this distinction prevents costly implementations that fail under real-world complexity.
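One piece of that distinction, error recovery, can be shown with a toy example. This is a minimal sketch under stated assumptions, not the video's method or any framework's API: `flaky_lookup` is a hypothetical tool that fails on its first call, and the loop retries blindly where a real agent would ask a model to plan the next action.

```python
def flaky_lookup(query: str, attempt: int) -> str:
    """Hypothetical tool that times out on its first attempt."""
    if attempt == 0:
        raise TimeoutError("tool timed out")
    return f"result for {query}"


def linear_chain(query: str) -> str:
    """Prompt-chain style: the step runs once, and any failure aborts the run."""
    return flaky_lookup(query, attempt=0)


def agent_loop(query: str, max_steps: int = 3) -> tuple[str, list[str]]:
    """Loop style: observe the outcome of each action and retry on failure."""
    trace = []
    for attempt in range(max_steps):
        try:
            result = flaky_lookup(query, attempt)
        except TimeoutError as exc:
            # Record the failure and try again instead of aborting.
            trace.append(f"step {attempt}: failed ({exc}), retrying")
            continue
        trace.append(f"step {attempt}: succeeded")
        return result, trace
    raise RuntimeError("gave up after max_steps")
```

Calling `linear_chain("rates")` raises `TimeoutError`, while `agent_loop("rates")` recovers on the second step and returns both the result and a trace of what happened. The bounded `max_steps` is a deliberate choice: a loop that can recover also needs a point at which it stops.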

6. OpenAI Releases GPT-5.4 with 1 Million Token Context Window

OpenAI’s announcement of GPT-5.4 represents a significant leap in model capabilities, featuring an expanded 1 million token context window alongside a new Pro Mode for advanced reasoning. This release directly impacts AI agent performance by enabling agents to maintain coherent context across vastly larger documents, codebases, and conversation histories.

The expanded context window matters most for agent applications that previously required aggressive context management and chunking strategies. Agents can now load entire repositories, complex business documents, or extended user interaction histories into a single prompt, as long as they fit within the 1 million token limit, instead of splitting them into pieces. The Pro Mode addition suggests OpenAI is moving toward pricing differentiation based on reasoning depth. That would signal that enterprise customers are willing to pay for more robust agentic behavior, validating the market opportunity in this space.
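For readers who have not had to write it, the chunking work that a larger window reduces looks roughly like this. The sketch is an assumption-laden simplification: it counts whitespace-separated words as a crude stand-in for tokens (real tokenizers count differently), and both function names are hypothetical.

```python
def chunk_by_budget(text: str, max_tokens: int) -> list[str]:
    """Split text into chunks of at most max_tokens whitespace-separated words.

    Word count is only an approximation of token count.
    """
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    words = text.split()
    return [
        " ".join(words[i:i + max_tokens])
        for i in range(0, len(words), max_tokens)
    ]


def fits_in_context(text: str, context_window: int) -> bool:
    """Return True if the text fits in the window without chunking."""
    return len(text.split()) <= context_window
```

With a small budget, a document has to be split and each piece handled separately, which is where cross-chunk references get lost. With a budget of 1,000,000, `fits_in_context` returns `True` for far more inputs and the splitting step can often be skipped.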

7. This Week’s AI Landscape: Key Updates and Implications

Roundup coverage of recent AI developments highlights how quickly the AI landscape is shifting, with multiple releases and announcements reshaping both base model capabilities and the frameworks built atop them. This week’s updates reinforce that staying current with model releases is now a core responsibility for AI engineering teams, as capability improvements directly enable new agent architectures and applications.

The frequency and significance of updates—particularly from OpenAI and other frontier labs—create both opportunities and challenges for teams building on these systems. Teams must balance the temptation to chase the latest capabilities against the operational stability required for production systems. The GPT-5.4 release, combined with improvements in reasoning and context handling, suggests that 2026 will be the year agent systems move from experimental to broadly deployable, assuming teams can manage the continuous evolution of their dependencies.

The Week Ahead: What This Means for AI Agent Developers

Taken together, these developments point to an ecosystem approaching an inflection point. LangChain’s continued evolution, benchmarking data from real-world workflows, the emergence of security tooling, and frontier model improvements all signal that AI agents are graduating from research artifacts to production infrastructure.

For developers: The framework comparison highlights that specialization is increasingly valuable—choosing the right tool for your specific problem (whether multi-agent coordination, coding assistance, or domain automation) now matters more than defaulting to the most popular option.

For enterprises: The lending workflow benchmarks and security tools suggest that cost-benefit analyses for agent deployment are now possible with real data, making the business case for agent automation increasingly concrete rather than speculative.

For the industry: The million-token context window and Pro Mode pricing indicate that model providers are leaning into agentic applications as a primary use case, with pricing and capability improvements specifically optimized for agent workloads rather than interactive chat.

As we move deeper into 2026, expect to see the emergence of specialized agent platforms targeting vertical-specific problems—agents purpose-built for financial services, healthcare, customer support, and software engineering. The current wave of horizontal frameworks is maturing into a segment where vertical depth and domain expertise become competitive advantages.

Agent Harness covers the most important developments in AI agent engineering and deployment. Subscribe for daily roundups tracking frameworks, models, tools, and best practices shaping the future of intelligent automation.